Ambari User's Guide

Overview

Hadoop is a large-scale, distributed data storage and processing infrastructure using

clusters of commodity hosts networked together. Monitoring and managing such complex

distributed systems is a non-trivial task. To help you manage the complexity, Apache

Ambari collects a wide range of information from the cluster's nodes and services

and presents it to you in an easy-to-read and use, centralized web interface, Ambari

Web.

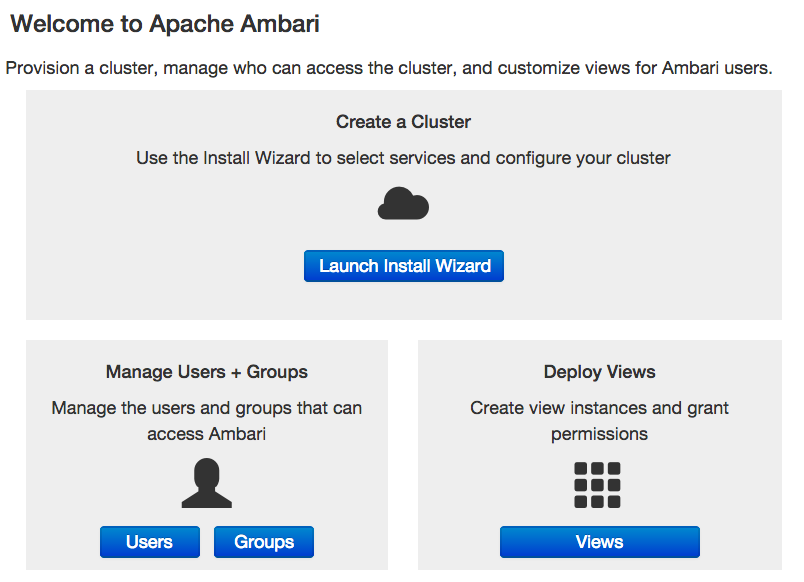

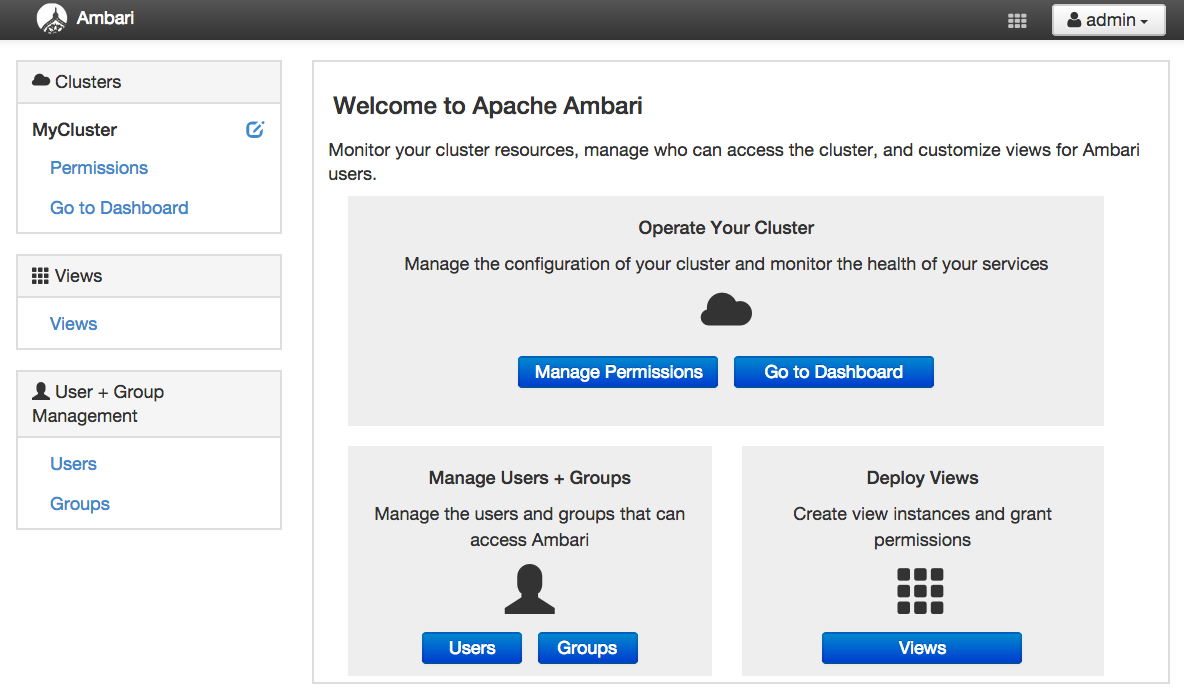

Ambari Web displays information such as service-specific summaries, graphs, and alerts.

You use Ambari Web to create and manage your HDP cluster and to perform basic operational

tasks such as starting and stopping services, adding hosts to your cluster, and updating

service configurations. You also use Ambari Web to perform administrative tasks for

your cluster, such as managing users and groups and deploying Ambari Views.

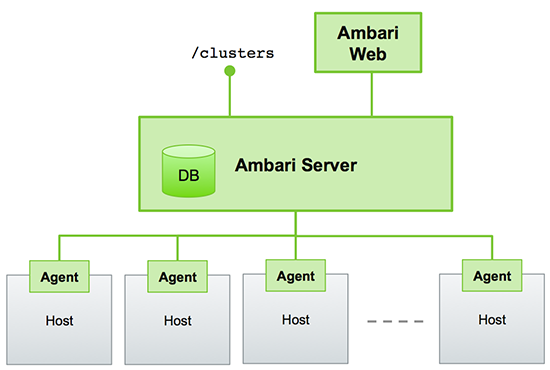

Architecture

The Ambari Server serves as the collection point for data from across your cluster.

Each host has a copy of the Ambari Agent - either installed automatically by the Install

wizard or manually - which allows the Ambari Server to control each host. In addition,

each host has a copy of Ganglia Monitor (gmond), which collects metric information that is passed to the Ganglia Connector, and

then on to the Ambari Server.

Figure - Ambari Server Architecture

Sessions

Ambari Web is a client-side JavaScript application, which calls the Ambari REST API (accessible from the Ambari Server) to access cluster information and perform cluster operations. After authenticating to Ambari Web, the application authenticates to the Ambari Server. Communication between the browser and server occurs asynchronously via the REST API.

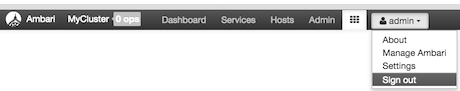

Ambari Web sessions do not time out. The Ambari Server application constantly accesses the Ambari REST API, which resets the session timeout. During any period of Ambari Web inactivity, the Ambari Web user interface (UI) refreshes automatically. You must explicitly sign out of the Ambari Web UI to destroy the Ambari session with the server.

Accessing Ambari Web

Typically, you start the Ambari Server and Ambari Web as part of the installation process. If Ambari Server is stopped, you can start it using a command line editor on the Ambari Server host machine. Enter the following command:

ambari-server start

To access Ambari Web, open a supported browser and enter the Ambari Web URL:

http://<your.ambari.server>:8080

Enter your user name and password. If this is the first time Ambari Web is accessed,

use the default values, admin/admin.

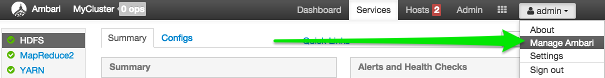

These values can be changed, and new users provisioned, using the Manage Ambari option.

For more information about managing users and other administrative tasks, see Administering Ambari.

Monitoring and Managing your HDP Cluster with Ambari

This topic describes how to use Ambari Web features to monitor and manage your HDP cluster. To navigate, select one of the following feature tabs located at the top of the Ambari main window. The selected tab appears white.

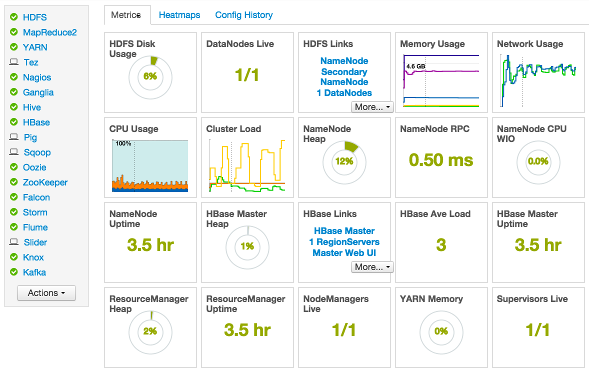

Viewing Metrics on the Dashboard

Ambari Web displays the Dashboard page as the home page. Use the Dashboard to view the operating status of your cluster in the following three ways:

Scanning System Metrics

View Metrics that indicate the operating status of your cluster on the Ambari Dashboard. Each metrics widget displays status information for a single service in your HDP cluster. The Ambari Dashboard displays all metrics for the HDFS, YARN, HBase, and Storm services, and cluster-wide metrics by default.

You can add and remove individual widgets, and rearrange the dashboard by dragging and dropping each widget to a new location in the dashboard.

Status information appears as simple pie and bar charts, more complex charts showing usage and load, sets of links to additional data sources, and values for operating parameters such as uptime and average RPC queue wait times. Most widgets display a single fact by default. For example, HDFS Disk Usage displays a load chart and a percentage figure. The Ambari Dashboard includes metrics for the following services:

|

Metric: |

Description: |

|---|---|

|

HDFS |

|

|

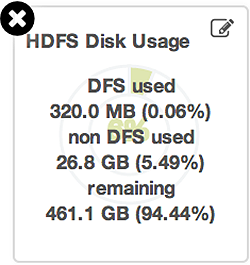

HDFS Disk Usage |

The Percentage of DFS used, which is a combination of DFS and non-DFS used. |

|

Data Nodes Live |

The number of DataNodes live, as reported from the NameNode. |

|

NameNode Heap |

The percentage of NameNode JVM Heap used. |

|

NameNode RPC |

The average RPC queue latency. |

|

NameNode CPU WIO |

The percentage of CPU Wait I/O. |

|

NameNode Uptime |

The NameNode uptime calculation. |

|

YARNHDP 2.0 and 2.1 Stacks |

|

|

ResourceManager Heap |

The percentage of ResourceManager JVM Heap used. |

|

ResourceManager Uptime |

The ResourceManager uptime calculation. |

|

NodeManagers Live |

The number of DataNodes live, as reported from the ResourceManager. |

|

YARN Memory |

The percentage of available YARN memory (used vs. total available). |

|

HBase |

|

|

HBase Master Heap |

The percentage of NameNode JVM Heap used. |

|

HBase Ave Load |

The average load on the HBase server. |

|

HBase Master Uptime |

The HBase Master uptime calculation. |

|

Region in Transition |

The number of HBase regions in transition. |

|

StormHDP 2.1 Stack |

|

|

Supervisors Live |

The number of Supervisors live, as reported from the Nimbus server. |

|

MapReduceHDP 1.3 Stack |

|

|

JobTracker Heap |

The percentage of JobTracker JVM Heap used. |

|

TaskTrackers Live |

The number of TaskTrackers live, as reported from the JobTracker. |

Drilling Into Metrics for a Service

-

To see more detailed information about a service, hover your cursor over a Metrics widget.

More detailed information about the service displays, as shown in the following example:

-

To remove a widget from the mashup, click the white X.

-

To edit the display of information in a widget, click the pencil icon. For more information about editing a widget, see Customizing Metrics Display.

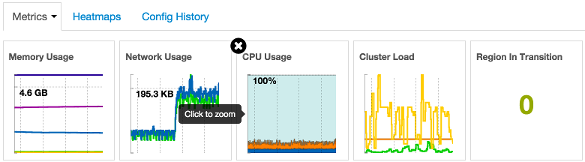

Viewing Cluster-Wide Metrics

Cluster-wide metrics display information that represents your whole cluster. The Ambari Dashboard shows the following cluster-wide metrics:

|

Metric: |

Description: |

|---|---|

|

Memory Usage |

The cluster-wide memory utilization, including memory cached, swapped, used, shared. |

|

Network Usage |

The cluster-wide network utilization, including in-and-out. |

|

CPU Usage |

Cluster-wide CPU information, including system, user and wait IO. |

|

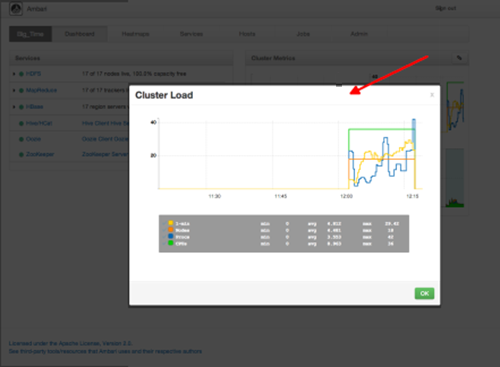

Cluster Load |

Cluster-wide Load information, including total number of nodes. total number of CPUs, number of running processes and 1-min Load. |

-

To remove a widget from the dashboard, click the white X.

-

Hover your cursor over each cluster-wide metric to magnify the chart or itemize the widget display.

-

To remove or add metric items from each cluster-wide metric widget, select the item on the widget legend.

-

To see a larger view of the chart, select the magnifying glass icon.

Ambari displays a larger version of the widget in a pop-out window, as shown in the following example:

Use the pop-up window in the same ways that you use cluster-wide metric widgets on the dashboard.

To close the widget pop-up window, choose OK.

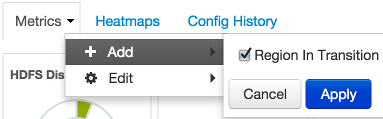

Adding a Widget to the Dashboard

To replace a widget that has been removed from the dashboard:

-

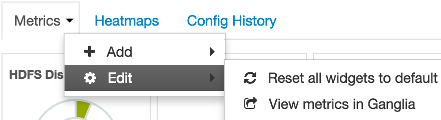

Select the Metrics drop-down, as shown in the following example:

-

Choose Add.

-

Select a metric, such as Region in Transition.

-

Choose Apply.

Resetting the Dashboard

To reset all widgets on the dashboard to display default settings:

-

Select the Metrics drop-down, as shown in the following example:

-

Choose Edit.

-

Choose Reset all widgets to default.

Viewing Metrics in Ganglia

To view metrics for your cluster using the Ganglia UI:

-

Select the Metrics drop-down:

-

Choose Edit.

-

Choose View Metrics in Ganglia.

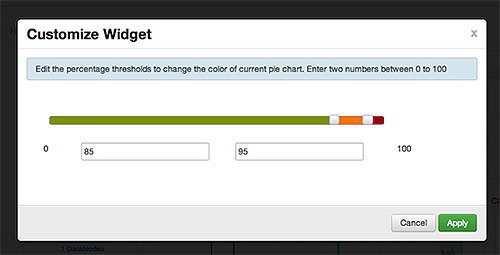

Customizing Metrics Display

To customize the way a service widget displays metrics information:

-

Hover your cursor over a service widget.

-

Select the pencil-shaped, edit icon that appears in the upper-right corner.

The Customize Widget pop-up window displays properties that you can edit, as shown in the following example.

-

Follow the instructions in the Customize Widget pop-up to customize widget appearance.

In this example, you can adjust the thresholds at which the HDFS Capacity bar chart changes color, from green to orange to red.

-

To save your changes and close the editor, choose

Apply. -

To close the editor without saving any changes, choose

Cancel.

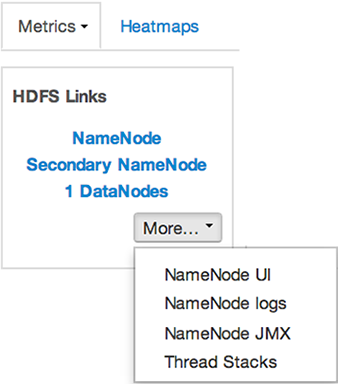

Viewing More Metrics for your HDP Stack

The HDFS Links and HBase Links widgets list HDP components for which links to more metrics information, such as thread stacks, logs and native component UIs are available. For example, you can link to NameNode, Secondary NameNode, and DataNode components for HDFS, using the links shown in the following example:

Choose the More drop-down to select from the list of links available for each service. The Ambari

Dashboard includes additional links to metrics for the following services:

|

Service: |

Metric: |

Description: |

|---|---|---|

|

HDFS |

||

|

NameNode UI |

Links to the NameNode UI. |

|

|

NameNode Logs |

Links to the NameNode logs. |

|

|

NameNode JMX |

Links to the NameNode JMX servlet. |

|

|

Thread Stacks |

Links to the NameNode thread stack traces. |

|

|

HBase |

||

|

HBase Master UI |

Links to the HBase Master UI. |

|

|

HBase Logs |

Links to the HBase logs. |

|

|

ZooKeeper Info |

Links to ZooKeeper information. |

|

|

HBase Master JPX |

Links to the HBase Master JMX servlet. |

|

|

Debug Dump |

Links to debug information. |

|

|

Thread Stacks |

Links to the HBase Master thread stack traces. |

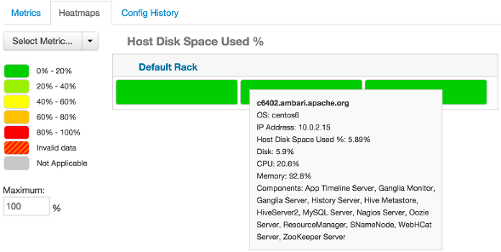

Viewing Heatmaps

Heatmaps provides a graphical representation of your overall cluster utilization using simple color coding.

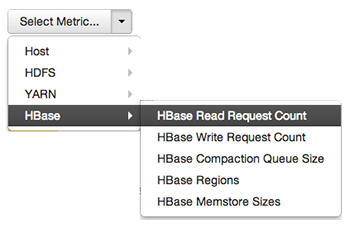

A colored block represents each host in your cluster. To see more information about a specific host, hover over the block representing the host in which you are interested. A pop-up window displays metrics about HDP components installed on that host. Colors displayed in the block represent usage in a unit appropriate for the selected set of metrics. If any data necessary to determine state is not available, the block displays "Invalid Data". Changing the default maximum values for the heatmap lets you fine tune the representation. Use the Select Metric drop-down to select the metric type.

Heatmaps supports the following metrics:

|

Metric |

Uses |

|---|---|

|

Host/Disk Space Used % |

disk.disk_free and disk.disk_total |

|

Host/Memory Used % |

memory.mem_free and memory.mem_total |

|

Host/CPU Wait I/O % |

cpu.cpu_wio |

|

HDFS/Bytes Read |

dfs.datanode.bytes_read |

|

HDFS/Bytes Written |

dfs.datanode.bytes_written |

|

HDFS/Garbage Collection Time |

jvm.gcTimeMillis |

|

HDFS/JVM Heap MemoryUsed |

jvm.memHeapUsedM |

|

YARN/Garbage Collection Time |

jvm.gcTimeMillis |

|

YARN / JVM Heap Memory Used |

jvm.memHeapUsedM |

|

YARN / Memory used % |

UsedMemoryMB and AvailableMemoryMB |

|

HBase/RegionServer read request count |

hbase.regionserver.readRequestsCount |

|

HBase/RegionServer write request count |

hbase.regionserver.writeRequestsCount |

|

HBase/RegionServer compaction queue size |

hbase.regionserver.compactionQueueSize |

|

HBase/RegionServer regions |

hbase.regionserver.regions |

|

HBase/RegionServer memstore sizes |

hbase.regionserver.memstoreSizeMB |

Scanning Status

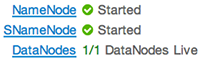

Notice the color of the dot appearing next to each component name in a list of components, services or hosts. The dot color and blinking action indicates operating status of each component, service, or host. For example, in the Summary View, notice green dot next to each service name. The following colors and actions indicate service status:

|

Color |

Status |

|---|---|

|

Solid Green |

All masters are running |

|

Blinking Green |

Starting up |

|

Solid Red |

At least one master is down |

|

Blinking Red |

Stopping |

Click the service name to open the Services screen, where you can see more detailed information on each service.

Managing Hosts

Use Ambari Hosts to manage multiple HDP components such as DataNodes, NameNodes, TaskTrackers and RegionServers, running on hosts throughout your cluster. For example, you can restart all DataNode components, optionally controlling that task with rolling restarts. Ambari Hosts supports filtering your selection of host components, based on operating status, host health, and defined host groupings.

Working with Hosts

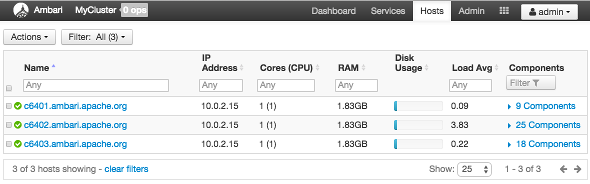

Use Hosts to view hosts in your cluster on which Hadoop services run. Use options on Actions to perform actions on one or more hosts in your cluster.

View individual hosts, listed by fully-qualified domain name, on the Hosts landing page.

Determining Host Status

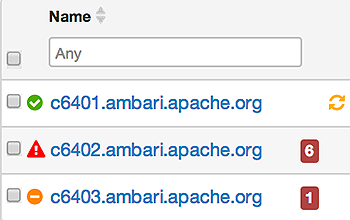

A colored dot beside each host name indicates operating status of each host, as follows:

-

Red - At least one master component on that host is down. Hover to see a tooltip that lists affected components.

-

Orange - At least one slave component on that host is down. Hover to see a tooltip that lists affected components.

-

Yellow - Ambari Server has not received a heartbeat from that host for more than 3 minutes.

-

Green - Normal running state.

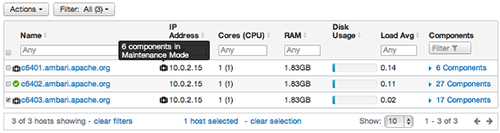

A red condition flag overrides an orange condition flag, which overrides a yellow condition flag. In other words, a host having a master component down may also have other issues. The following example shows three hosts, one having a master component down, one having a slave component down, and one healthy. Warning indicators appear next to hosts having a component down.

Filtering the Hosts List

Use Filters to limit listed hosts to only those having a specific operating status. The number of hosts in your cluster having a listed operating status appears after each status name, in parenthesis. For example, the following cluster has one host having healthy status and three hosts having Maintenance Mode turned on.

For example, to limit the list of hosts appearing on Hosts home to only those with Healthy status, select Filters, then choose the Healthy option. In this case, one host name appears on Hosts home. Alternatively, to limit the list of hosts appearing on Hosts home to only those having Maintenance Mode on, select Filters, then choose the Maintenance Mode option. In this case, three host names appear on Hosts home.

Use the general filter tool to apply specific search and sort criteria that limits the list of hosts appearing on the Hosts page.

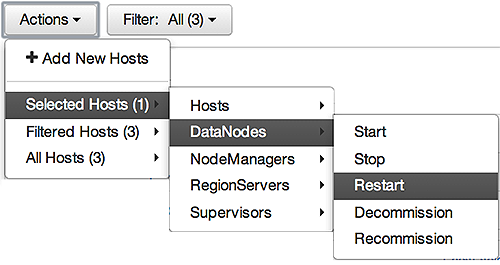

Performing Host-Level Actions

Use Actions to act on one, or multiple hosts in your cluster. Actions performed on multiple hosts are also known as bulk operations.

Actions comprises three menus that list the following options types:

-

Hosts - lists selected, filtered or all hosts options, based on your selections made using Hosts home and Filters.

-

Objects - lists component objects that match your host selection criteria.

-

Operations - lists all operations available for the component objects you selected.

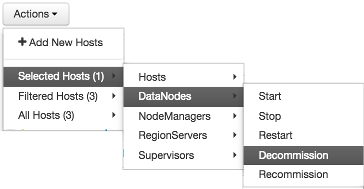

For example, to restart DataNodes on one host:

-

In Hosts, select a host running at least one DataNode.

-

In Actions, choose

Selected Hosts > DataNodes > Restart, as shown in the following image.

-

Choose OK to confirm starting the selected operation.

-

Optionally, use Monitoring Background Operations to follow, diagnose or troubleshoot the restart operation.

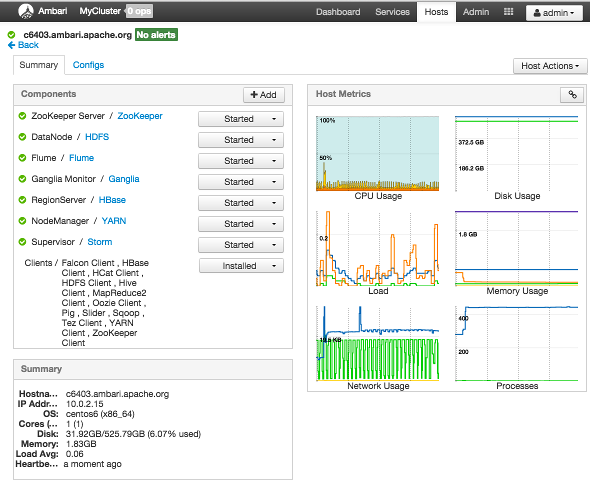

Viewing Components on a Host

To manage components running on a specific host, choose a FQDN on the Hosts page. For example, choose c6403.ambari.apache.org in the default example shown. Summary-Components lists all components installed on that host.

Choose options in Host Actions, to start, stop, restart, delete, or turn on maintenance mode for all components

installed on the selected host.

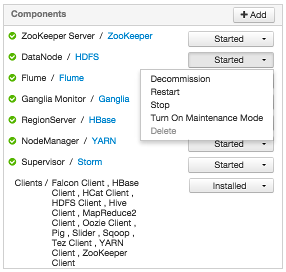

Alternatively, choose action options from the drop-down menu next to an individual component on a host. The drop-down menu shows current operation status for each component, For example, you can decommission, restart, or stop the DataNode component (started) for HDFS, by selecting one of the options shown in the following example:

Decommissioning Masters and Slaves

Decommissioning is a process that supports removing a component from the cluster. You must decommission a master or slave running on a host before removing the component or host from service. Decommissioning helps prevent potential loss of data or service disruption. Decommissioning is available for the following component types:

-

DataNodes

-

NodeManagers

-

TaskTrackers

-

RegionServers

Decommissioning executes the following tasks:

-

For DataNodes, safely replicates the HDFS data to other DataNodes in the cluster.

-

For NodeManagers and TaskTrackers, stops accepting new job requests from the masters and stops the component.

-

For RegionServers, turns on drain mode and stops the component.

How to Decommission a Component

To decommission a component using Ambari Web, browse Hosts to find the host FQDN on which the component resides.

Using Actions, select HostsComponent Type, then choose Decommission.

For example:

The UI shows "Decommissioning" status while steps process, then "Decommissioned" when complete.

How to Delete a Component

To delete a component using Ambari Web, on Hosts choose the host FQDN on which the component resides.

-

In

Components, find a decommissioned component. -

Stop the component, if necessary.

-

For a decommissioned component, choose Delete from the component drop-down menu.

-

Restart the Ganglia and Nagios services.

Deleting a slave component, such as a DataNode does not automatically inform a master component, such as a NameNode to remove the slave component from its exclusion list. Adding a deleted slave component back into the cluster presents the following issue; the added slave remains decommissioned from the master's perspective. Restart the master component, as a work-around.

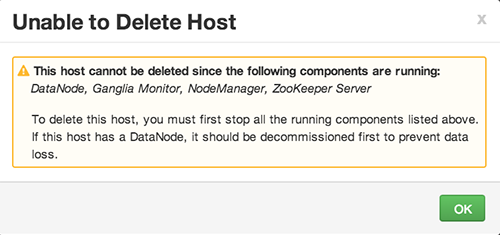

Deleting a Host from a Cluster

Deleting a host removes the host from the cluster. Before deleting a host, you must complete the following prerequisites:

-

Stop all components running on the host.

-

Decommission any DataNodes running on the host.

-

Move from the host any master components, such as NameNode or ResourceManager, running on the host.

-

Turn Off Maintenance Mode, if necessary, for the host.

How to Delete a Host from a Cluster

-

In Hosts, click on a host name.

-

On the Host-Details page, select Host Actions drop-down menu.

-

Choose Delete.

If you have not completed prerequisite steps, a warning message similar to the following one appears:

Setting Maintenance Mode

Maintenance Mode supports suppressing alerts and skipping bulk operations for specific services, components and hosts in an Ambari-managed cluster. You typically turn on Maintenance Mode when performing hardware or software maintenance, changing configuration settings, troubleshooting, decommissioning, or removing cluster nodes. You may place a service, component, or host object in Maintenance Mode before you perform necessary maintenance or troubleshooting tasks.

Maintenance Mode affects a service, component, or host object in the following two ways:

-

Maintenance Mode suppresses alerts, warnings and status change indicators generated for the object

-

Maintenance Mode exempts an object from host-level or service-level bulk operations

Explicitly turning on Maintenance Mode for a service implicitly turns on Maintenance Mode for components and hosts that run the service. While Maintenance Mode On prevents bulk operations being performed on the service, component, or host, you may explicitly start and stop a service, component, or host having Maintenance Mode On.

Setting Maintenance Mode for Services, Components, and Hosts

For example, examine using Maintenance Mode in a 3-node, Ambari-managed cluster installed using default options. This cluster has one data node, on host c6403. This example describes how to explicitly turn on Maintenance Mode for the HDFS service, alternative procedures for explicitly turning on Maintenance Mode for a host, and the implicit effects of turning on Maintenance Mode for a service, a component and a host.

How to Turn On Maintenance Mode for a Service

-

Using Services, select

HDFS. -

Select Service Actions, then choose

Turn On Maintenance Mode. -

Choose OK to confirm.

Notice, on Services Summary that Maintenance Mode turns on for the NameNode and SNameNode components.

How to Turn On Maintenance Mode for a Host

-

Using Hosts, select c6401.ambari.apache.org.

-

Select

Host Actions, then chooseTurn On Maintenance Mode. -

Choose OK to confirm.

Notice on Components, that Maintenance Mode turns on for all components.

How to Turn On Maintenance Mode for a Host (alternative using filtering for hosts)

-

Using Hosts, select c6403.ambari.apache.org.

-

In

Actions > Selected Hosts > HostschooseTurn On Maintenance Mode. -

Choose

OKto confirm.Notice that Maintenance Mode turns on for host c6403.ambari.apache.org.

Your list of Hosts now shows Maintenance Mode On for hosts c6401 and c6403.

-

Hover your cursor over each Maintenance Mode icon appearing in the Hosts list.

-

Notice that hosts c6401 and c6403 have Maintenance Mode On.

-

Notice that on host c6401; Ganglia Monitor, HbaseMaster, HDFS client, NameNode, and Zookeeper Server have Maintenance Mode turned On.

-

Notice on host c6402, that HDFS client and Secondary NameNode have Maintenance Mode On.

-

Notice on host c6403, that 15 components have Maintenance Mode On.

-

-

The following behavior also results:

-

Alerts are suppressed for the DataNode.

-

DataNode is skipped from HDFS Start/Stop/Restart All, Rolling Restart.

-

DataNode is skipped from all Bulk Operations except Turn Maintenance Mode ON/OFF.

-

DataNode is skipped from Start All and / Stop All components.

-

DataNode is skipped from a host-level restart/restart all/stop all/start.

-

Maintenance Mode Use Cases

Four common Maintenance Mode Use Cases follow:

-

You want to perform hardware, firmware, or OS maintenance on a host.

You want to:

-

Prevent alerts generated by all components on this host.

-

Be able to stop, start, and restart each component on the host.

-

Prevent host-level or service-level bulk operations from starting, stopping, or restarting components on this host.

To achieve these goals, turn On Maintenance Mode explicitly for the host. Putting a host in Maintenance Mode implicitly puts all components on that host in Maintenance Mode.

-

-

You want to test a service configuration change. You will stop, start, and restart the service using a rolling restart to test whether restarting picks up the change.

You want:

-

No alerts generated by any components in this service.

-

To prevent host-level or service-level bulk operations from starting, stopping, or restarting components in this service.

To achieve these goals, turn on Maintenance Mode explicitly for the service. Putting a service in Maintenance Mode implicitly turns on Maintenance Mode for all components in the service.

-

-

You turn off a service completely.

You want:

-

The service to generate no warnings.

-

To ensure that no components start, stop, or restart due to host-level actions or bulk operations.

To achieve these goals, turn On Maintenance Mode explicitly for the service. Putting a service in Maintenance Mode implicitly turns on Maintenance Mode for all components in the service.

-

-

A host component is generating alerts.

You want to:

-

Check the component.

-

Assess warnings and alerts generated for the component.

-

Prevent alerts generated by the component while you check its condition.

-

To achieve these goals, turn on Maintenance Mode explicitly for the host component. Putting a host component in Maintenance Mode prevents host-level and service-level bulk operations from starting or restarting the component. You can restart the component explicitly while Maintenance Mode is on.

Adding Hosts to a Cluster

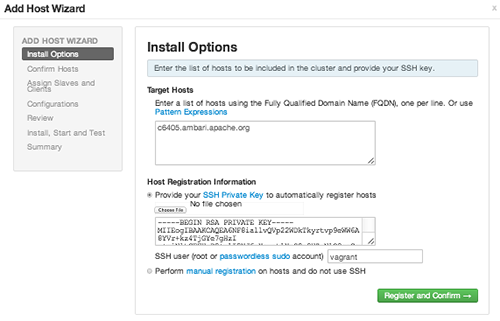

To add new hosts to your cluster, browse to the Hosts page and select Actions > +Add New Hosts. The Add Host Wizard provides a sequence of prompts similar to those in the Ambari Install Wizard. Follow

the prompts, providing information similar to that provided to define the first set

of hosts in your cluster.

Managing Services

Use Services to monitor and manage selected services running in your Hadoop cluster.

All services installed in your cluster are listed in the leftmost Services panel.

Services supports the following tasks:

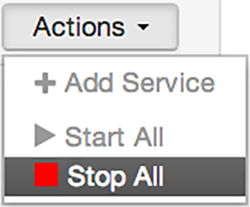

Starting and Stopping All Services

To start or stop all listed services at once, select Actions, then choose Start All or Stop All, as shown in the following example:

Selecting a Service

Selecting a service name from the list shows current summary, alert, and health information for the selected service. To refresh the monitoring panels and show information about a different service, select a different service name from the list.

Notice the colored dot next to each service name, indicating service operating status and a small, red, numbered rectangle indicating any alerts generated for the service.

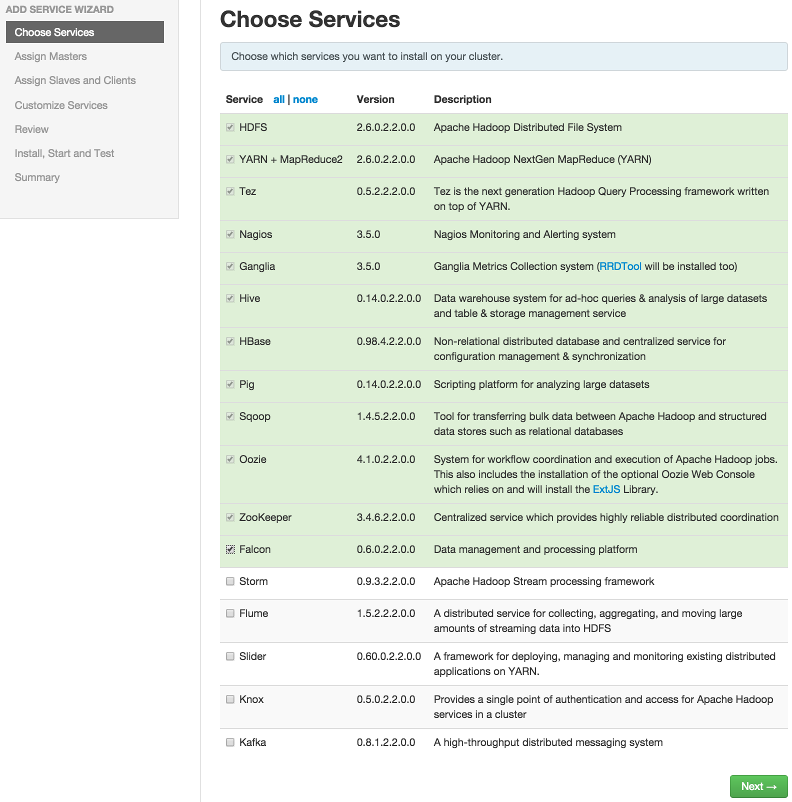

Adding a Service

The Ambari install wizard installs all available Hadoop services by default. You may

choose to deploy only some services initially, then add other services at later times.

For example, many customers deploy only core Hadoop services initially. Add Service supports deploying additional services without interrupting operations in your Hadoop

cluster. When you have deployed all available services, Add Service displays disabled.

For example, if you are using HDP 2.2 Stack and did not install Falcon or Storm, you

can use the Add Service capability to add those services to your cluster.

To add a service, select Actions > Add Service, then complete the following procedure using the Add Service Wizard.

Adding a Service to your Hadoop cluster

This example shows the Falcon service selected for addition.

-

Choose

Services.Choose an available service. Alternatively, choose all to add all available services to your cluster. Then, choose Next. The Add Service wizard displays installed services highlighted green and check-marked, not available for selection.

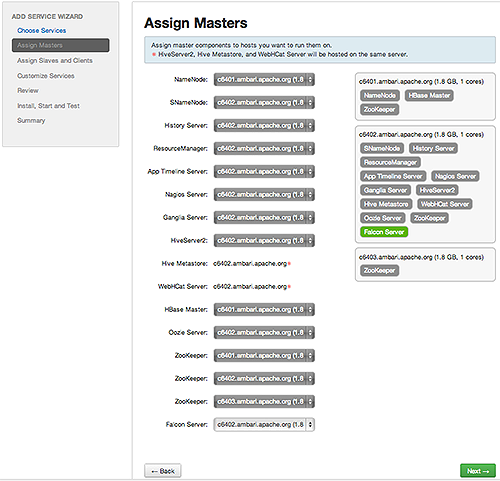

-

In

Assign Masters, confirm the default host assignment. Alternatively, choose a different host machine to which master components for your selected service will be added. Then, choose Next.The Add Services Wizard indicates hosts on which the master components for a chosen service will be installed. A service chosen for addition shows a grey check mark.

Using the drop-down, choose an alternate host name, if necessary.-

A green label located on the host to which its master components will be added, or

-

An active drop-down list on which available host names appear.

-

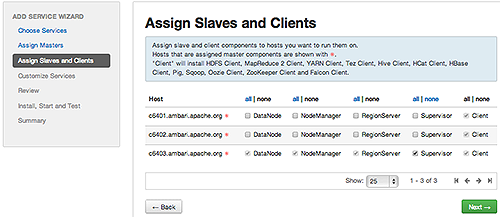

-

In

Assign Slaves and Clients, accept the default assignment of slave and client components to hosts. Then, choose Next.Alternatively, select hosts on which you want to install slave and client components. You must select at least one host for the slave of each service being added.

Service Added

Host Role Required

MapReduce

TaskTracker

YARN

NodeManager

HBase

RegionServer

Host Roles Required for Added Services

The Add Service Wizard skips and disables the Assign Slaves and Clients step for a service requiring no slave nor client assignment.

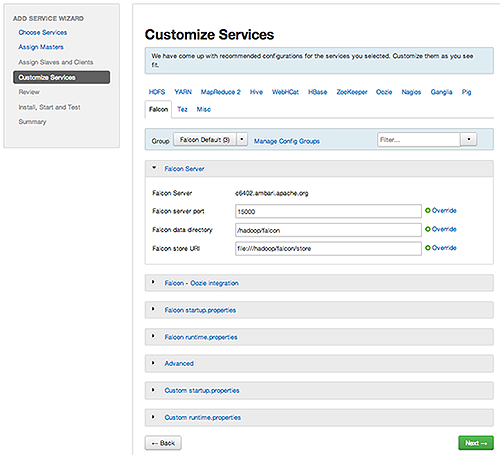

-

In

Customize Services, accept the default configuration properties.Alternatively, edit the default values for configuration properties, if necessary. Choose Override to create a configuration group for this service. Then, choose Next.

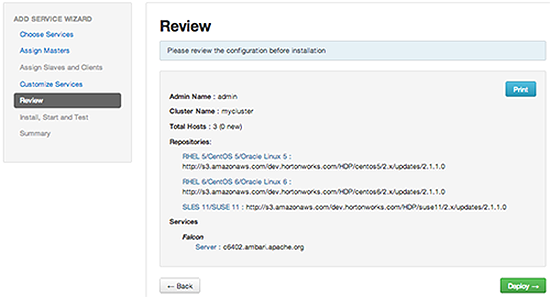

-

In Review, make sure the configuration settings match your intentions. Then, choose Deploy.

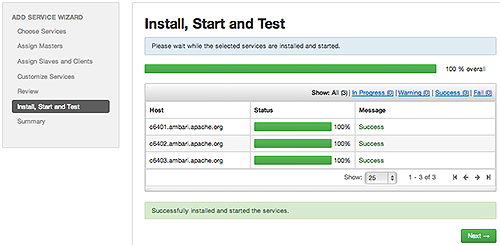

-

Monitor the progress of installing, starting, and testing the service. When the service installs and starts successfully, choose Next.

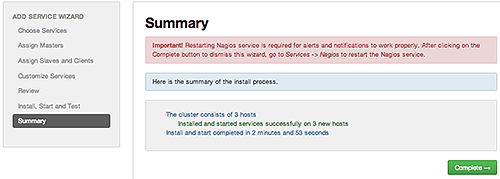

-

Summary displays the results of installing the service. Choose Complete.

-

Restart the Nagios service and any other components having stale configurations.

If you do not restart Nagios service after completing the Add Service Wizard, alerts and notifications may not work properly.

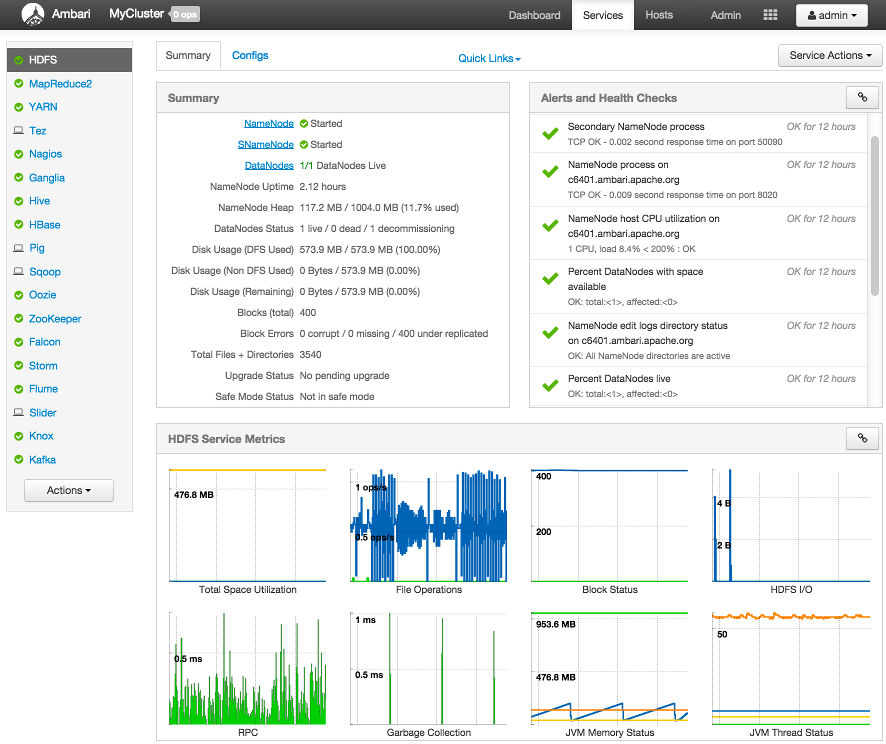

Viewing Summary, Alert, and Health Information

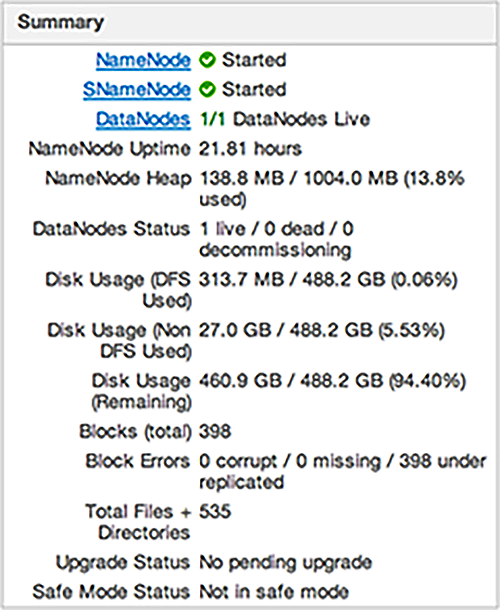

After you select a service, the Summary tab displays basic information about the selected service.

Select one of the View Host links, as shown in the following example, to view components and the host on which

the selected service is running.

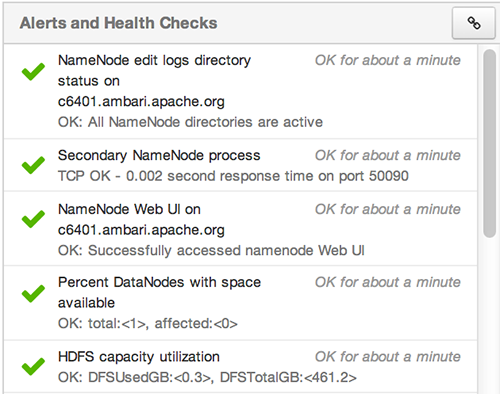

Alerts and Health Checks

View results of the health checks performed on your cluster by Nagios in Alerts and Health Checks. Alerts and Health Checks displays a list of each issue and its rating, sorted first

by descending severity, then by descending time. To access more detailed information,

select the native Nagios GUI link located at the upper right corner of the panel.

Use the Nagios credentials you set up during installation to log in to Nagios.

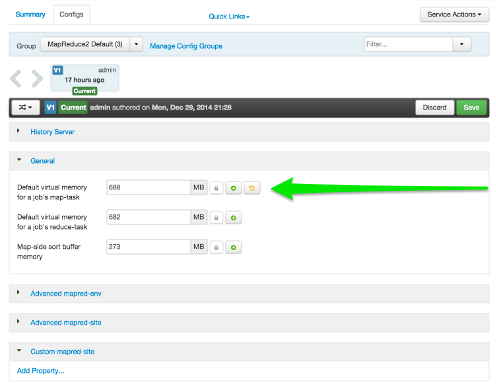

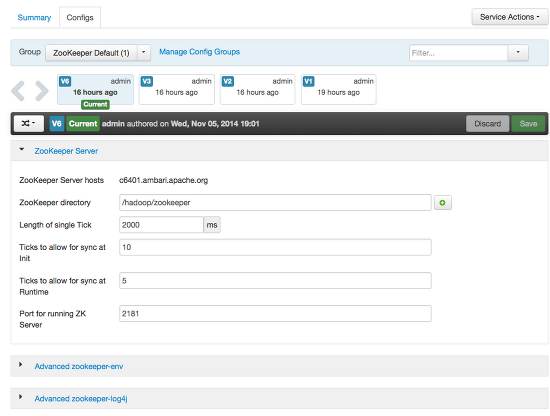

Editing Service Config Properties

Select a service, then select Configs to view and update configuration properties for the selected service. For example,

select MapReduce2, then select Configs. Expand a config category to view configurable

service properties. For example, select General to configure Default virtual memory

for a job's map task.

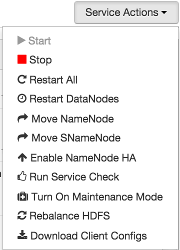

Performing Service Actions

Manage a selected service on your cluster by performing service actions. In Services, select the Service Actions drop-down menu, then choose an option. Available options depend on the service you

have selected. For example, HDFS service action options include:

Optionally, choose Turn On Maintenance Mode to suppress alerts generated by a service before performing a service action. Maintenance

Mode suppresses alerts and status indicator changes generated by the service, while

allowing you to start, stop, restart, move, or perform maintenance tasks on the service.

For more information about how Maintenance Mode affects bulk operations for host components,

see Maintenance Mode.

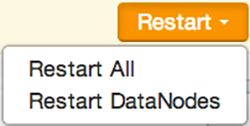

Rolling Restarts

When you restart multiple services, components, or hosts, use rolling restarts to distribute the task; minimizing cluster downtime and service disruption. A rolling restart stops, then starts multiple, running slave components such as DataNodes, TaskTrackers, NodeManagers, RegionServers, or Supervisors, using a batch sequence. You set rolling restart parameter values to control the number of, time between, tolerance for failures, and limits for restarts of many components across large clusters.

To run a rolling restart:

-

Select a Service, then link to a list of specific components or hosts that Require Restart.

-

Select Restart, then choose a slave component option.

-

Review and set values for Rolling Restart Parameters.

-

Optionally, reset the flag to only restart components with changed configurations.

-

Choose Trigger Restart.

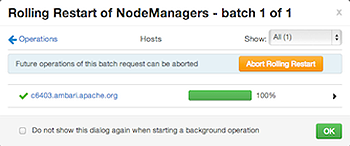

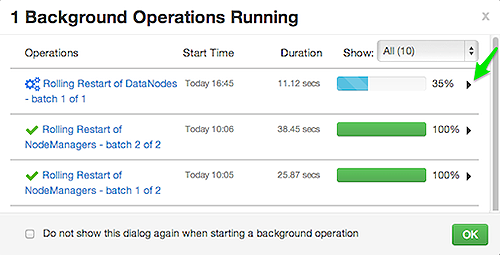

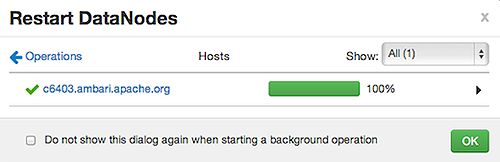

Use Monitor Background Operations to monitor progress of rolling restarts.

Setting Rolling Restart Parameters

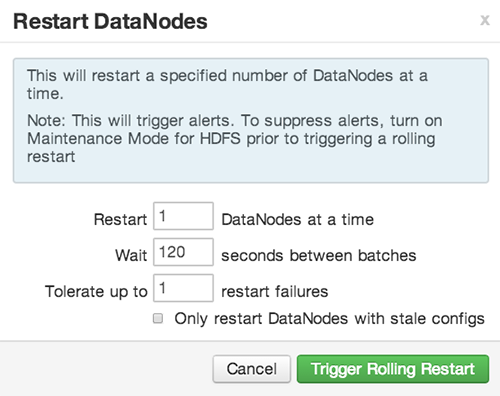

When you choose to restart slave components, use parameters to control how restarts of components roll. Parameter values based on ten percent of the total number of components in your cluster are set as default values. For example, default settings for a rolling restart of components in a 3-node cluster restarts one component at a time, waits two minutes between restarts, will proceed if only one failure occurs, and restarts all existing components that run this service.

If you trigger a rolling restart of components, Restart components with stale configs defaults to true. If you trigger a rolling restart of services, Restart services with stale configs defaults to false.

Rolling restart parameter values must satisfy the following criteria:

|

Parameter |

Required |

Value |

Description |

|---|---|---|---|

|

Batch Size |

Yes |

Must be an integer > 0 |

Number of components to include in each restart batch. |

|

Wait Time |

Yes |

Must be an integer > = 0 |

Time (in seconds) to wait between queuing each batch of components. |

|

Tolerate up to x failures |

Yes |

Must be an integer > = 0 |

Total number of restart failures to tolerate, across all batches, before halting the restarts and not queuing batches. |

Aborting a Rolling Restart

To abort future restart operations in the batch, choose Abort Rolling Restart.

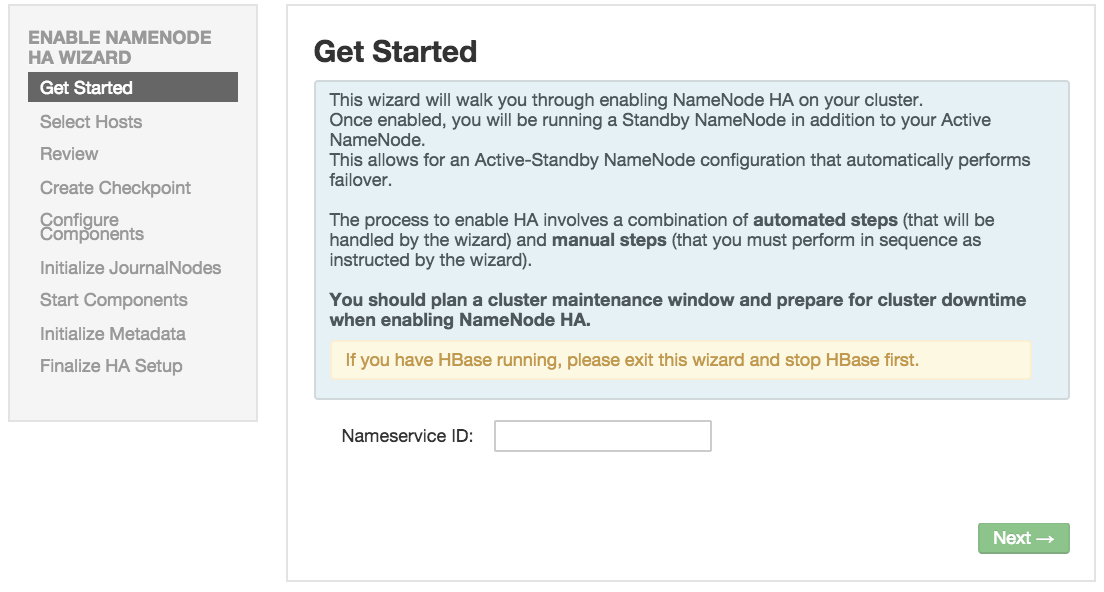

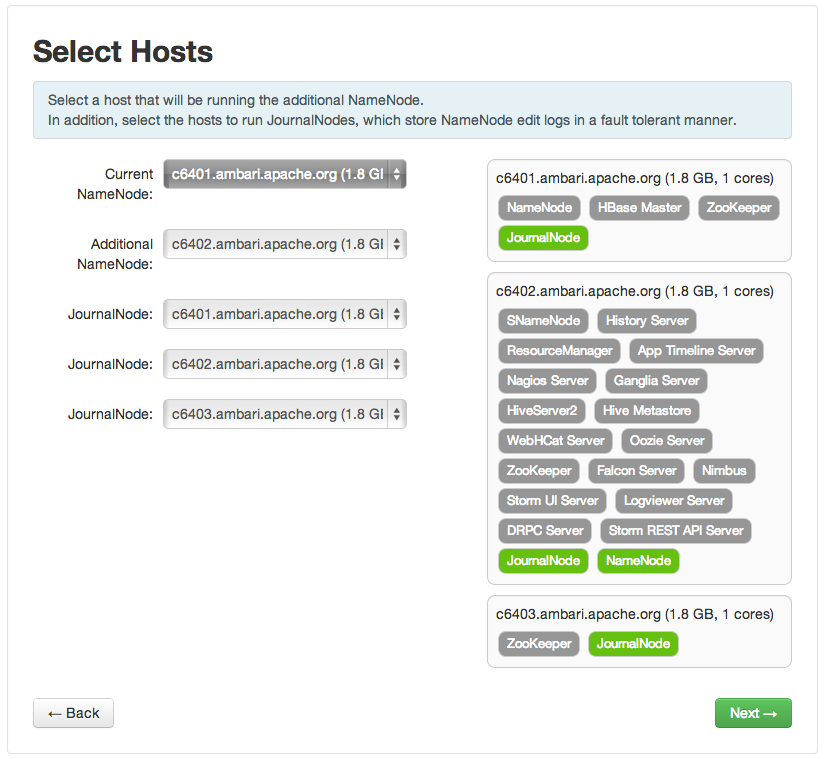

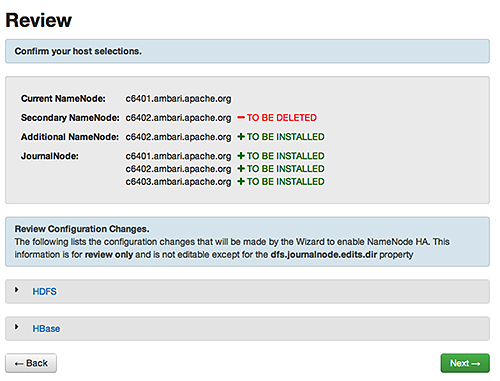

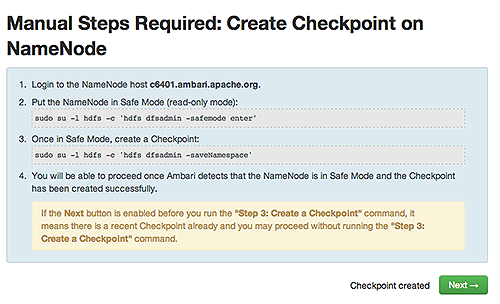

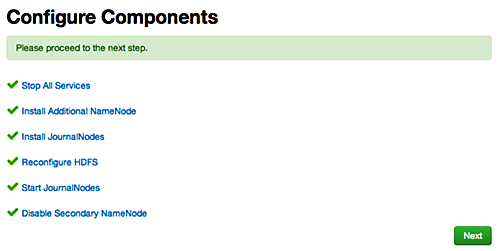

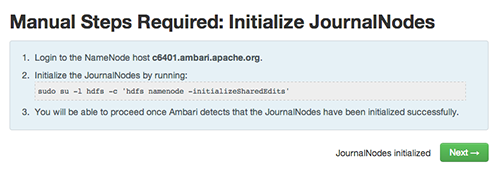

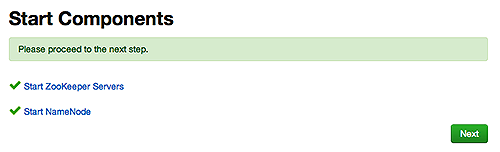

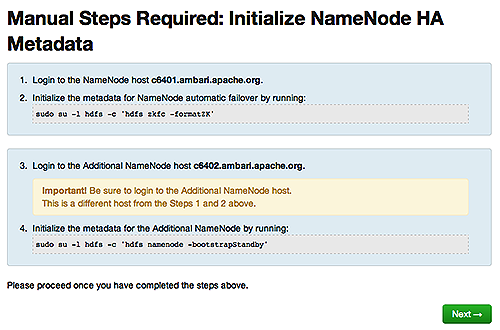

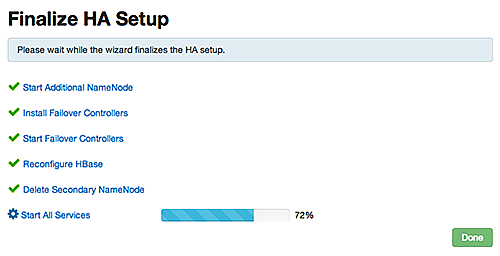

Enabling NameNode High Availability

To ensure that a NameNode in your cluster is always available if the primary NameNode

host fails, enable and set up NameNode High Availability on your cluster using Ambari

Web.

In Ambari Web, browse to Services > HDFS > Summary, select Service Actions and then choose Enable NameNode HA. Follow the steps in the Enable NameNode HA Wizard.

For more information about using the Enable NameNode HA Wizard to set up NameNode

High Availability, see Configuring NameNode High Availability.

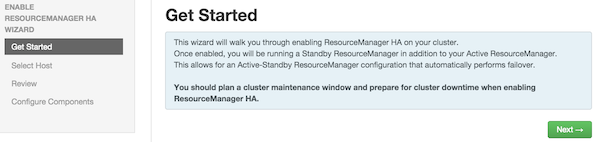

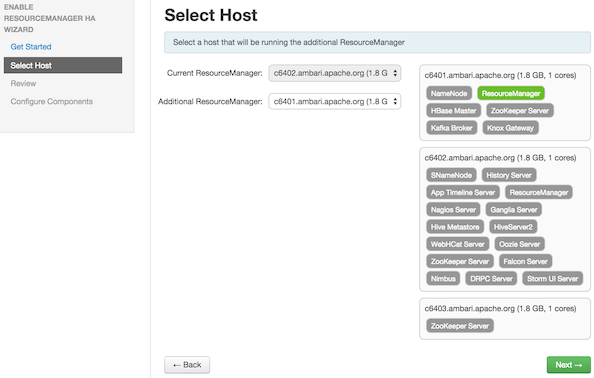

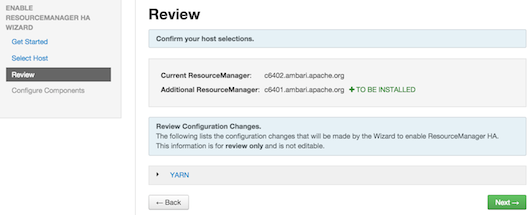

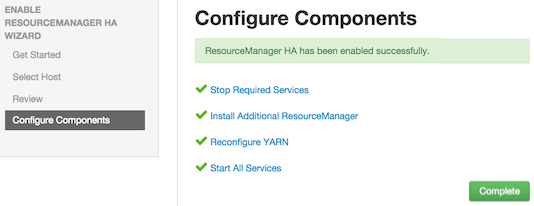

Enabling Resource Manager High Availability

To ensure that a ResourceManager in your cluster is always available if the primary

ResourceManager host fails, enable and set up ResourceManager High Availability on

your cluster using Ambari Web.

In Ambari Web, browse to Services > YARN > Summary, select Service Actions and then choose Enable ResourceManager HA. Follow the steps

in the Enable ResourceManager HA Wizard.

For more information about using the Enable ResourceManager HA Wizard to set up ResourceManager

High Availability, see Configuring ResourceManager High Availability.

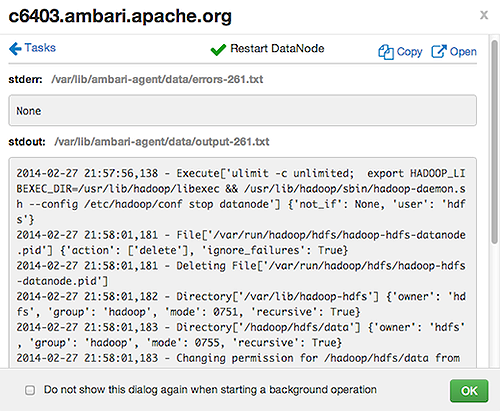

Monitoring Background Operations

Optionally, use Background Operations to monitor progress and completion of bulk operations such as rolling restarts.

Background Operations opens by default when you run a job that executes bulk operations.

-

Select the right-arrow for each operation to show restart operation progress on each host.

-

After restarts complete, Select the right-arrow, or a host name, to view log files and any error messages generated on the selected host.

-

Select links at the upper-right to copy or open text files containing log and error information.

Optionally, select the option to not show the bulk operations dialog.

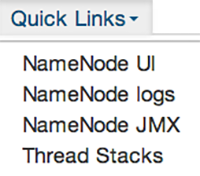

Using Quick Links

Select Quick Links options to access additional sources of information about a selected service. For

example, HDFS Quick Links options include the native NameNode GUI, NameNode logs,

the NameNode JMX output, and thread stacks for the HDFS service. Quick Links are not

available for every service.

Analyzing Service Metrics

Review visualizations in Metrics that chart common metrics for a selected service. Services > Summary displays metrics widgets for HDFS, HBase, Storm services. For more information about

using metrics widgets, see Scanning System Metrics. To see more metrics information, select the link located at the upper right of the

Metrics panel that opens the native Ganglia GUI.

Managing Configurations

Use Ambari Web to manage your HDP component configurations. Select any of the following topics:

Configuring Services

Select a service, then select Configs to view and update configuration properties for the selected service. For example,

select MapReduce2, then select Configs. Expand a config category to view configurable

service properties.

Updating Service Properties

-

Expand a configuration category.

-

Edit values for one or more properties that have the Override option.

Edited values, also called stale configs, show an Undo option.

-

Choose Save.

Restarting components

After editing and saving a service configuration, Restart indicates components that

you must restart.

Select the Components or Hosts links to view details about components or hosts requiring

a restart.

Then, choose an option appearing in Restart. For example, options to restart YARN

components include:

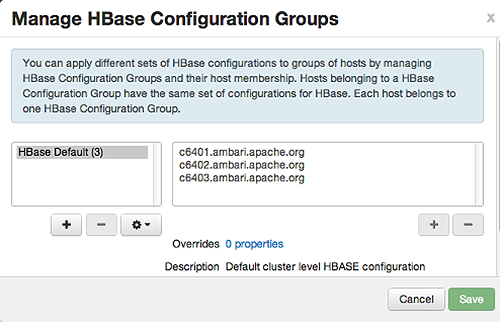

Using Host Config Groups

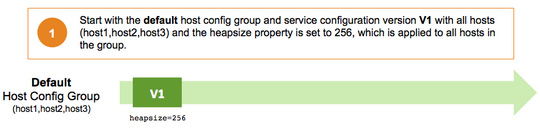

Ambari initially assigns all hosts in your cluster to one, default configuration group

for each service you install. For example, after deploying a three-node cluster with

default configuration settings, each host belongs to one configuration group that

has default configuration settings for the HDFS service. In Configs, select Manage Config Groups, to create new groups, re-assign hosts, and override default settings for host components

you assign to each group.

To create a Configuration Group:

-

Choose

Add New Configuration Group. -

Name and describe the group, then choose Save.

-

Select a Config Group, then choose Add Hosts to Config Group.

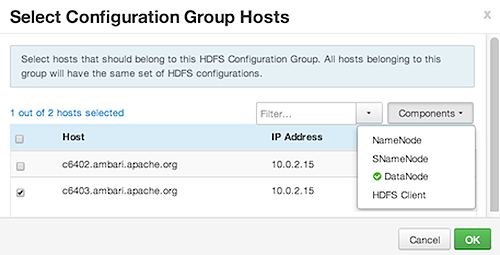

-

Select Components and choose from available Hosts to add hosts to the new group.

Select Configuration Group Hosts enforces host membership in each group, based on installed components for the selected service.

-

Choose OK.

-

In Manage Configuration Groups, choose Save.

To edit settings for a configuration group:

-

In Configs, choose a Group.

-

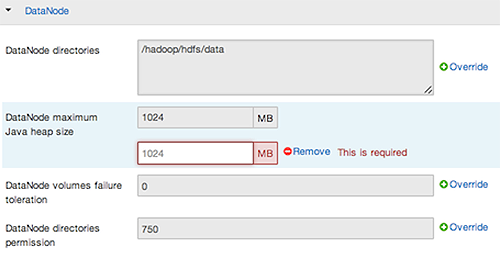

Select a Config Group, then expand components to expose settings that allow Override.

-

Provide a non-default value, then choose Override or Save.

Configuration groups enforce configuration properties that allow override, based on installed components for the selected service and group.

-

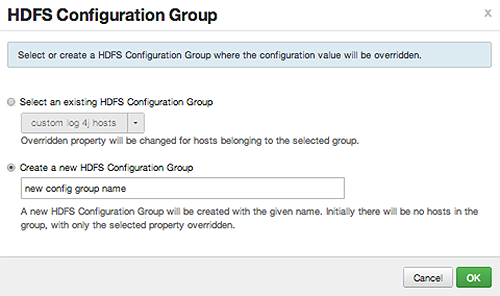

Override prompts you to choose one of the following options:

-

Select an existing configuration group (to which the property value override provided in step 3 will apply), or

-

Create a new configuration group (which will include default properties, plus the property override provided in step 3).

-

Then, choose

OK.

-

-

In Configs, choose Save.

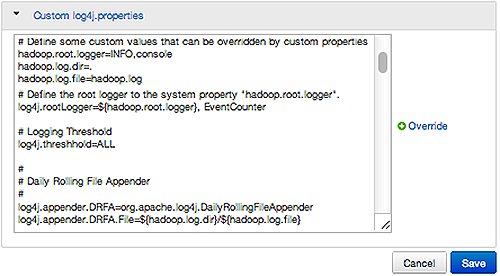

Customizing Log Settings

Ambari Web displays default logging properties in Service Configs > Custom log 4j Properties. Log 4j properties control logging activities for the selected service.

Restarting components in the service pushes the configuration properties displayed in Custom log 4j Properties to each host running components for that service. If you have customized logging properties that define how activities for each service are logged, you will see refresh indicators next to each service name after upgrading to Ambari 1.5.0 or higher. Make sure that logging properties displayed in Custom log 4j Properties include any customization. Optionally, you can create configuration groups that include custom logging properties. For more information about saving and overriding configuration settings, see Configuring Services.

Downloading Client Configs

For Services that include client components (for example Hadoop Client or Hive Client), you can download the client configuration files associated with that client from Ambari.

-

In Ambari Web, browse to the Service with the client for which you want the configurations.

-

Choose

Service Actions. -

Choose

Download Client Configs. You are prompted for a location to save the client configs bundle.

-

Save the bundle.

Service Configuration Versions

Ambari provides the ability to manage configurations associated with a Service. You can make changes to configurations, see a history of changes, compare + revert changes and push configuration changes to the cluster hosts.

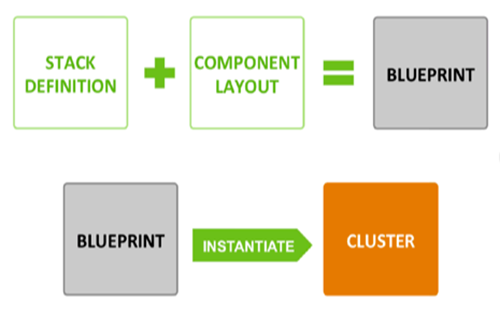

Basic Concepts

It’s important to understand how service configurations are organized and stored in

Ambari. Properties are grouped into Configuration Types (config types). A set of config

types makes up the set of configurations for a service.

For example, the HDFS Service includes the following config types: hdfs-site, core-site,

hdfs-log4j, hadoop-env, hadoop-policy. If you browse to Services > HDFS > Configs, the configuration properties for these config types are available for edit.

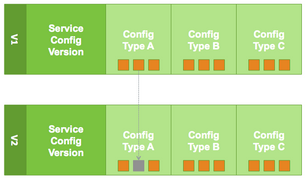

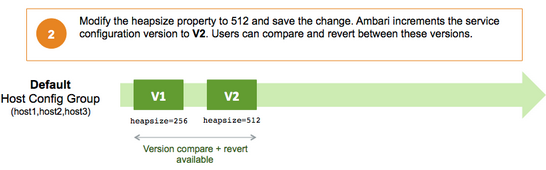

Versioning of configurations is performed at the service-level. Therefore, when you

modify a configuration property in a service, Ambari will create a Service Config

Version. The figure below shows V1 and V2 of a Service Configuration Version with

a change to a property in Config Type A. After making the property change to Config

Type A in V1, V2 is created.

Terminology

The following table lists configuration versioning terms and concepts that you should know.

|

Term |

Description |

|---|---|

|

Configuration Property |

Configuration property managed by Ambari, such as NameNode heapsize or replication factor. |

|

Configuration Type (Config Type) |

Group of configuration properties. For example: hdfs-site is a Config Type. |

|

Service Configurations |

Set of configuration types for a particular service. For example: hdfs-site and core-site Config Types are part of the HDFS Service Configuration. |

|

Change Notes |

Optional notes to save with a service configuration change. |

|

Service Config Version (SCV) |

Particular version of configurations for a specific service. Ambari saves a history of service configuration versions. |

|

Host Config Group (HCG) |

Set of configuration properties to apply to a specific set of hosts. Each service has a default Host Config Group, and custom config groups can be created on top of the default configuration group to target property overrides to one or more hosts in the cluster. See Managing Configuration Groups for more information. |

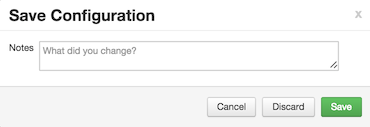

Saving a Change

-

Make the configuration property change.

-

Choose Save.

-

You are prompted to enter notes that describe the change.

-

Click Save to confirm your change. Cancel will not save but instead returns you to the configuration page to continuing editing.

To revert the changes you made and not save, choose Discard.

To return to the configuration page and continue editing without saving changes, choose Cancel.

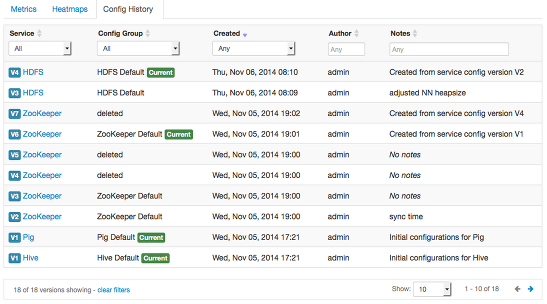

Viewing History

Service Config Version history is available from Ambari Web in two places: On the

Dashboard page under the Config History tab; and on each Service page under the Configs

tab.

The Dashboard > Config History tab shows a list of all versions across services with each version number and the

date and time the version was created. You can also see which user authored the change

with the notes entered during save. Using this table, you can filter, sort and search

across versions.

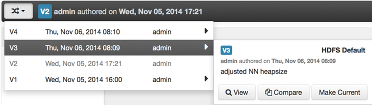

The most recent configuration changes are shown on the Service > Configs tab. Users can navigate the version scrollbar left-right to see earlier versions.

This provides a quick way to access the most recent changes to a service configuration.

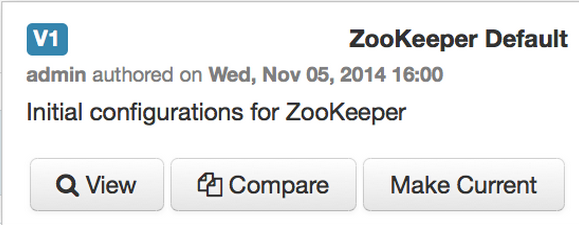

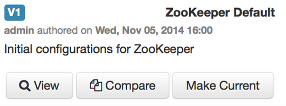

Click on any version in the scrollbar to view, and hover to display an option menu which allows you compare versions and perform a revert. Performing a revert makes any config version that you select the current version.

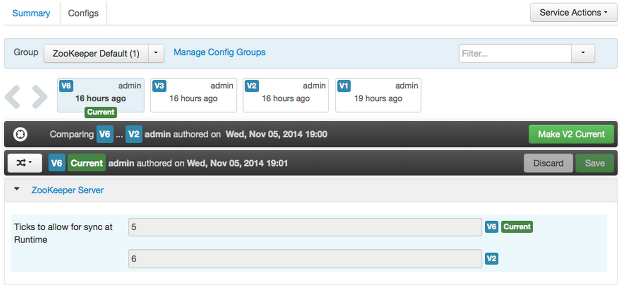

Comparing Versions

When navigating the version scroll area on the Services > Configs tab, you can hover over a version to display options to view, compare or revert.

- To perform a compare between two service configuration versions:

-

Navigate to a specific configuration version. For example “V6”.

-

Using the version scrollbar, find the version would you like to compare against “V6”. For example, if you want to compare V6 to V2, find V2 in the scrollbar.

-

Hover over the version to display the option menu. Click “Compare”.

-

Ambari displays a comparison of V6 to V2, with an option to revert to V2.

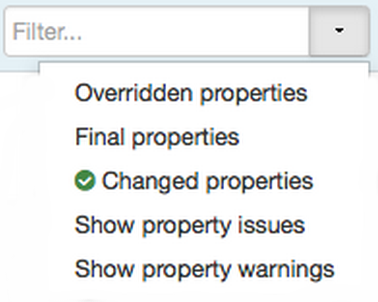

-

Ambari also filters the display by only “Changed properties”. This option is available under the Filter control.

Reverting a Change

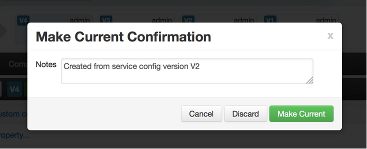

You can revert to an older service configuration version by using the “Make Current” feature. The “Make Current” will actually create a new service configuration version with the configuration properties from the version you are reverting -- it is effectively a “clone”. After initiating the Make Current operation, you are prompted to enter notes for the new version (i.e. the clone) and save. The notes text will include text about the version being cloned.

There are multiple methods to revert to a previous configuration version:

-

View a specific version and click the “Make V* Current” button.

-

Use the version navigation dropdown and click the “Make Current” button.

-

Hover on a version in the version scrollbar and click the “Make Current” button.

-

Perform a comparison and click the “Make V* Current” button.

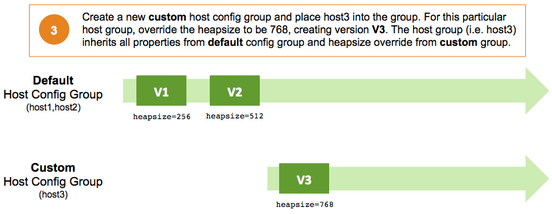

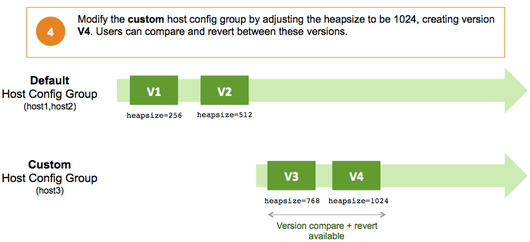

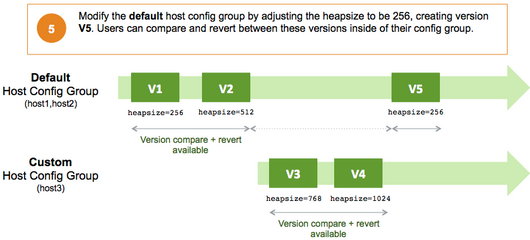

Versioning and Host Config Groups

Service configuration versions are scoped to a host config group. For example, changes made in the default group can be compared and reverted in that config group. Same with custom config groups.

The following example describes a flow where you have multiple host config groups and create service configuration versions in each config group.

Administering the Cluster

Use Admin options to view repositories and service accounts for your cluster and to enable

or disable Kerberos security for your cluster.

For more information about administering your cluster, see Administering Ambari.

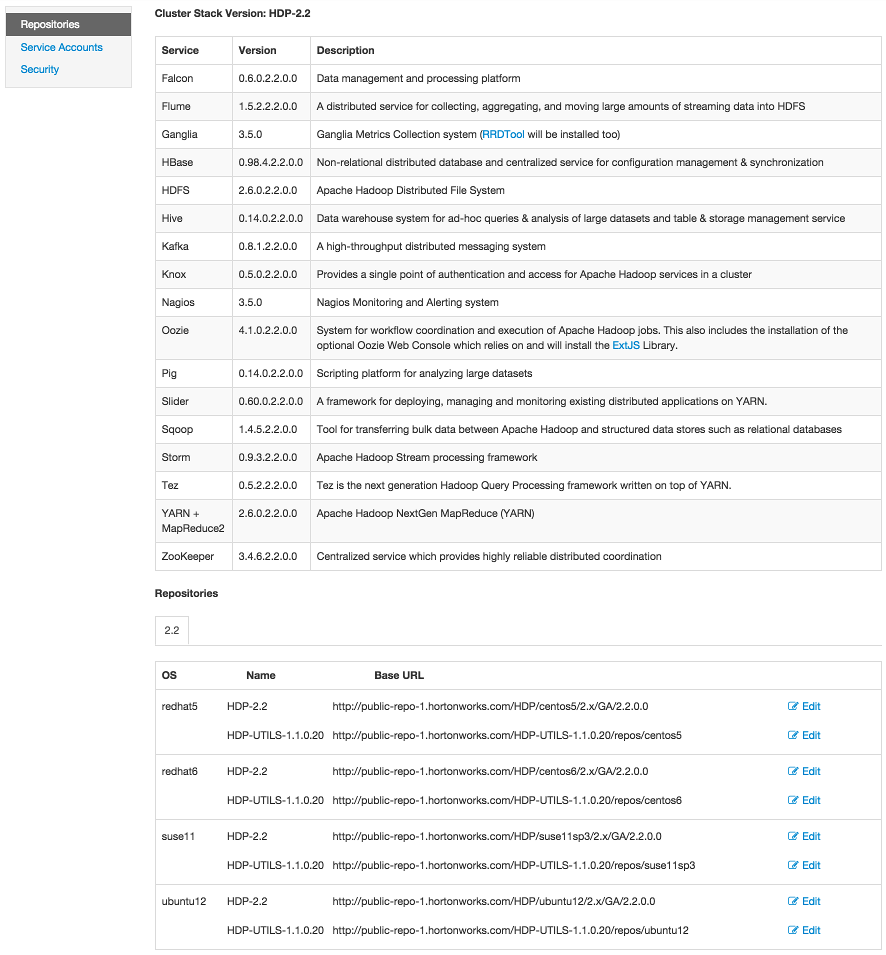

Viewing Cluster Stack Version and Repository URLs

To view the version of each Service version installed in your HDP cluster and the

base repository path, choose Admin > Repositories. Version and repository information for the HDP 2.2 Stack is shown in the following

example:

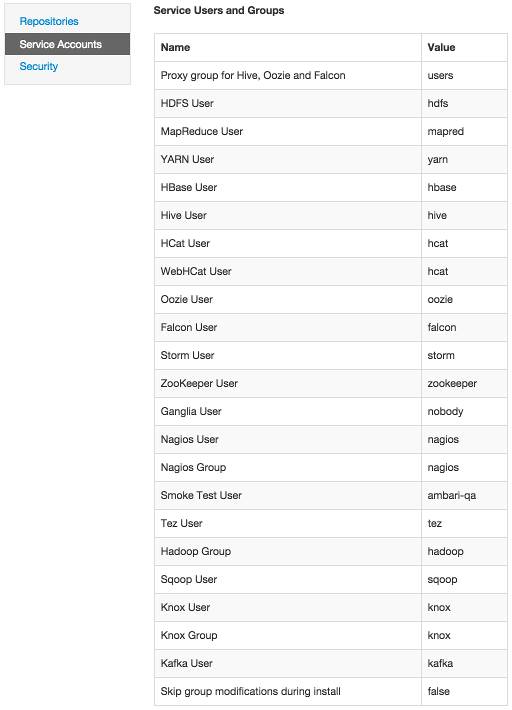

Viewing Service Users and Groups

To view Service Accounts defined in your HDP cluster, choose Admin > Serrvice Accounts. Default Service User and Group information for the HDP 2.2 Stack is shown in the

following example:

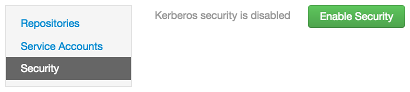

Enabling Kerberos Security

Ambari supports the Kerberos protocol which allows nodes in your cluster to prove their identities, or authenticate, in a secure manner. To enable Kerberos security you must:

-

Set up Kerberos for your cluster. For more information on setting up Kerberos, see Preparing Kerberos for Hadoop.

-

Choose

Enable Securityand follow the Enable Security Wizard.

Monitoring and Alerts

Nagios is an open source network monitoring system designed to monitor all aspects of your Hadoop cluster (such as hosts, services, and so forth) over the network. It can monitor many facets of your installation, ranging from operating system attributes like CPU and memory usage to the status of applications, files, and more.

Nagios is primarily used for the following kinds of tasks:

-

Getting instant information about your organization's Hadoop infrastructure

-

Detecting and repairing problems, and mitigating future issues, before they affect end-users and customers

-

Leveraging Nagios’ event monitoring capabilities to receive alerts for potential problem areas

-

Analyzing specific trends; for example: what is the CPU usage for a particular Hadoop service weekdays between 2 p.m. and 5 p.m?

For more information, see the Nagios website at http://www.nagios.org.

Basic Nagios Architecture

Using the open source monitoring system Nagios, Ambari gathers information on the status of both of the hosts and the services that run on them.

-

Host and System Information: Ambari monitors basic host and system information such as CPU utilization, disk I/O bandwidth and operations per second, average memory and swap space utilization, and average network latency.

-

Service Information: Ambari monitors the health and performance status of each service by presenting information generated by that service. Because services that run in master/slave configurations (HDFS, MapReduce, and HBase) are fault tolerant in regard to service slaves, master information is presented individually, whereas slave information is presented largely in aggregate.

-

Alert Information: Using Nagios with Hadoop-specific plug-ins and configurations, Ambari Web can issue alerts based on service states defined on the following basic levels:

-

OK

-

Warning

-

Critical

The thresholds for these alerts can be tuned using configuration files, and new alerts can be added. For more details on Nagios architecture, see the Nagios Overview at at http://www.nagios.org.

-

Installing Nagios

The Ambari Installation Wizard gives you the option of installing and configuring Nagios, including the out-of-the-box plug-ins for Hadoop-specific alerts. The Nagios server, Nagios plug-ins, and the web-based user interface are installed on the Nagios server host, as specified during the installation procedure.

Configuration File Locations

All Hadoop-specific configurations are added to Nagios through files prefixed with

“hadoop-“ located in the /etc/nagios/objects directory of the Nagios Server host. The default general Nagios configuration file,

nagios.cfg (in /etc/nagios), is set up to pick up the new Hadoop specific configurations from this directory.

Hadoop-specific plug-ins are stored in the Nagios plug-ins directory, /usr/lib64/nagios/plug-ins/.

By default, the Nagios server runs as a user named “nagios” which is in a group also

named “nagios”. The user and group can be customized during the Ambari Cluster Install

(Cluster Install Wizard > Customize Services > Misc). After you install Nagios, use Ambari Web to start and stop the Nagios server.

Configuring Nagios Alerts For Hadoop Services

For each alert, the out-of-the-box Hadoop Nagios configuration file defines default values for the following Nagios directives:

-

Warning threshold

The value that produces a warning alert.

-

Critical threshold

The value that produces a critical alert.

-

Check interval

The number of minutes between regularly scheduled checks on the host, if the check does not change the state.

-

Retry interval

The number of minutes between “retries”, when a service changes state, Nagios can confirm that state change by retrying the check multiple times. This retry interval can be different than the original check interval.

-

Maximum number of check attempts

The maximum number of retry attempts. Usually when the state of a service changes, this change is considered “soft” until multiple retries confirm it. Once the state change is confirmed, it is considered “hard”. Ambari Web displays hard states for all the Nagios Hadoop specific checks.

Nagios Alerts For Hadoop Services

This topic provides more information about Hadoop alerts provided by Ambari. All these alerts are displayed in Ambari Web and in the native Nagios web interface.

Ambari provides two types of alerts configured out-of-the-box:

-

Host-level Alerts

These alerts refer to a specific host and specific component running on that host. These alerts check a component and system-level metrics to determine health of the host.

-

Service-level Alerts

These alerts refer to a Hadoop Service and do not refer to a specific host. These alerts check one or more components of a service as well as system-level metrics to determine overall health of a Hadoop Service.

HDFS Service Alerts

These alerts are used to monitor the HDFS service.

Blocks health

This service-level alert is triggered if the number of corrupt or missing blocks exceeds

the configured critical threshold. This alert uses the check_hdfs_blocks plug-in.

Potential causes

-

Some DataNodes are down and the replicas that are missing blocks are only on those DataNodes.

-

The corrupt/missing blocks are from files with a replication factor of 1. New replicas cannot be created because the only replica of the block is missing.

Possible remedies

-

For critical data, use a replication factor of 3.

-

Bring up the failed DataNodes with missing or corrupt blocks.

-

Identify the files associated with the missing or corrupt blocks by running the Hadoop

fsckcommand. -

Delete the corrupt files and recover them from backup, if it exists.

NameNode process

This host-level alert is triggered if the NameNode process cannot be confirmed to

be up and listening on the network for the configured critical threshold, given in

seconds. It uses the Nagios check_tcp

Potential causes

-

The NameNode process is down on the HDFS master host.

-

The NameNode process is up and running but not listening on the correct network port. The default port is 8201.

-

The Nagios server cannot connect to the HDFS master through the network.

Possible remedies

-

Check for any errors in the logs, located at

/var/log/hadoop/hdfs/. Then restart the NameNode host/process using theHMC Manage Servicestab. -

Run the

netstat-tuplpncommand to check if the NameNode process is bound to the correct network port. -

Use

pingto check the network connection between the Nagios server and the NameNode.

DataNode space

This host-level alert is triggered if storage capacity if full on the DataNode (90%

critical). It uses the check_datanode_storage.php plug-in which checks the DataNode JMX Servlet for the Capacity and Remaining properties.

Potential causes

-

Cluster storage is full.

-

If cluster storage is not full, DataNode is full.

Possible remedies

-

If the cluster has available storage, use Balancer to distribute the data to relatively less-used datanodes.

-

If the cluster is full, delete unnecessary data or add additional storage by adding either more DataNodes or more or larger disks to the DataNodes. After adding more storage run Balancer.

DataNode process

This host-level alert is triggered if the individual DataNode processes cannot be

established to be up and listening on the network for the configured critical threshold,

given in seconds. It uses the Nagios check_tcp plugin.

Potential causes

-

DataNode process is down or not responding.

-

DataNode is not down, but is not listening to the correct network port/address.

-

Nagios server cannot connect to the DataNodes.

Possible remedies

-

Check for dead DataNodes in Ambari Web.

-

Check for any errors in the DataNode logs, located at

/var/log/hadoop/hdfs. Then, restart the DataNode, if necessary. -

Run the

netstat-tuplpncommand to check if the DataNode process is bound to the correct network port. -

Use

pingto check the network connection between the Nagios server and the DataNode.

NameNode host CPU utilization

This host-level alert is triggered if CPU utilization of the NameNode exceeds certain

thresholds (200% warning, 250% critical). It uses the check_cpu.php plug-in which checks the NameNode JMX Servlet for the SystemCPULoad property. This information is only available if you are running JDK 1.7.

Potential causes

-

Unusually high CPU utilization: Can be caused by a very unusual job/query workload; but this is generally due to an issue in the daemon.

Possible remedies

-

Use the

topcommand to determine which processes are consuming excess CPU. -

Reset the offending process.

NameNode edit logs directory status

This host-level alert is triggered if the NameNode cannot write to one of its configured edit log directories.

Potential causes

-

At least one of the multiple edit log directories is mounted over NFS and has become unreachable.

-

The permissions on at least one of the multiple edit log directories is set to Read-only.

Possible remedies

-

Check permissions on all edit log directories.

-

Use the

dfs.name.dirparameter in thehdfs-site.xmlfile on the NameNode to identify the locations of all the edit log directories for the NameNode. Check whether the NameNode can reach all those locations.

NameNode Web UI

This host-level alert is triggered if the NameNode Web UI is unreachable.

Potential causes

-

The NameNode Web UI is unreachable from the Nagios Server.

-

The NameNode process is not running.

Possible remedies

-

Check whether the NameNode process is running.

-

Check whether the Nagios Server can ping the NameNode server.

-

Using a browser, check whether the Nagios Server can reach the NameNode Web UI.

Percent DataNodes with space available

This service-level alert is triggered if the storage if full on a certain percentage

of DataNodes (10% warn, 30% critical). It uses the check_aggregate.php plug-in which aggregates the result from the check_datanode_storage.php plug-in.

Potential causes

-

Cluster storage is full.

-

If cluster storage is not full, DataNode is full.

Possible remedies

-

If cluster still has storage, use Balancer to distribute the data to relatively less-used DataNodes.

-

If the cluster is full, delete unnecessary data or add additional storage by adding either more DataNodes or more or larger disks to the DataNodes. After adding more storage run Balancer.

Percent DataNodes live

This alert is triggered if the number of down DataNodes in the cluster is greater

than the configured critical threshold. It uses the check_aggregate plug-in to aggregate the results of Data node process checks.

Potential causes

-

DataNodes are down.

-

DataNodes are not down but are not listening to the correct network port/address.

-

Nagios server cannot connect to one or more DataNodes.

Possible remedies

-

Check for dead DataNodes in Ambari Web.

-

Check for any errors in the DataNode logs, located at

/var/log/hadoop/hdfs. Then, restart the DataNode hosts/processes. -

Run the

netstat-tuplpncommand to check if the DataNode process is bound to the correct network port. -

Use

pingto check the network connection between the Nagios server and the DataNodes.

NameNode RPC latency

This host-level alert is triggered if the NameNode operations RPC latency exceeds

the configured critical threshold. Typically an increase in the RPC processing time

increases the RPC queue length, causing the average queue wait time to increase for

NameNode operations. It uses the Nagios check_rpcq_latency plug-in.

Potential causes

-

A job or an application is performing too many NameNode operations.

Possible remedies

-

Review the job or the application for potential bugs causing it to perform too many NameNode operations.

HDFS capacity utilization

This service-level alert is triggered if the HDFS capacity utilization exceeds the

configured critical threshold (80% warn, 90% critical). It uses the check_hdfs_capacity.php plug-in which checks the NameNode JMX Servlet for the CapacityUsed and CapacityRemaining properties.

Potential causes

-

Cluster storage is full.

Possible remedies

-

Delete unnecessary data.

-

Archive unused data.

-

Add more DataNodes.

-

Add more or larger disks to the DataNodes.

-

After adding more storage, run Balancer.

NameNode HA Alerts (Hadoop 2 only)

These alerts are available only when you are using Hadoop 2.x and you have enabled NameNode HA.

JournalNode process

This host-level alert is triggered if the individual JournalNode process cannot be

established to be up and listening on the network for the configured critical threshold,

given in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

The JournalNode process is down or not responding.

-

The JournalNode is not down, but is not listening to the correct network port/address.

-

The Nagios server cannot connect to the JournalNode.

Possible remedies

-

Check if the JournalNode process is dead.

-

Use

pingto check the network connection between the Nagios server and the JournalNode host.

NameNode HA Healthy process

This service-level alert is triggered if either the Active NameNode or Standby NameNode are not running.

Potential causes

-

The Active, Standby or both NameNode processes are down.

-

The Nagios Server cannot connect to one or both NameNode hosts.

Possible remedies

-

On each host running NameNode, check for any errors in the logs, located at

/var/log/hadoop/hdfs/. Then, restart the NameNode host/process using Ambari Web. -

On each host running NameNode, run the

netstat-tuplpncommand to check if the NameNode process is bound to the correct network port. -

Use

pingto check the network connection between the Nagios server and the hosts running NameNode.

YARN Alerts (Hadoop 2 only)

These alerts are used to monitor YARN.

ResourceManager process

This host-level alert is triggered if the individual ResourceManager process cannot

be established to be up and listening on the network for the configured critical threshold,

given in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

The ResourceManager process is down or not responding.

-

The ResourceManager is not down, but is not listening to the correct network port/address.

-

Nagios Server cannot connect to the ResourceManager.

Possible remedies

-

Check for a dead ResourceManager.

-

Check for any errors in the ResourceManager logs, located at

/var/log/hadoop/yarn. Then, restart the ResourceManager, if necessary. -

Use

pingto check the network connection between the Nagios Server and the ResourceManager host.

Percent NodeManagers live

This alert is triggered if the number of down NodeManagers in the cluster is greater

than the configured critical threshold. It uses the check_aggregate plug-in to aggregate the results of DataNode process alert checks.

Potential causes

-

NodeManagers are down.

-

NodeManagers are not down but are not listening to the correct network port/address.

-

Nagios server cannot connect to one or more NodeManagers.

Possible remedies

-

Check for dead NodeManagers.

-

Check for any errors in the NodeManager logs, located at

/var/log/hadoop/yarn. Then, restart the NodeManagers hosts/processes, as necessary. -

Run the

netstat-tuplpncommand to check if the NodeManager process is bound to the correct network port. -

Use

pingto check the network connection between the Nagios Server and the NodeManagers host.

ResourceManager Web UI

This host-level alert is triggered if the ResourceManager Web UI is unreachable.

Potential causes

-

The ResourceManager Web UI is unreachable from the Nagios Server.

-

The ResourceManager process is not running.

Possible remedies

-

Check if the ResourceManager process is running.

-

Check whether the Nagios Server can ping the ResourceManager server.

-

Using a browser, check whether the Nagios Server can reach the ResourceManager Web UI.

ResourceManager RPC latency

This host-level alert is triggered if the ResourceManager operations RPC latency exceeds

the configured critical threshold. Typically an increase in the RPC processing time

increases the RPC queue length, causing the average queue wait time to increase for

ResourceManager operations. It uses the Nagios check_rpcq_latency plug-in.

Potential causes

-

A job or an application is performing too many ResourceManager operations.

Possible remedies

-

Review the job or the application for potential bugs causing it to perform too many ResourceManager operations.

ResourceManager CPU utilization

This host-level alert is triggered if CPU utilization of the ResourceManager exceeds

certain thresholds (200% warning, 250% critical). It uses the check_cpu.php plug-in which checks the ResourceManager JMX Servlet for the SystemCPULoad property. This information is only available if you are running JDK 1.7.

Potential causes

-

Unusually high CPU utilization: Can be caused by a very unusual job/query workload, but this is generally the sign of an issue in the daemon.

Possible remedies

-

Use the

topcommand to determine which processes are consuming excess CPU. -

Reset the offending process.

NodeManager process

This host-level alert is triggered if the NodeManager process cannot be established

to be up and listening on the network for the configured critical threshold, given

in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

NodeManager process is down or not responding.

-

NodeManager is not down but is not listening to the correct network port/address.

-

Nagios Server cannot connect to the NodeManager

Possible remedies

-

Check if the NodeManager is running.

-

Check for any errors in the NodeManager logs (

/var/log/hadoop/yarn) and restart the NodeManager, if necessary. -

Use

pingto check the network connection between the Nagios Server and the NodeManager host.

NodeManager health

This host-level alert checks the node health property available from the NodeManager component.

Potential causes

-

Node Health Check script reports issues or is not configured.

Possible remedies

-

Check in the NodeManager logs (

/var/log/hadoop/yarn) for health check errors and restart the NodeManager, and restart if necessary. -

Check in the ResourceManager UI logs (

/var/log/hadoop/yarn) for health check errors.

MapReduce2 Alerts (Hadoop 2 only)

These alerts are used to monitor MR2.

HistoryServer Web UI

This host-level alert is triggered if the HistoryServer Web UI is unreachable.

Potential causes

-

The HistoryServer Web UI is unreachable from the Nagios Server.

-

The HistoryServer process is not running.

Possible remedies

-

Check if the HistoryServer process is running.

-

Check whether the Nagios Server can ping the HistoryServer server.

-

Using a browser, check whether the Nagios Server can reach the HistoryServer Web UI.

HistoryServer RPC latency

This host-level alert is triggered if the HistoryServer operations RPC latency exceeds

the configured critical threshold. Typically an increase in the RPC processing time

increases the RPC queue length, causing the average queue wait time to increase for

NameNode operations. It uses the Nagios check_rpcq_latency plug-in.

Potential causes

-

A job or an application is performing too many HistoryServer operations.

Possible remedies

-

Review the job or the application for potential bugs causing it to perform too many HistoryServer operations.

HistoryServer CPU utilization

This host-level alert is triggered if the percent of CPU utilization on the HistoryServer

exceeds the configured critical threshold. This alert uses the Nagios check_snmp_load plug-in.

Potential causes

-

Unusually high CPU utilization: Can be caused by a very unusual job/query workload, but this is generally the sign of an issue in the daemon.

-

A down SNMP daemon on the HistoryServer node, producing an unknown status.

Possible remedies

-

Use the

topcommand to determine which processes are consuming excess CPU. -

Reset the offending process.

-

Check the status of the SNMP daemon.

HistoryServer process

This host-level alert is triggered if the HistoryServer process cannot be established

to be up and listening on the network for the configured critical threshold, given

in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

HistoryServer process is down or not responding.

-

HistoryServer is not down but is not listening to the correct network port/address.

-

Nagios Server cannot connect to the HistoryServer.

Possible remedies

-

Check the HistoryServer is running.

-

Check for any errors in the HistoryServer logs, located at

/var/log/hadoop/mapred.Then, restart the HistoryServer, if necessary. -

Use

pingto check the network connection between the Nagios Server and the HistoryServer host.

MapReduce Service Alerts (Hadoop 1 only)

These alerts are used to monitor the MapReduce service.

JobTracker RPC latency alert

This host-level alert is triggered if the JobTracker operations RPC latency exceeds

the configured critical threshold. Typically an increase in the RPC processing time

increases the RPC queue length, causing the average queue wait time to increase for

JobTracker operations. This alert uses the Nagios check_rpcq_latency plug-in.

Potential causes

-

A job or an application is performing too many JobTracker operations.

Possible remedies

-

Review the job or the application for potential bugs causing it to perform too many JobTracker operations.

JobTracker process

This host-level alert is triggered if the individual JobTracker process cannot be

confirmed to be up and listening on the network for the configured critical threshold,

given in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

JobTracker process is down or not responding.

-

JobTracker is not down but is not listening to the correct network port/address.

-

The Nagios server cannot connect to the JobTracker

Possible remedies

-

Check if the JobTracker process is running.

-

Check for any errors in the JobTracker logs, located at

/var/log/hadoop/mapred. Then, restart the JobTracker, if necessary -

Use

pingto check the network connection between the Nagios Server and the JobTracker host.

JobTracker Web UI

This Host-level alert is triggered if the JobTracker Web UI is unreachable.

Potential causes

-

The JobTracker Web UI is unreachable from the Nagios Server.

-

The JobTracker process is not running.

Possible remedies

-

Check if the JobTracker process is running.

-

Check whether the Nagios Server can ping the JobTracker server.

-

Using a browser, check whether the Nagios Server can reach the JobTracker Web UI.

JobTracker CPU utilization

This host-level alert is triggered if CPU utilization of the JobTracker exceeds certain

thresholds (200% warning, 250% critical). It uses the check_cpu.php plug-in which checks the JobTracker JMX Servlet for the SystemCPULoad property. This information is only available if you are running JDK 1.7.

Potential causes

-

Unusually high CPU utilization: Can be caused by a very unusual job/query workload, but this is generally the sign of an issue in the daemon.

Possible remedies

-

Use the

topcommand to determine which processes are consuming excess CPU. -

Reset the offending processor.

HistoryServer Web UI

This host-level alert is triggered if the HistoryServer Web UI is unreachable.

Potential causes

-

The HistoryServer Web UI is unreachable from the Nagios Server.

-

The HistoryServer process is not running.

-

Using a browser, check whether the Nagios Server can reach the HistoryServer Web UI.

Possible remedies

-

Check the HistoryServer process is running.

-

Check whether the Nagios Server can ping the HistoryServer server.

-

Check the status of the SNMP daemon.

HistoryServer process

This host-level alert is triggered if the HistoryServer process cannot be established

to be up and listening on the network for the configured critical threshold, given

in seconds. It uses the Nagios check_tcp plug-in.

Potential causes

-

The HistoryServer process is down or not responding.

-

The HistoryServer is not down but is not listening to the correct network port/address.

-

The Nagios Server cannot connect to the HistoryServer.

Possible remedies

-

Check for any errors in the HistoryServer logs located at

/var/log/hadoop/mapred. Then, restart the HistoryServer, if necessary. -

Use

pingto check the network connection between the Nagios Server and the HistoryServer host.

HBase Service Alerts

These alerts are used to monitor the HBase service.

Percent RegionServers live

This service-level alert is triggered if the configured percentage of Region Server

processes cannot be determined to be up and listening on the network for the configured

critical threshold. The default setting is 10% to produce a WARN alert and 30% to

produce a CRITICAL alert. It uses the check_aggregate plug-in to aggregate the results of RegionServer process down checks.

Potential causes

-

Misconfiguration or less-than-ideal configuration caused the RegionServers to crash.

-

Cascading failures brought on by some workload caused the RegionServers to crash.

-

The RegionServers shut themselves down because there were problems in the dependent services, ZooKeeper, or HDFS.

-

GC paused the RegionServer for too long and the RegionServers lost contact with ZooKeeper.

Possible remedies

-

Check the dependent services to make sure they are operating correctly.

-

Look at the RegionServer log files, usually located at

/var/log/hbase/*.logfor further information. -

Look at the configuration files located at

/etc/hbase/conf. -

If the failure was associated with a particular workload, try to better understand the workload.

-

Restart the RegionServers.

HBase Master process

This alert is triggered if the HBase master processes cannot be confirmed to be up

and listening on the network for the configured critical threshold, given in seconds.

It uses the Nagios check_tcp plug-in.

Potential causes

-

The HBase master process is down.

-

The HBase master has shut itself down because there were problems in the dependent services, ZooKeeper, or HDFS.

-

The Nagios server cannot connect to the HBase master through the network.

Possible remedies

-

Check the dependent services.

-

Look at the master log files, usually located at

/var/log/hbase/*.log,for further information. -

Look at the configuration files in

/etc/hbase/conf.Use

pingto check the network connection between the Nagios server and the HBase master. -

Restart the master.

HBase Master Web UI

This host-level alert is triggered if the HBase Master Web UI is unreachable.

Potential causes

-