Create a NiFi Flow to Stream Events to CCP

You can use NiFi to create a data flow that captures events from Squid and pushes them into Cloudera Cybersecurity Platform (CCP). In this task you use NiFi to create two processors: a TailFile processor that ingests Squid events and a PutKafka processor that streams the information to Metron. When you create and define the PutKafka processor, Kafka creates a topic for the Squid data source. You then connect the two processors, generate some data in the Squid log, and watch the data flow from one processor to the other.

- Drag the first icon on the toolbar (the processor icon) to your workspace.

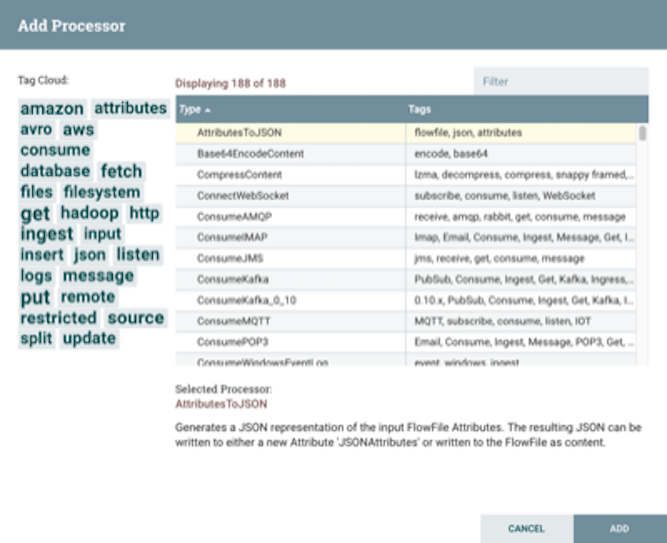

NiFi displays the Add Processor dialog box.


- Select the TailFile type of processor and click Add.

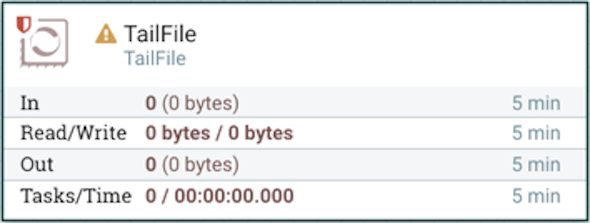

NiFi displays a new TailFile processor.

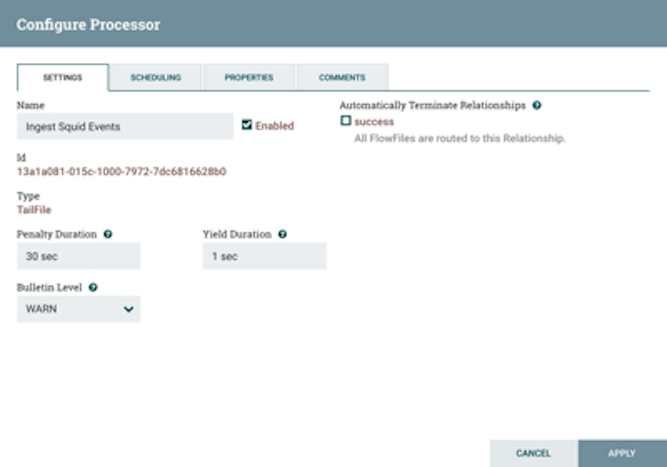

- Right-click the processor icon and select Configure to display the Configure Processor dialog box.

-

In the Settings tab, change the name to

Ingest Squid Events.

-

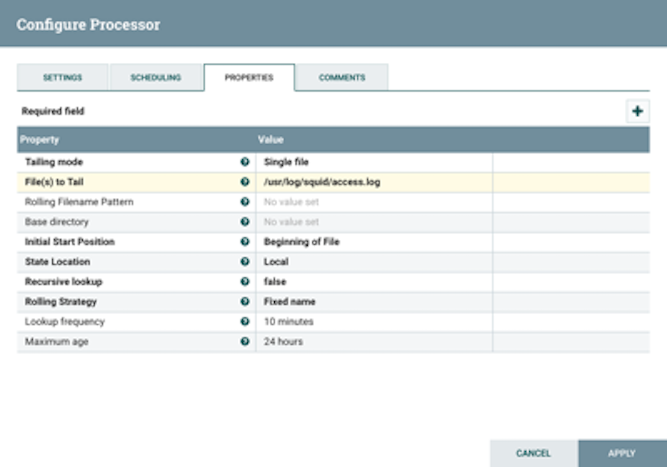

In the Properties tab, enter the path to the squid

access.log file in the Value

column for the File(s) to Tail property.


- Click Apply to save your changes and dismiss the Configure Processor dialog box.

- Add another processor by dragging the Processor icon to the main window.

- Select the PutKafka type of processor and click Add.

- Right-click the processor and select Configure.

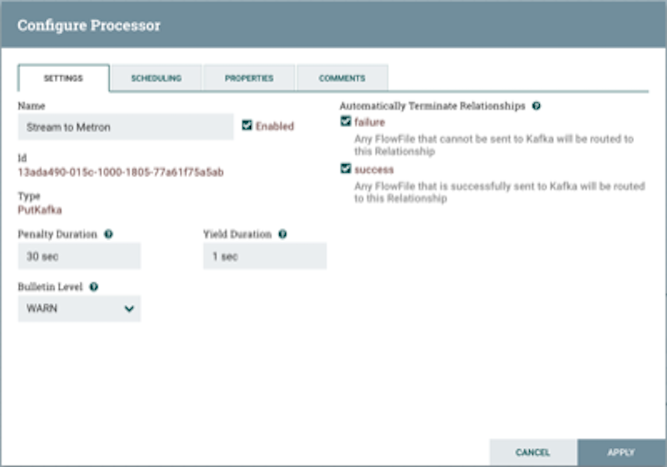

- In the Settings tab, change the name to Stream to Metron and then select the Automatically Terminate Relationships check boxes for Failure and Success.

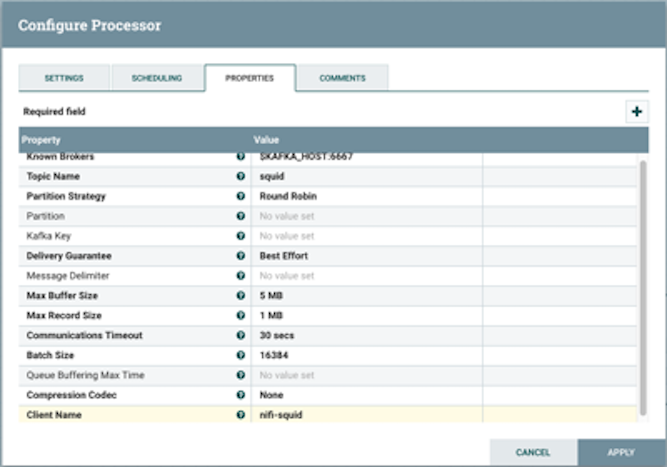

- In the Properties tab, set the following three properties:

  - Known Brokers: $KAFKA_HOST:6667

  - Topic Name: squid

  - Client Name: nifi-squid

- Click Apply to save your changes and dismiss the Configure Processor dialog box.

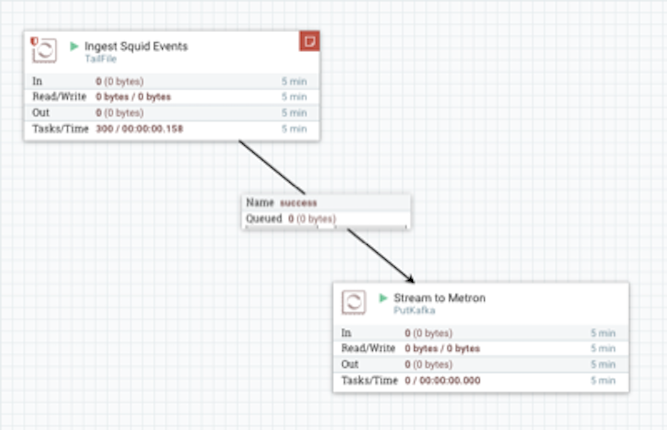

- Create a connection by dragging the arrow from the Ingest Squid Events processor to the Stream to Metron processor.

NiFi displays a Create Connection dialog box.

- Click Add to accept the default settings for the connection.

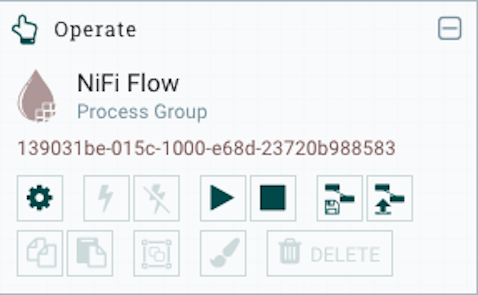

- Press the Shift key and draw a box around both processors to select the entire flow.

- Click the Start button in the Operate panel.

- Generate some data using the new data processor client.

- Use ssh to access the host for the new data source.

- With Squid started, look at the different log files that get created:

      sudo su -
      cd /var/log/squid
      ls

  The file you want for Squid is the access.log, but another data source might use a different name.

- Generate entries for the log so you can see the format of the entries.

      squidclient -h 127.0.0.1 "http://www.atmape.ru"

  You will see the following data in the access.log file.

      1481143984.330 1111 127.0.0.1 TCP_MISS/301 714 GET http://www.atmape.ru/ - DIRECT/212.109.217.71 text/html

- Using the Squid log entries, you can determine that the format of the log entries is:

      timestamp | time elapsed | remotehost | code/status | bytes | method | URL | rfc931 | peerstatus/peerhost | type

- The processors in NiFi should now show metrics for the data being pushed into Metron.
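To make the field layout listed above concrete, the following sketch splits the sample access.log entry into named fields with awk. It assumes the whitespace-separated entry format shown in this section; the function name `parse_squid_line` and the output labels are illustrative choices, not part of Squid or CCP.

```shell
#!/bin/sh
# Hypothetical helper: print each field of one Squid access.log entry
# as a label=value pair. Assumes ten whitespace-separated fields.
parse_squid_line() {
    # $1: a single access.log entry
    printf '%s\n' "$1" | awk '{
        print "timestamp="   $1
        print "elapsed="     $2
        print "remotehost="  $3
        print "code_status=" $4
        print "bytes="       $5
        print "method="      $6
        print "url="         $7
        print "rfc931="      $8
        print "peer="        $9
        print "type="        $10
    }'
}

# Example: the entry generated earlier in this section.
parse_squid_line '1481143984.330 1111 127.0.0.1 TCP_MISS/301 714 GET http://www.atmape.ru/ - DIRECT/212.109.217.71 text/html'
```

Running the function against a few lines of your own access.log is a quick way to confirm that the entries match this layout before you rely on the parser.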

- Look at the Storm UI for the parser topology and you should see tuples coming in.

- Navigate to Ambari UI.

- From the Quick Links pull-down menu in the upper center of the window, select Storm UI.
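If no tuples show up in the Storm UI, one possible cross-check is to read a few messages directly from the squid topic with the Kafka console consumer. The sketch below is an assumption-laden example: the script path is a guess at a typical HDP-style install location, `KAFKA_CONSUMER` and `peek_squid_topic` are hypothetical names, and older Kafka releases may expect `--zookeeper` instead of `--bootstrap-server`.

```shell
#!/bin/sh
# Assumed location of the Kafka console consumer; adjust for your cluster.
KAFKA_CONSUMER="${KAFKA_CONSUMER:-/usr/hdp/current/kafka-broker/bin/kafka-console-consumer.sh}"

# Hypothetical helper: print up to five messages from the squid topic, then exit.
peek_squid_topic() {
    # $1: broker address, for example "$KAFKA_HOST:6667"
    "$KAFKA_CONSUMER" --bootstrap-server "$1" --topic squid \
        --from-beginning --max-messages 5
}
```

For example, `peek_squid_topic "$KAFKA_HOST:6667"` uses the same broker address that was entered for the Known Brokers property. If raw Squid log lines are printed, the NiFi flow is delivering events to Kafka and the problem lies further downstream.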

- Before leaving this section, run the following commands to fill the access.log with data. You'll need this data when you enrich the telemetry data.

      squidclient -h 127.0.0.1 "http://www.aliexpress.com/af/shoes.html?ltype=wholesale&d=y&origin=n&isViewCP=y&catId=0&initiative_id=SB_20160622082445&SearchText=shoes"
      squidclient -h 127.0.0.1 "http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878"
      squidclient -h 127.0.0.1 "http://www.pravda.ru/science/"
      squidclient -h 127.0.0.1 "http://www.brightsideofthesun.com/2016/6/25/12027078/anatomy-of-a-deal-phoenix-suns-pick-bender-chriss"
      squidclient -h 127.0.0.1 "https://www.microsoftstore.com/store/msusa/en_US/pdp/Microsoft-Band-2-Charging-Stand/productID.329506400"
      squidclient -h 127.0.0.1 "https://tfl.gov.uk/plan-a-journey/"
      squidclient -h 127.0.0.1 "https://www.facebook.com/Africa-Bike-Week-1550200608567001/"
      squidclient -h 127.0.0.1 "http://www.ebay.com/itm/02-Infiniti-QX4-Rear-spoiler-Air-deflector-Nissan-Pathfinder-/172240020293?fits=Make%3AInfiniti%7CModel%3AQX4&hash=item281a4e2345:g:iMkAAOSwoBtW4Iwx&vxp=mtr"
      squidclient -h 127.0.0.1 "http://www.recruit.jp/corporate/english/company/index.html"
      squidclient -h 127.0.0.1 "http://www.lada.ru/en/cars/4x4/3dv/about.html"
      squidclient -h 127.0.0.1 "http://www.help.1and1.co.uk/domains-c40986/transfer-domains-c79878"
      squidclient -h 127.0.0.1 "http://www.aliexpress.com/af/shoes.html?ltype=wholesale&d=y&origin=n&isViewCP=y&catId=0&initiative_id=SB_20160622082445&SearchText=shoes"
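If you need to repeat this step, for example after clearing the log, the same requests can be driven from a file of URLs instead of being pasted one by one. The following is a minimal sketch under stated assumptions: `replay_urls`, `SQUIDCLIENT`, and `SQUID_HOST` are hypothetical names introduced here, and the URL file is something you create yourself with one URL per line.

```shell
#!/bin/sh
# Assumed defaults; both can be overridden in the environment.
SQUIDCLIENT="${SQUIDCLIENT:-squidclient}"
SQUID_HOST="${SQUID_HOST:-127.0.0.1}"

# Hypothetical helper: request every URL in a file through Squid so that
# each request adds an entry to access.log.
replay_urls() {
    # $1: path to a text file with one URL per line
    count=0
    while IFS= read -r url; do
        # Skip blank lines and lines that start with '#'
        case "$url" in
            ''|'#'*) continue ;;
        esac
        # Discard the response body; only the log entry matters here.
        "$SQUIDCLIENT" -h "$SQUID_HOST" "$url" > /dev/null < /dev/null
        count=$((count + 1))
    done < "$1"
    echo "requested $count URLs"
}
```

With the URLs from this step saved in a file such as `urls.txt`, calling `replay_urls urls.txt` issues the same requests in order. Reading the URLs from a file also avoids shell quoting problems with the `&` characters in the query strings.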

For more information about creating a NiFi data flow, see the NiFi documentation.