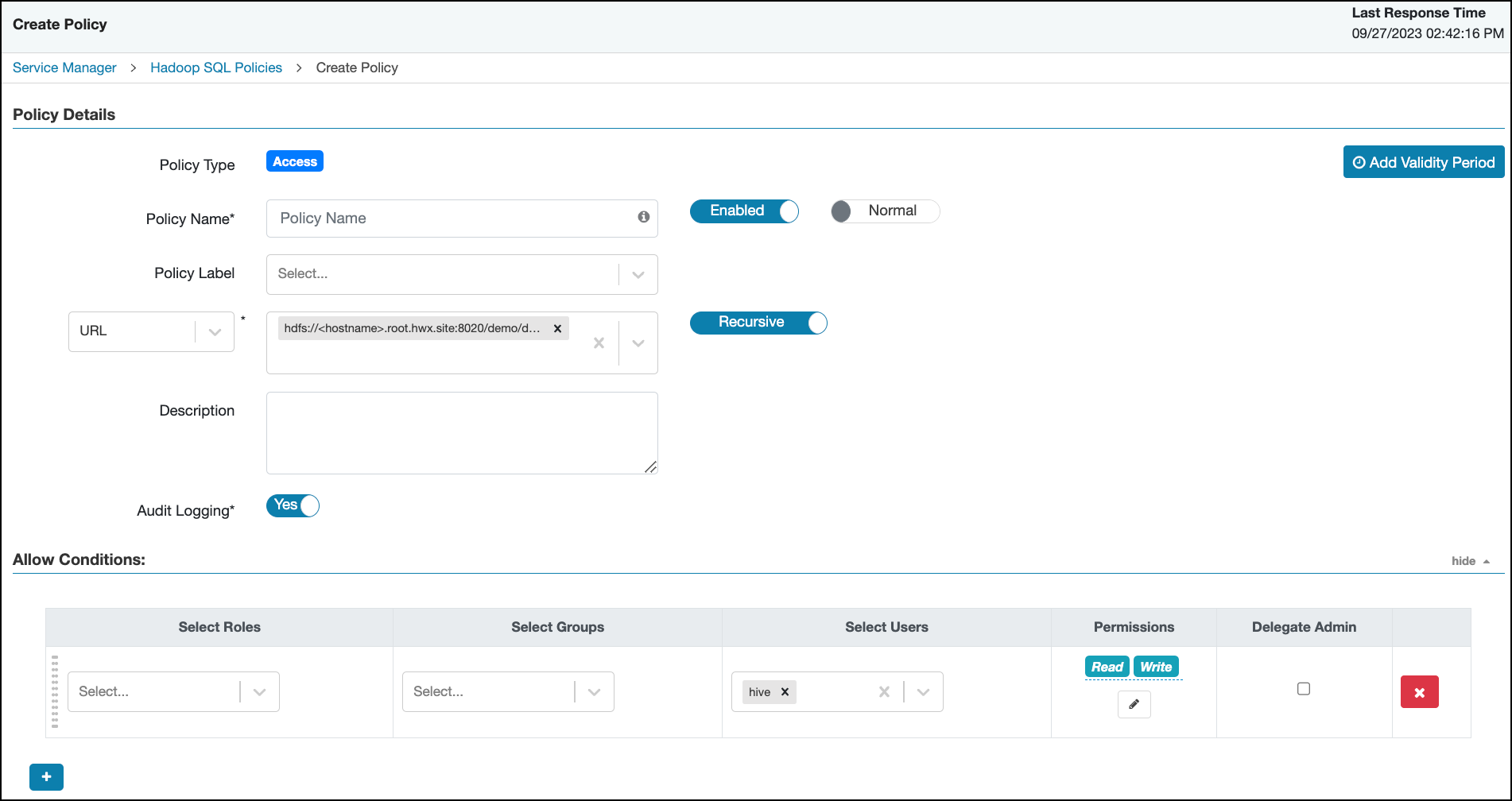

Create a Hive authorizer URL policy

You can create a Hive Authorizer URL policy in Ranger that maintains Read and Write permissions for a location or folder.

Hive supports several commands that include URLs which refer to a current or future data location. Access to such locations must be authorized for the end user. Currently, you can create a Ranger HDFS policy that grants "All" permissions for a location, recursively. If no such policy exists, HDFS authorization "falls back" to the current ACL that defines access to the location or folder. This approach requires maintaining many policies or ACLs at the storage level. Instead, you can create a Hive Authorizer URL policy in Ranger that maintains Read and Write permissions for a location or folder. By default, the value of the ranger.plugin.hive.urlauth.filesystem.schemes parameter is “hdfs:,file:,wasb:,adl:”. If you remove “hdfs:” from this value, access requests are authorized against the Hive URL policy, and neither the Ranger HDFS plugin nor the Hadoop ACL is checked.
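The fallback behavior described above can be pictured with a small model. The following Python sketch is hypothetical and is not Ranger's implementation: the class name, the in-memory policy and ACL tables, and the permission names are illustrative assumptions. It grants access when a recursive Ranger HDFS "All" policy covers the path, and otherwise consults the ACL for that location.

```python
from dataclasses import dataclass, field
from posixpath import normpath


@dataclass
class HypotheticalHdfsAuthorizer:
    """Toy model (hypothetical): Ranger HDFS policies first, then ACL fallback."""

    # user -> path prefixes covered by a recursive Ranger HDFS "All" policy
    ranger_recursive_all: dict[str, set[str]] = field(default_factory=dict)
    # path -> {user: set of permissions}, standing in for the location's ACL
    acls: dict[str, dict[str, set[str]]] = field(default_factory=dict)

    def _covered_by_policy(self, user: str, path: str) -> bool:
        path = normpath(path)
        for prefix in self.ranger_recursive_all.get(user, set()):
            prefix = normpath(prefix)
            # Recursive: the prefix itself or anything beneath it matches.
            if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
                return True
        return False

    def is_allowed(self, user: str, path: str, action: str) -> bool:
        if self._covered_by_policy(user, path):
            return True  # an "All" policy grants every action
        # No matching Ranger policy: fall back to the ACL on the location.
        return action in self.acls.get(normpath(path), {}).get(user, set())
```

For example, a recursive policy on `/data/sales` for `alice` covers `/data/sales/2024/part-0` but not `/data/salesforce`, which is decided by its ACL instead. Every location that is not covered by a policy needs its own ACL entry, which is the maintenance burden the Hive Authorizer URL policy is meant to avoid.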

To create a Hive Authorizer policy:

Hive authorizer URL policy with hdfs default value

Learn about the performance issue you may encounter when the ranger.plugin.hive.urlauth.filesystem.schemes configuration includes hdfs: as a default filesystem scheme.

The default value of the ranger.plugin.hive.urlauth.filesystem.schemes configuration is hdfs:,file:,wasb:,adl:. Consider the case where this configuration includes hdfs: as a default filesystem scheme in both HMS and HiveServer2, and both the Ranger Hive and HDFS plugins are enabled. When you perform a CREATE EXTERNAL TABLE operation with a LOCATION clause, the Ranger Plugin Authorizer performs authorization checks on every file within the target directory. The cost of these checks grows linearly with the number of files, so the operation can take a long time to complete, or even fail with an error, if the specified location contains a large number of subdirectories.
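To see why the cost grows with the size of the directory, the following Python sketch counts how many per-entry checks a recursive authorizer would make under a LOCATION directory. It is a hypothetical model that assumes one check for the directory itself plus one for every file and subdirectory beneath it. It does not call Ranger or HDFS, and the function name is illustrative.

```python
import os
import tempfile


def count_recursive_checks(location: str) -> int:
    """Hypothetical model: number of authorization checks under `location`.

    Assumes one check for the LOCATION directory itself and one for every
    file and subdirectory found while walking the tree beneath it.
    """
    checks = 1  # the LOCATION directory itself
    for _root, dirs, files in os.walk(location):
        checks += len(dirs) + len(files)
    return checks


if __name__ == "__main__":
    # Build local directories of growing size; the check count grows with them.
    for n_files in (10, 100, 1000):
        with tempfile.TemporaryDirectory() as location:
            for i in range(n_files):
                open(os.path.join(location, f"part-{i:05d}"), "w").close()
            print(n_files, "files ->", count_recursive_checks(location), "checks")
```

Each additional file adds one more check in this model, which is the linear growth described above. On a real cluster, each of those checks may also involve a call to the Ranger HDFS plugin or the NameNode.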

If you remove hdfs: from the configuration, the request is authorized against the Hive URL policy, and neither the Ranger HDFS plugin nor the Hadoop ACL is checked. This results in faster responses and shorter wait times.
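The effect of the scheme list can be summarized as a routing decision on the LOCATION URL. The following Python sketch is a hypothetical model of the behavior described on this page, not Ranger's code. The function names and return labels are illustrative, and the sketch assumes a simple prefix match of the URL against each configured scheme.

```python
# Default value of ranger.plugin.hive.urlauth.filesystem.schemes (from this page).
DEFAULT_SCHEMES = "hdfs:,file:,wasb:,adl:"


def parse_schemes(value: str) -> list[str]:
    """Split a comma-separated scheme list, dropping blanks and whitespace."""
    return [s.strip() for s in value.split(",") if s.strip()]


def authorization_path(location: str, schemes_value: str = DEFAULT_SCHEMES) -> str:
    """Hypothetical routing of a LOCATION URL based on the configured schemes.

    Assumption: a location whose URL starts with a listed scheme goes through
    the filesystem check (Ranger HDFS plugin or Hadoop ACL, file by file);
    any other location is authorized against the Hive URL policy only.
    """
    if any(location.startswith(s) for s in parse_schemes(schemes_value)):
        return "filesystem-check"
    return "hive-url-policy-only"


if __name__ == "__main__":
    url = "hdfs://namenode:8020/warehouse/external/sales"  # illustrative URL
    print(authorization_path(url))                      # default schemes
    print(authorization_path(url, "file:,wasb:,adl:"))  # hdfs: removed
```

With the default value, an hdfs:// location triggers the per-file filesystem check. After hdfs: is removed from the list, the same location is evaluated against the Hive URL policy only.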