Ephemeral storage

Ephemeral storage is scratch space that a CML session, job, application, or model can use. Configuring it helps CML schedule pods more effectively and provides a safety valve, so that a runaway computation cannot consume all available scratch space on the node.

By default, each user pod in CML is allocated a minimum (request) of 0 GB of scratch space and is allowed to use up to a maximum (limit) of 10 GB. These settings can be applied to an entire site or customized on a per-project basis.
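To make the limit concrete, the following is a minimal sketch of how a workload might estimate how much scratch space it has left before reaching the default 10 GB maximum. It is not part of CML: the function names are hypothetical, the 10 GB figure is assumed to mean decimal gigabytes, and summing file sizes only approximates what the platform actually counts against the limit.

```python
import os

# Assumption: the documented 10 GB default is read as decimal gigabytes.
DEFAULT_LIMIT_BYTES = 10 * 10**9


def scratch_usage_bytes(path):
    """Sum the sizes of regular files under `path` (symlinks are skipped)."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            full = os.path.join(root, name)
            try:
                if not os.path.islink(full):
                    total += os.path.getsize(full)
            except OSError:
                # The file may have been removed while walking; ignore it.
                pass
    return total


def headroom_bytes(path, limit_bytes=DEFAULT_LIMIT_BYTES):
    """Return how many bytes remain before `limit_bytes` is reached."""
    return limit_bytes - scratch_usage_bytes(path)
```

A long-running job could call `headroom_bytes()` on its scratch directory (the directory to pass is an assumption that depends on where the workload writes temporary files) and clean up or stop early when the result approaches zero.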

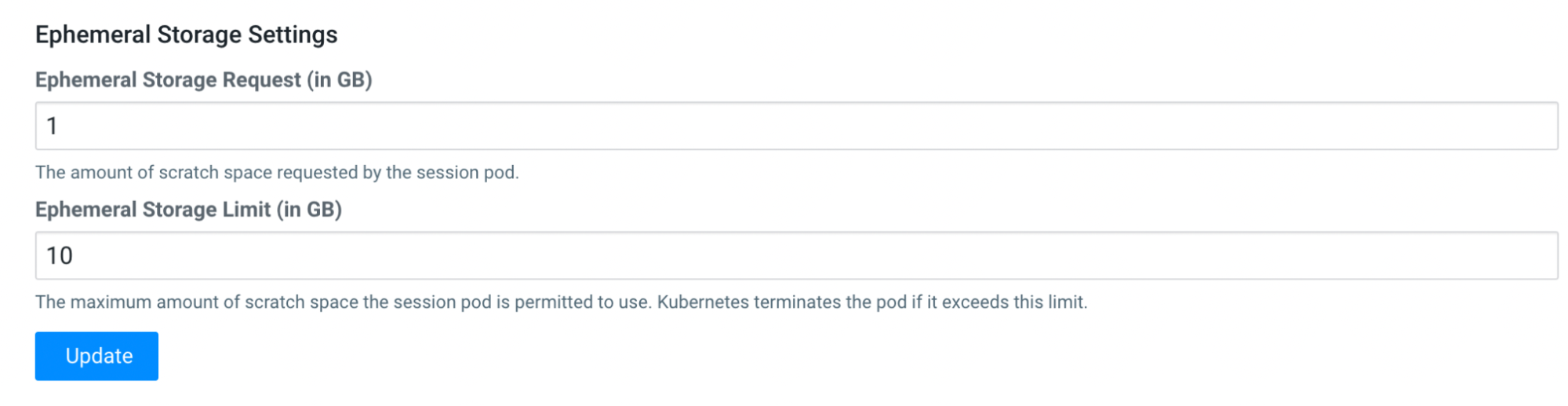

Change Site-wide Ephemeral Storage Configuration

In , you can change the ephemeral storage request (minimum) and limit (maximum).

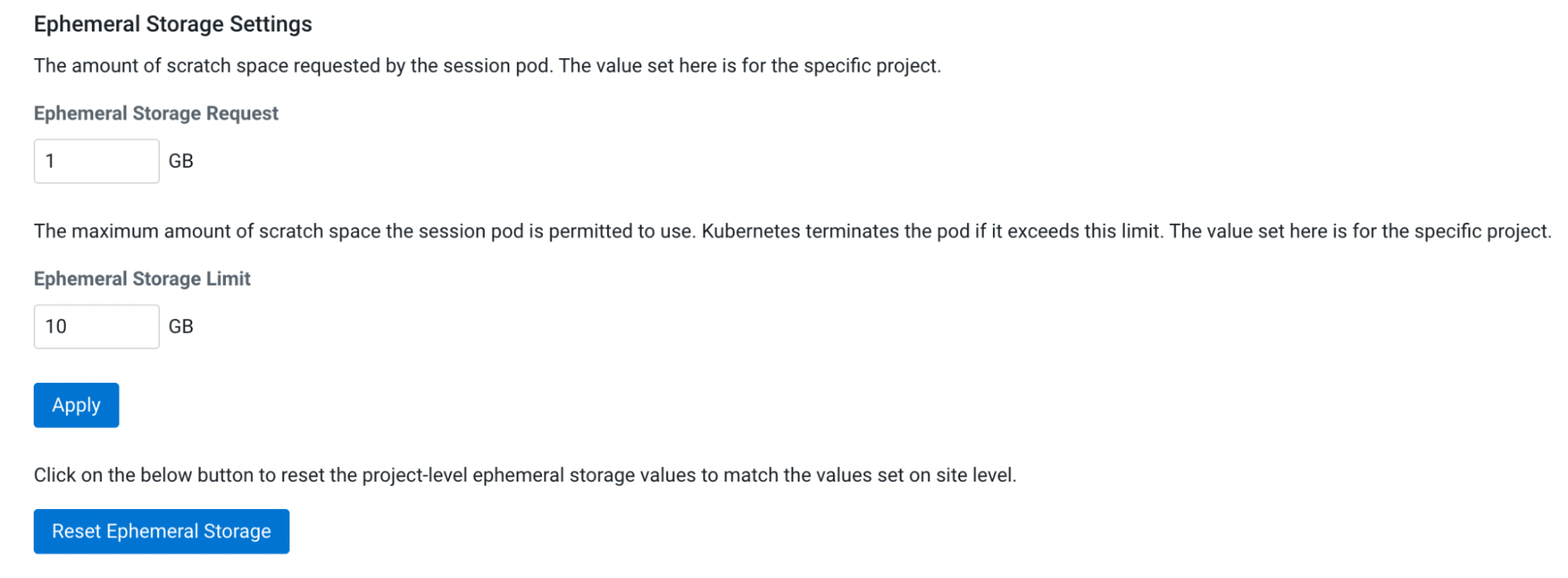

Override Site-wide Ephemeral Storage Configuration

To customize the ephemeral storage settings for a single project, open the project, then click on and adjust the ephemeral storage parameters.
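The relationship between site-wide and per-project settings can be sketched as a simple override rule. This is an illustration only, not CML's implementation: the `EphemeralStorage` class and `effective_settings` function are hypothetical names, and the check that the request does not exceed the limit is an assumption based on how Kubernetes treats resource requests and limits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EphemeralStorage:
    request_gb: float  # minimum scratch space reserved for the pod
    limit_gb: float    # maximum scratch space the pod may use


# Defaults described in this topic: 0 GB request, 10 GB limit.
SITE_DEFAULT = EphemeralStorage(request_gb=0, limit_gb=10)


def effective_settings(site: EphemeralStorage,
                       project: Optional[EphemeralStorage] = None) -> EphemeralStorage:
    """Return the project override if one is set, otherwise the site-wide values."""
    chosen = project if project is not None else site
    # Assumption: a request must be non-negative and no larger than the limit.
    if chosen.request_gb < 0 or chosen.request_gb > chosen.limit_gb:
        raise ValueError("request must be between 0 and the limit")
    return chosen
```

For example, `effective_settings(SITE_DEFAULT, EphemeralStorage(2, 20))` returns the project values, while `effective_settings(SITE_DEFAULT)` falls back to the site-wide defaults.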