Known Issues in Hue

Learn about the known issues in Hue, the impact or changes to the functionality, and the workaround.

Known Issues identified in Cloudera Runtime 7.3.1.706 SP3 CHF 2

- CDPD-95086: Hue UI fails to load due to a connection timeout error

- 7.3.1.706

Known Issues identified in Cloudera Runtime 7.3.1.600 SP3 CHF 1

- CDPD-92946: Hue debug logging cannot be disabled

- 7.3.1.600, 7.3.1.706

Known Issues identified in Cloudera Runtime 7.3.1.500 SP3

- CDPD-88964: Hue Logs Missing in Hue UI

- 7.3.1.500, 7.3.1.600, 7.3.1.706

- CDPD-90510: Defunct Hue gunicorn worker processes

- 7.3.1.500

- Uploading files from Hue to S3 fails

- 7.3.1.500

Known Issues identified in Cloudera Runtime 7.3.1.400 SP2

There are no new known issues identified in this release.

Known Issues identified in Cloudera Runtime 7.3.1.300 SP1 CHF 1

- CDPD-83015: Files or directories with the % character in their names may fail to open or copy

- 7.3.1.300, 7.3.1.400, 7.3.1.500, 7.3.1.600, 7.3.1.700

Known Issues identified in Cloudera Runtime 7.3.1.200 SP1

There are no new known issues identified in this release.

Known Issues identified in Cloudera Runtime 7.3.1.100 CHF 1

There are no new known issues identified in this release.

Known Issues in Cloudera Runtime 7.3.1

- CDPD-58978: Batch query execution using Hue fails with Kerberos error

- 7.2.16 SPs and their higher versions, 7.2.17 SPs and their higher versions, 7.3.1 and its higher versions

- CDPD-54376: Clicking the home button on the File Browser page redirects to HDFS user directory

- 7.2.17 SPs and their higher versions, 7.3.1 and its higher versions

- CDPD-43293: Unable to import Impala table using Importer

- 7.2.16 SPs and their higher versions, 7.2.17 SPs and their higher versions, 7.3.1 and its higher versions

- CDPD-64541, CDPD-63617: Creating managed tables using Hue Importer fails on RAZ-enabled GCP environments

- 7.2.18 SPs and their higher versions, 7.3.1 SPs and their higher versions

- CDPD-56888: Renaming a folder with special characters results in a duplicate folder with a new name on AWS S3.

- 7.2.17 SPs and their higher versions, 7.2.18 SPs and their higher versions, 7.3.1 SPs and their higher versions

- CDPD-48146: Error while browsing S3 buckets or ADLS containers from the left-assist panel

- 7.2.17 SPs and their higher versions, 7.2.18 SPs and their higher versions, 7.3.1 SPs and their higher versions

- CDPD-42619: Unable to import a large CSV file from the local workstation

- 7.2.16 SPs and their higher versions, 7.2.17 SPs and their higher versions, 7.2.18 SPs and their higher versions, 7.3.1 SPs and their higher versions

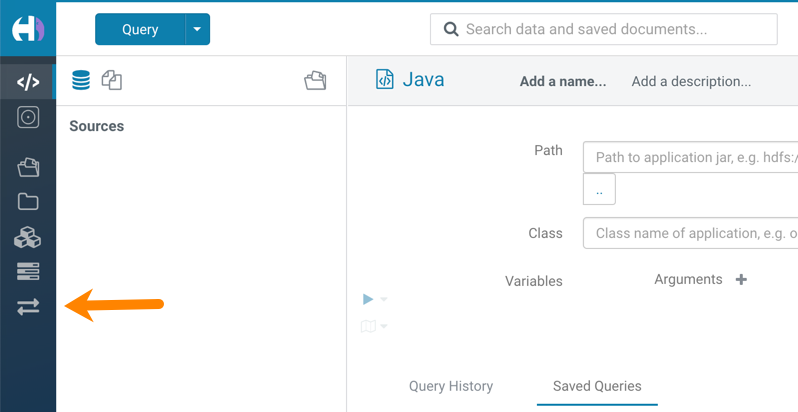

- Hue Importer is not supported in the Data Engineering template

- When you create a Cloudera Data Hub cluster using the Cloudera Data Engineering template, the Importer application is not supported in Hue.

Unsupported features

- CDPD-59595: Spark SQL does not work with all Livy servers that are configured for High Availability

- SparkSQL support in Hue with Livy servers in HA mode is not supported. Hue does not automatically connect to one of the Livy servers. You must specify the Livy server in the Hue Advanced Configuration Snippet as follows:

      [desktop]
      [spark]
      livy_server_url=http(s)://[***LIVY-FOR-SPARK3-SERVER-HOST***]:[***LIVY-FOR-SPARK3-SERVER-PORT***]

  Moreover, you may see the following error in Hue when you submit a SparkSQL query: Expecting value: line 2 column 1 (char 1). This happens when the Livy server does not respond to the request from Hue.

- Importing and exporting Oozie workflows across clusters and between different CDH versions is not supported

- You can export Oozie workflows, schedules, and bundles from Hue and import them only within the same cluster if the cluster is unchanged. You can migrate bundle and coordinator jobs with their workflows only if their arguments have not changed between the old and the new cluster. These arguments include, for example, hostnames, NameNode, Resource Manager names, YARN queue names, and all the other parameters defined in the workflow.xml and job.properties files.

  Using the import-export feature to migrate data between clusters is not recommended. To migrate data between different versions of CDH, for example, from CDH 5 to Cloudera 7, you must take a dump of the Hue database on the old cluster, restore it on the new cluster, and set up the database in the new environment. Also, the authentication method on the old and the new cluster should be the same because the Oozie workflows are tied to a user ID, and the exact user ID needs to be present in the new environment so that when a user logs into Hue, they can access their respective workflows.
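Because migrating bundle and coordinator jobs depends on the workflow arguments being unchanged, it can help to compare the job.properties files from the old and the new cluster before attempting an import. The following Python sketch is one possible way to do that and is not part of Hue or Oozie. The helper names `parse_properties` and `diff_properties`, the host names, and the sample property values are all hypothetical, and the parser only handles simple `key=value` lines rather than the full Java properties syntax.

```python
def parse_properties(text):
    """Parse simple key=value lines, skipping blank lines and # or ! comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line[0] in "#!":
            continue
        # Split on the first "=" only, so values may themselves contain "=".
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def diff_properties(old, new):
    """Return {key: (old_value, new_value)} for keys that differ or exist on one side only."""
    keys = sorted(set(old) | set(new))
    return {k: (old.get(k), new.get(k)) for k in keys if old.get(k) != new.get(k)}


# Hypothetical job.properties contents from the old and the new cluster.
OLD = """\
# old cluster
nameNode=hdfs://old-nn.example.com:8020
jobTracker=old-rm.example.com:8032
queueName=default
"""
NEW = """\
# new cluster
nameNode=hdfs://new-nn.example.com:8020
jobTracker=old-rm.example.com:8032
queueName=default
"""

changed = diff_properties(parse_properties(OLD), parse_properties(NEW))
for key, (before, after) in changed.items():
    print(f"{key}: {before!r} -> {after!r}")
```

An empty result suggests that the properties file, at least, carries the same arguments on both clusters. Any reported key, such as a changed NameNode address, indicates an argument that differs. Parameters defined in workflow.xml are not covered by this sketch and would need a separate check.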