The NFS Gateway for HDFS allows HDFS to be mounted as part of the client's local file system.

This release of NFS Gateway supports and enables the following usage patterns:

Users can browse the HDFS file system through their local file system on NFSv3 client compatible operating systems.

Users can download files from the HDFS file system to their local file system.

Users can upload files from their local file system directly to the HDFS file system.

Note: NFS access to HDFS does not support random writes or file appends in this release of HDP. If you need support for file appends to stream data to HDFS through NFS, upgrade to HDP 2.0.

Prerequisites:

The NFS gateway machine needs everything required to run an HDFS client, such as the Hadoop core JAR file and the HADOOP_CONF directory.

The NFS gateway can be on any DataNode, NameNode, or any HDP client machine. Start the NFS server on that machine.

Instructions: Use the following instructions to configure and use the HDFS NFS gateway:

Configure settings for the HDFS NFS gateway:

The NFS gateway uses the same configuration as the NameNode and DataNode. Configure the following three properties based on your application's requirements:

Edit the hdfs-default.xml file on your NFS gateway machine and modify the following property:

    <property>
      <name>dfs.access.time.precision</name>
      <value>3600000</value>
      <description>The access time for an HDFS file is precise up to this value. The default value is 1 hour. Setting a value of 0 disables access times for HDFS.</description>
    </property>

Note: If the export is mounted with access time updates allowed, make sure this property is not disabled in the configuration file. Only the NameNode needs to be restarted after this property is changed. If you have disabled access time updates by mounting with "noatime", you do NOT have to change this property or restart your NameNode.
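The value of dfs.access.time.precision is expressed in milliseconds, which is why the 1 hour default appears as 3600000. As a small convenience, the following sketch converts a precision given in whole hours into the number to place in the value element. The function name access_time_precision_ms is hypothetical and not part of Hadoop.

```shell
#!/bin/sh
# Hypothetical helper: convert an access-time precision given in whole hours
# into the millisecond value expected by dfs.access.time.precision.
access_time_precision_ms() {
    hours=$1
    # 1 hour = 60 min * 60 s * 1000 ms = 3600000 ms
    echo $(( $hours * 60 * 60 * 1000 ))
}

# Print the value for the 1 hour default.
access_time_precision_ms 1
```

Passing 0 yields 0, which corresponds to the setting that disables access times.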

Update the following property in hdfs-site.xml:

    <property>
      <name>dfs.datanode.max.xcievers</name>
      <value>1024</value>
    </property>

Note: If the number of files being uploaded in parallel through the NFS gateway exceeds this value (1024), increase the value of this property accordingly. The new value must be based on the maximum number of files being uploaded in parallel.

Restart your DataNodes after making this change to the configuration file.

Add the following property to hdfs-site.xml:

    <property>
      <name>dfs.nfs3.dump.dir</name>
      <value>/tmp/.hdfs-nfs</value>
    </property>

Optional - Customize log settings.

Edit the log4j.properties file as follows.

To change the trace level, add the following:

log4j.logger.org.apache.hadoop.hdfs.nfs=DEBUG

To get more details on RPC requests, add the following:

log4j.logger.org.apache.hadoop.oncrpc=DEBUG

Start NFS gateway service.

Three daemons are required to provide NFS service: rpcbind (or portmap), mountd, and nfsd. The NFS gateway process has both nfsd and mountd. It shares the HDFS root "/" as the only export. It is recommended to use the portmap included in the NFS gateway package, as shown below:

Stop the nfs/rpcbind/portmap services provided by the platform:

service nfs stop

service rpcbind stop

Start the portmap included in the package (requires root privileges):

hadoop portmap

OR

hadoop-daemon.sh start portmap

Start mountd and nfsd.

No root privileges are required for this command. However, verify that the user starting the Hadoop cluster and the user starting the NFS gateway are the same.

hadoop nfs3

OR

hadoop-daemon.sh start nfs3

Note: If the hadoop-daemon.sh script starts the NFS gateway, its log can be found in the hadoop log folder.

Stop NFS gateway services.

hadoop-daemon.sh stop nfs3

hadoop-daemon.sh stop portmap

Verify the validity of NFS-related services.

Execute the following command to verify if all the services are up and running:

rpcinfo -p $nfs_server_ip

You should see output similar to the following:

    program vers proto   port
     100005    1   tcp   4242  mountd
     100005    2   udp   4242  mountd
     100005    2   tcp   4242  mountd
     100000    2   tcp    111  portmapper
     100000    2   udp    111  portmapper
     100005    3   udp   4242  mountd
     100005    1   udp   4242  mountd
     100003    3   tcp   2049  nfs
     100005    3   tcp   4242  mountd

Verify if the HDFS namespace is exported and can be mounted by any client.

showmount -e $nfs_server_ip

You should see output similar to the following:

    Exports list on $nfs_server_ip :
    / (everyone)
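The two verification commands above (rpcinfo -p and showmount -e) can also be checked by a script rather than by eye. The following is a minimal sketch, assuming the output has the same shape as the samples shown in this section. The function names check_nfs_services and check_root_export are hypothetical. Both read the command output on standard input, so they can be tried on saved text before being pointed at a live gateway.

```shell
#!/bin/sh
# Sketch: validate captured `rpcinfo -p` and `showmount -e` output.
# Both functions read the command output on stdin.

# Succeeds only if portmapper, mountd, and nfs are all registered.
check_nfs_services() {
    output=$(cat)
    for service in portmapper mountd nfs; do
        # Match the service name as the last field of a line.
        if ! printf '%s\n' "$output" | grep -Eq "[[:space:]]${service}[[:space:]]*\$"; then
            echo "missing service: $service" >&2
            return 1
        fi
    done
    return 0
}

# Succeeds only if the export list contains the HDFS root "/".
check_root_export() {
    # An export line starts with the exported path, followed by
    # whitespace or the end of the line.
    grep -Eq '^/([[:space:]]|$)'
}

# Example against a live gateway (not run here):
#   rpcinfo -p "$nfs_server_ip" | check_nfs_services
#   showmount -e "$nfs_server_ip" | check_root_export
```

Each function returns a non-zero status on failure, so the calls can be chained with && in a health check.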

Mount the export “/”.

Currently, NFSv3 is supported, and it uses TCP as the transport protocol. Users can mount the HDFS namespace as shown below:

mount -t nfs -o vers=3,proto=tcp,nolock $server:/ $mount_point

Users can then access HDFS as part of the local file system, except that hard links, symbolic links, and random writes are not supported in this release. We do not recommend using tools like vim for creating files on the mounted directory. The supported use cases for this release are file browsing, uploading, and downloading.
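When several clients need the same mount, it can help to generate the command from the server name and mount point and review it before running it. The following is a minimal sketch that only prints the command. The helper name nfs_mount_command, the host nfsgw.example.com, and the mount point /mnt/hdfs are placeholders, not values from this guide.

```shell
#!/bin/sh
# Sketch: print the mount command for the HDFS export "/" instead of
# running it, so it can be reviewed first.
# nfs_mount_command is a hypothetical helper name.
nfs_mount_command() {
    server=$1
    mount_point=$2
    if [ -z "$server" ] || [ -z "$mount_point" ]; then
        echo "usage: nfs_mount_command SERVER MOUNT_POINT" >&2
        return 1
    fi
    # Options as given in this section: NFSv3 over TCP, without locking.
    echo "mount -t nfs -o vers=3,proto=tcp,nolock ${server}:/ ${mount_point}"
}

# Placeholder host and mount point, for illustration only.
nfs_mount_command nfsgw.example.com /mnt/hdfs
```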

User authentication and mapping:

NFS gateway in this release uses AUTH_UNIX style authentication, which means that the login user on the client is the same user that NFS passes to HDFS. For example, if the current user on the NFS client is admin, then when that user accesses the mounted directory, the NFS gateway accesses HDFS as user admin. To access HDFS as the hdfs user, you must first switch the current user to hdfs on the client system before accessing the mounted directory.

Set up client machine users to interact with HDFS through NFS.

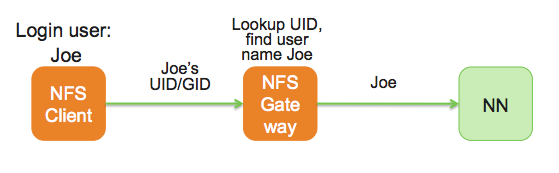

The NFS gateway converts the UID to a user name, and HDFS uses the user name for checking permissions.

The system administrator must ensure that the user on the NFS client machine has the same name and UID as that on the NFS gateway machine. This is usually not a problem if you use the same user management system (e.g., LDAP/NIS) to create and deploy users to the HDP nodes and to the client node.

If the user is created manually, you might need to modify the UID on either the client or the NFS gateway host to make them the same.
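The name and UID requirement above can be checked by comparing user listings from the two hosts. The following is a minimal sketch, assuming each host's users have been saved in passwd format (name:password:UID:GID:...), for example from `getent passwd`. The helper names uid_of and same_uid and the file names in the usage comment are hypothetical.

```shell
#!/bin/sh
# Sketch: compare a user's UID in two passwd-format listings,
# one taken from the NFS client and one from the NFS gateway.
# uid_of and same_uid are hypothetical helper names.

# Print the UID recorded for user $1 in passwd-format file $2.
uid_of() {
    awk -F: -v user="$1" '$1 == user { print $3; exit }' "$2"
}

# Succeed only if user $1 exists in both files with the same UID.
same_uid() {
    client_uid=$(uid_of "$1" "$2")
    gateway_uid=$(uid_of "$1" "$3")
    [ -n "$client_uid" ] && [ "$client_uid" = "$gateway_uid" ]
}

# Example (not run here): save `getent passwd` output on each host, then
#   same_uid admin client_passwd.txt gateway_passwd.txt && echo "UIDs match"
```

A user that is missing from the client listing is treated as a mismatch, since the gateway could not map that user consistently.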

The figure above illustrates how the user ID and name are communicated between the NFS client, the NFS gateway, and the NameNode.