Chapter 7. Using Spark SQL

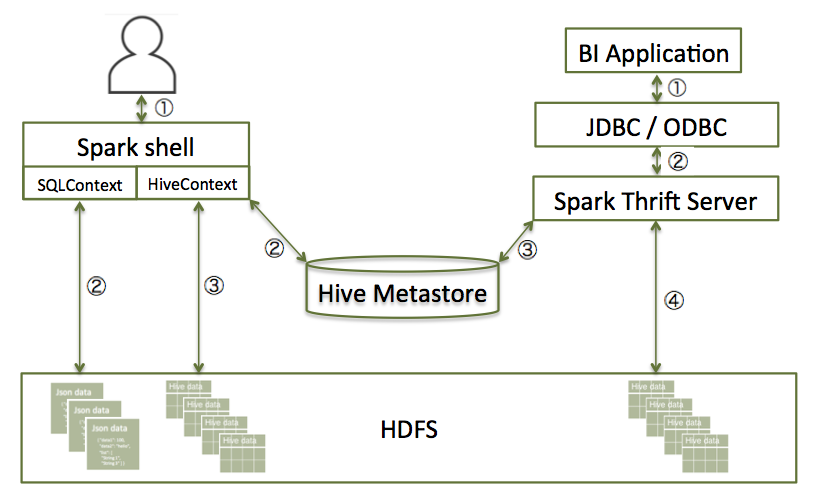

Spark SQL can read data directly from the filesystem, when SQLContext is used. This is useful when the data you are trying to analyze does not reside in Hive (for example, JSON files stored in HDFS).

Spark SQL can also read data by interacting with the Hive MetaStore, when HiveContext is used. If you already use Hive, you should use HiveContext; it supports all Hive data formats and user-defined functions (UDFs), and allows full access to the HiveQL parser. HiveContext extends SQLContext, so HiveContext supports all SQLContext functionality.

There are two ways to interact with Spark SQL:

Interactive access using the Spark shell (see Accessing Spark SQL through the Spark Shell).

From an application, operating through one of the following two APIs and the Spark Thrift Server:

JDBC, using your own Java code or the Beeline JDBC client.

ODBC, via the Simba ODBC driver.

For more information, see Accessing Spark SQL through JDBC and ODBC.

The following diagram outlines the access process, depending on type of interaction: