Sqoop-HCatalog Integration

This section describes the interaction between HCatalog with Sqoop.

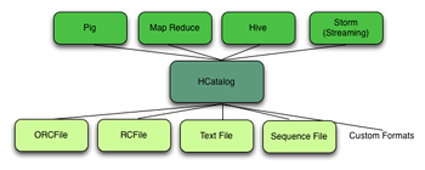

HCatalog is a table and storage management service for Hadoop that enables users with different data processing tools – Pig, MapReduce, and Hive – to more easily read and write data on the grid. HCatalog’s table abstraction presents users with a relational view of data in the Hadoop distributed file system (HDFS) and ensures that users need not worry about where or in what format their data is stored: RCFile format, text files, or SequenceFiles.

HCatalog supports reading and writing files in any format for which a Hive SerDe (serializer-deserializer) has been written. By default, HCatalog supports RCFile, CSV, JSON, and SequenceFile formats. To use a custom format, you must provide the InputFormat and OutputFormat as well as the SerDe.

The ability of HCatalog to abstract various storage formats is used in providing RCFile (and future file types) support to Sqoop.

Exposing HCatalog Tables to Sqoop

HCatalog interaction with Sqoop is patterned on an existing feature set that supports Avro and Hive tables. This section introduces five command line options. Some command line options defined for Hive are reused.

Relevant Command-Line Options

| Command-line Option | Description |

|---|---|

| Specifies the database name for the HCatalog table. If not specified, the default database name ‘default’ is used. Providing the --hcatalog-database option without --hcatalog-table is an error. This is not a required option. |

| The argument value for this option is the HCatalog tablename. The presence of the --hcatalog-table option signifies that the import or export job is done using HCatalog tables, and it is a required option for HCatalog jobs. |

| The home directory for the HCatalog installation. The directory is expected to have a lib subdirectory and a share/hcatalog subdirectory with necessary HCatalog libraries. If not specified, the system environment variable HCAT_HOME will be checked and failing that, a system property hcatalog.home will be checked. If none of these are set, the default value will be used and currently the default is set to /usr/lib/hcatalog. This is not a required option. |

| This option specifies whether an HCatalog table should be created automatically when importing data. By default, HCatalog tables are assumed to exist. The table name will be the same as the database table name translated to lower case. Further described in the section called “Automatic Table Creation”. |

| This option specifies the storage stanza to be appended to the table. Further described in the section called “Automatic Table Creation”. |

Supported Sqoop Hive Options

The following Sqoop options are also used along with the --hcatalog-table option to provide additional input to the HCatalog jobs. Some of the existing Hive import job options are reused with HCatalog jobs instead of creating HCatalog-specific options for the same purpose.

| Command-line Option | Description |

|---|---|

| This option maps a database column to HCatalog with a specific HCatalog type. |

| The Hive home location. |

| Used for static partitioning filter. The partitioning key should be of type STRING. There can be only one static partitioning key. |

| The value associated with the partition. |

Direct Mode Support

HCatalog integration in Sqoop has been enhanced to support direct mode connectors. Direct mode connectors are high performance connectors specific to a database. The Netezza direct mode connector is enhanced to use this feature for HCatalog jobs.

![[Important]](../common/images/admon/important.png) | Important |

|---|---|

Only the Netezza direct mode connector is currently enabled to work with HCatalog. |

Unsupported Sqoop Hive Import Options

Sqoop Hive options that are not supported with HCatalog jobs:

--hive-import--hive-overwrite

In addition, the following Sqoop export and import options are not supported with HCatalog jobs:

--direct--export-dir--target-dir--warehouse-dir--append--as-sequencefile--as-avrofile

Ignored Sqoop Options

All input delimiter options are ignored.

Output delimiters are generally ignored unless either

--hive-drop-import-delims or --hive-delims-replacement

is used. When the --hive-drop-import-delims or

--hive-delims-replacement option is specified, all database columns of

type CHAR are post-processed to either remove or replace the delimiters, respectively. (See

the section called “Delimited Text Formats and Field and Line Delimiter Characters”.) This is only needed if the HCatalog table uses text

format.