Creating a new deployment

Follow these instructions if you want to deploy a new cluster to run your flow.

Name your flow and deployment, and assign it to a project

On the Overview page, provide the deployment and flow names, and assign the deployment to a project. You can also import a previously exported deployment configuration, which auto-fills configuration values and speeds up deployment.

Configure NiFi

After selecting the target environment and project and naming your flow, you need to set the Apache NiFi version and, if needed, configure inbound connections and custom processors. Depending on the flow definition, you may also need to provide values for a number of configuration parameters. Finally, you need to set the capacity of the NiFi cluster servicing your deployment.

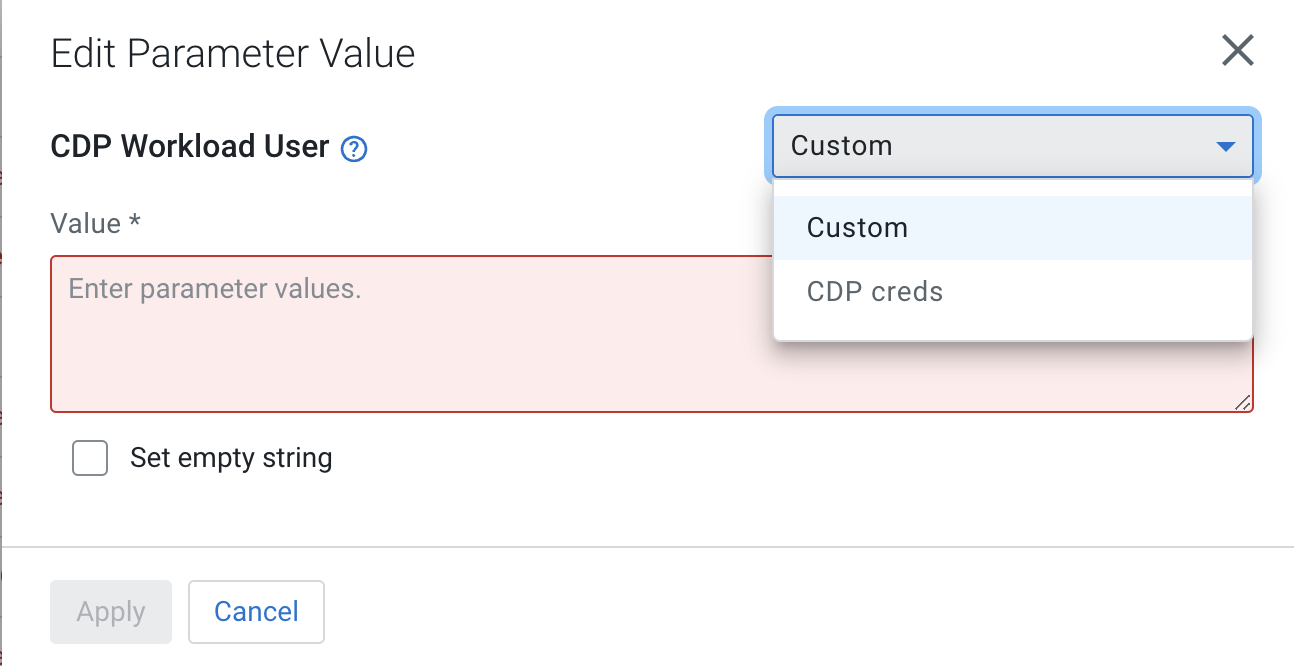

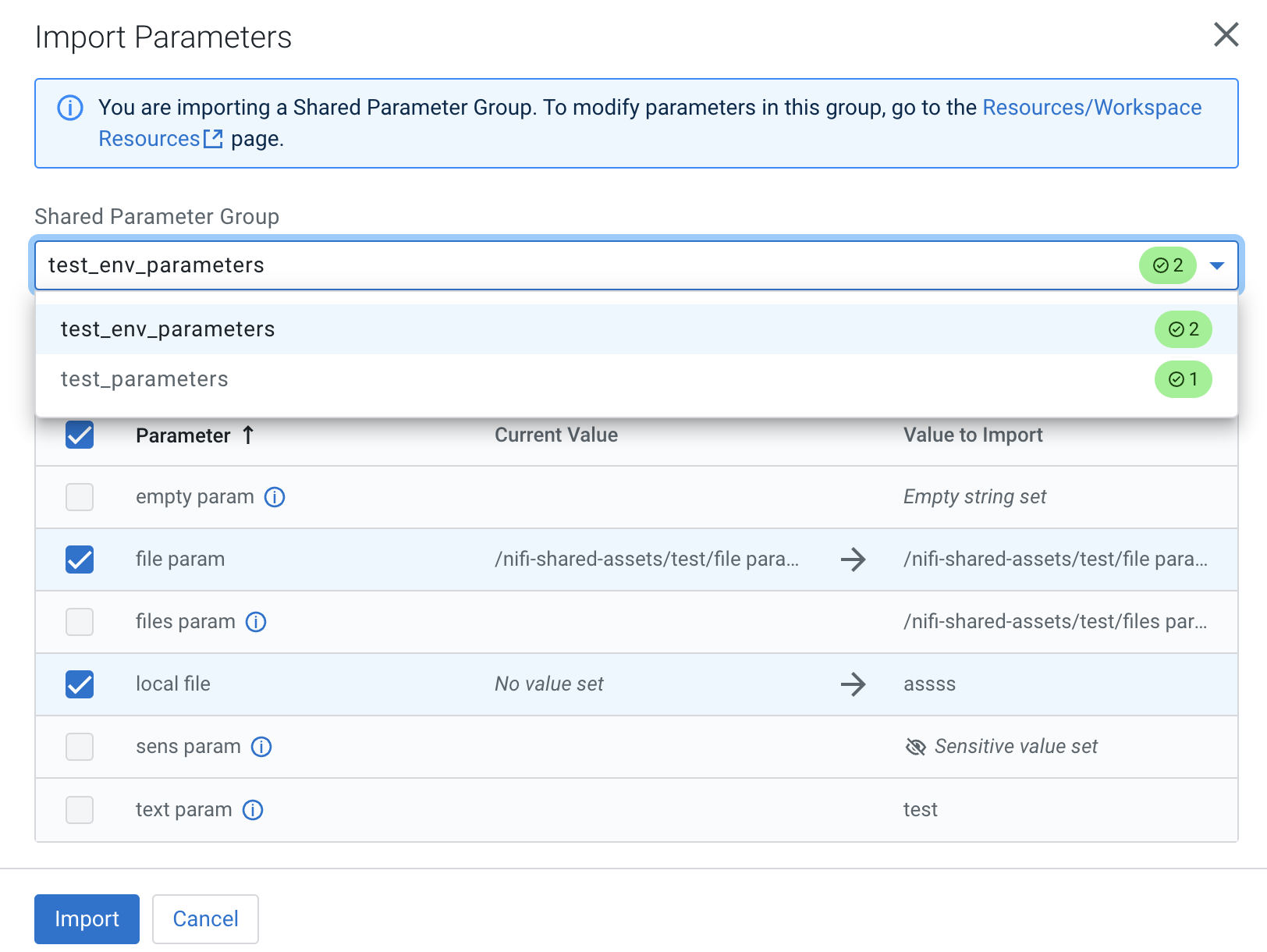

Provide parameter values

Depending on the flow you deploy, you may need to specify parameter values like connection strings or credentials, and upload files like truststores or JARs.

Configure sizing and scaling

Set the size and number of Apache NiFi nodes, configure auto-scaling, and choose the type of storage to be used.

Set key performance indicators

Optionally, add key performance indicators (KPIs) to help you track the performance of your flow deployment. Then review your settings and launch the deployment process.

Verify your settings and initiate deployment

Review deployment settings, make any necessary changes, and start deployment.

After you click Deploy, you are redirected to the Alerts tab in Flow Details, where you can track how the deployment progresses.
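
The review step before clicking Deploy can be pictured as a checklist over the values collected in the earlier steps. The following Python sketch is purely illustrative: `DeploymentSettings`, its field names, and `review` are hypothetical and do not correspond to any product API or export format. It only assumes that names, a project, and a NiFi version are required, that parameters should not be left empty, and that the node range must be consistent when auto-scaling is enabled.

```python
from dataclasses import dataclass, field


@dataclass
class DeploymentSettings:
    """Hypothetical container mirroring the wizard steps; not a product API."""
    deployment_name: str
    flow_name: str
    project: str
    nifi_version: str
    parameters: dict = field(default_factory=dict)  # e.g. connection strings
    min_nodes: int = 1
    max_nodes: int = 1           # only meaningful when auto-scaling is on
    auto_scaling: bool = False
    kpis: list = field(default_factory=list)        # optional


def review(settings: DeploymentSettings) -> list:
    """Return a list of readable problems; an empty list means nothing was flagged."""
    problems = []
    # Overview and NiFi configuration steps: assumed to be mandatory.
    for name in ("deployment_name", "flow_name", "project", "nifi_version"):
        if not getattr(settings, name).strip():
            problems.append(f"{name} is required")
    # Parameter step: flag parameters that were left without a value.
    for key, value in settings.parameters.items():
        if value in (None, ""):
            problems.append(f"parameter '{key}' has no value")
    # Sizing and scaling step: the node range must be consistent.
    if settings.min_nodes < 1:
        problems.append("min_nodes must be at least 1")
    if settings.auto_scaling and settings.max_nodes < settings.min_nodes:
        problems.append("max_nodes must be >= min_nodes when auto-scaling is on")
    return problems
```

Calling `review(settings)` on a fully filled-in instance returns an empty list, while any missing or inconsistent value shows up as a message to fix before starting the deployment. KPIs are left unchecked here because they are optional.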
