The following table describes several concepts related to HBase file operations and memory (RAM) caching.

| HBase Component | Description |
|---|---|
| HFile | An HFile contains table data, indexes over that data, and metadata about the data. |
| Block | An HBase block is the smallest unit of data that can be read from an HFile. Each HFile consists of a series of blocks. (Note: an HBase block is different from an HDFS block or other underlying file system blocks.) |
| BlockCache | BlockCache is the main HBase mechanism for low-latency random read operations. BlockCache is one of two memory cache structures maintained by HBase. When a block is read from HDFS, it is cached in BlockCache. Frequent access to rows in a block causes the block to be kept in cache, improving read performance. |
| MemStore | MemStore ("memory store") is the second of two cache structures maintained by HBase. MemStore improves write performance. It accumulates data until it is full, and then writes ("flushes") the data to a new HFile on disk. MemStore serves two purposes: it increases the total amount of data written to disk in a single operation, and it retains recently written data in memory for subsequent low-latency reads. |
| Write Ahead Log (WAL) | The WAL is a log file that records all changes to data until the data is successfully written to disk (MemStore is flushed). This protects against data loss in the event of a failure before MemStore contents are written to disk. |

BlockCache and MemStore reside in random-access memory (RAM); HFiles and the Write Ahead Log are persisted to HDFS.

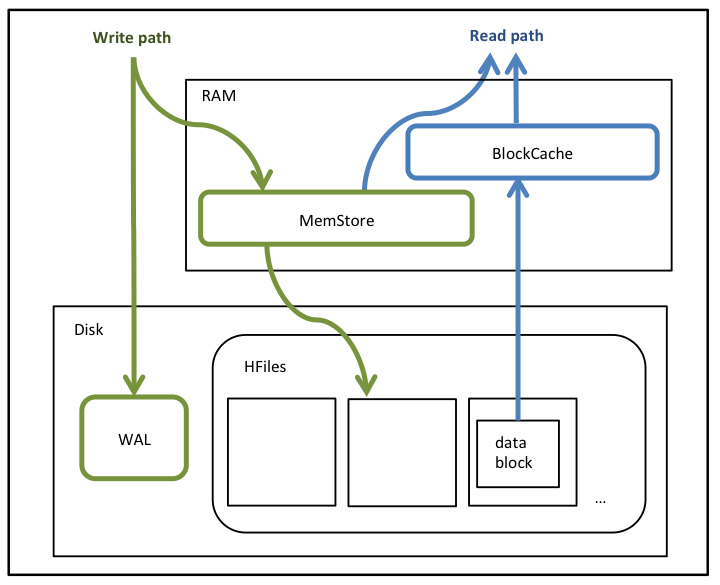

Figure 1 shows write and read paths (simplified):

During write operations, HBase writes to both the WAL and MemStore. Data is flushed from MemStore to disk according to size limits and the flush interval.

During read operations, HBase reads the requested data from MemStore or BlockCache if it is available in those caches. Otherwise, it reads the block from disk and stores a copy in BlockCache.

Figure 1. HBase read and write operations
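The write and read paths in Figure 1 can be sketched as a small toy model. This is not HBase code: the class `ReadWritePathSketch`, its cell-count flush threshold, and its row-level cache keys are all hypothetical simplifications (real HBase caches whole blocks, flushes by size and time, and merges versions by timestamp).

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Toy model of the simplified write and read paths; not HBase code. */
final class ReadWritePathSketch {
    // Hypothetical flush threshold, counted in cells to keep the model small.
    private final int flushThreshold;
    // Stands in for the WAL: changes not yet flushed to an HFile.
    private final List<String> wal = new ArrayList<>();
    // Stands in for MemStore: sorted, in-memory buffer of recent writes.
    private final TreeMap<String, String> memStore = new TreeMap<>();
    // Stands in for HFiles on disk, oldest first.
    private final List<TreeMap<String, String>> hFiles = new ArrayList<>();
    // Stands in for BlockCache, keyed by "fileIndex/row" instead of by block.
    private final Map<String, String> blockCache = new HashMap<>();
    int diskReads = 0;

    ReadWritePathSketch(int flushThreshold) {
        this.flushThreshold = flushThreshold;
    }

    /** Write path: record in the WAL, buffer in MemStore, flush when full. */
    void put(String row, String value) {
        wal.add(row + "=" + value);
        memStore.put(row, value);
        if (memStore.size() >= flushThreshold) {
            flush();
        }
    }

    /** Flush: MemStore contents become a new HFile; the WAL entries are no longer needed. */
    void flush() {
        hFiles.add(new TreeMap<>(memStore));
        memStore.clear();
        wal.clear();
    }

    /** Read path: MemStore first, then BlockCache, then disk (newest HFile first). */
    String get(String row) {
        String inMemory = memStore.get(row);
        if (inMemory != null) {
            return inMemory;
        }
        for (int i = hFiles.size() - 1; i >= 0; i--) {
            String key = i + "/" + row;
            String cached = blockCache.get(key);
            if (cached != null) {
                return cached;
            }
            diskReads++; // simulated disk access
            String onDisk = hFiles.get(i).get(row);
            if (onDisk != null) {
                blockCache.put(key, onDisk); // keep a copy for later reads
                return onDisk;
            }
        }
        return null;
    }

    int walSize() { return wal.size(); }
    int memStoreSize() { return memStore.size(); }
    int hFileCount() { return hFiles.size(); }

    public static void main(String[] args) {
        ReadWritePathSketch store = new ReadWritePathSketch(2);
        store.put("r1", "a");
        store.put("r2", "b"); // reaches the threshold and triggers a flush
        System.out.println(store.get("r1") + " after " + store.diskReads + " disk read(s)");
        System.out.println(store.get("r1") + " after " + store.diskReads + " disk read(s)");
    }
}
```

In this model, the first read of a flushed row goes to disk and the second is served from the cache, which is the behavior the figure describes.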

By default, BlockCache resides in an area of RAM that is managed by the Java Virtual Machine (JVM) garbage collector; this area of memory is known as "on-heap" memory or the "Java heap." The BlockCache implementation that manages the on-heap cache is called LruBlockCache.
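As the name suggests, LruBlockCache is built around least-recently-used eviction, which is why frequently accessed blocks tend to stay cached. The following is a minimal sketch of that idea using the standard `LinkedHashMap` in access order. The class `LruSketch` and its block-count limit are hypothetical; the actual HBase implementation is more elaborate and is not reproduced here.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Minimal least-recently-used cache sketch; not the HBase LruBlockCache. */
final class LruSketch<K, V> extends LinkedHashMap<K, V> {
    private static final long serialVersionUID = 1L;

    // Hypothetical capacity, counted in blocks rather than bytes.
    private final int maxBlocks;

    LruSketch(int maxBlocks) {
        // accessOrder = true: each get() moves the entry to the most-recent end.
        super(16, 0.75f, true);
        this.maxBlocks = maxBlocks;
    }

    /** Called by LinkedHashMap after each insert; evicts the least recently used entry when over capacity. */
    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        return size() > maxBlocks;
    }

    public static void main(String[] args) {
        LruSketch<String, String> cache = new LruSketch<>(2);
        cache.put("block-1", "x");
        cache.put("block-2", "y");
        cache.get("block-1");      // block-1 is now the most recently used
        cache.put("block-3", "z"); // over capacity: block-2 is evicted
        System.out.println(cache.keySet());
    }
}
```

Because every cached object here lives on the Java heap, a very large cache of this kind adds to the memory the garbage collector has to manage.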

If you have stringent read latency requirements and you have more than 20 GB of RAM available on your servers for use by HBase RegionServers, consider configuring BlockCache to use both on-heap and off-heap ("direct") memory, as shown in Figure 2. The associated BlockCache implementation is called BucketCache. Read latencies for BucketCache tend to be less erratic than those for LruBlockCache under large cache loads, because BucketCache, rather than JVM garbage collection, manages block cache allocation.

Figure 2 illustrates the two BlockCache implementations and MemStore, which always resides on the JVM heap.

Figure 2. BucketCache implementation
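The distinction between on-heap and off-heap ("direct") memory can be seen in plain Java, independent of HBase. The sketch below uses the standard `java.nio.ByteBuffer` API; the class `HeapVsDirectSketch`, its method names, and the buffer size are hypothetical and chosen only for illustration. It does not show how BucketCache itself allocates memory.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

/** Contrasts a heap buffer with a direct (off-heap) buffer; not HBase code. */
final class HeapVsDirectSketch {

    /** Allocates a buffer backed by a byte[] on the Java heap. */
    static ByteBuffer onHeapBlock(int size) {
        return ByteBuffer.allocate(size);
    }

    /** Allocates a buffer whose contents live outside the Java heap. */
    static ByteBuffer offHeapBlock(int size) {
        return ByteBuffer.allocateDirect(size);
    }

    /** Writes text into the buffer and reads it back, to show both kinds are used the same way. */
    static String roundTrip(ByteBuffer buffer, String text) {
        byte[] in = text.getBytes(StandardCharsets.UTF_8);
        buffer.clear();
        buffer.put(in);
        buffer.flip();
        byte[] out = new byte[buffer.remaining()];
        buffer.get(out);
        return new String(out, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        int size = 64 * 1024; // arbitrary size for illustration
        ByteBuffer heap = onHeapBlock(size);
        ByteBuffer direct = offHeapBlock(size);
        System.out.println("heap buffer is direct:   " + heap.isDirect());
        System.out.println("direct buffer is direct: " + direct.isDirect());
        System.out.println(roundTrip(direct, "cached block bytes"));
    }
}
```

Both buffers are read and written through the same interface; the difference is where the bytes live, and therefore whether the garbage collector has to account for them as heap data.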

Additional notes:

- BlockCache is enabled by default for all HBase tables.
- BlockCache is beneficial for all read operations, both random and sequential, though it is of primary consideration for random reads.
- All regions hosted by a RegionServer share the same BlockCache.
- You can turn BlockCache caching on or off per column family.