Using the %livy Interpreter to Access Spark

The Livy interpreter offers several advantages over the default Spark interpreter (%spark):

Sharing of Spark context across multiple Zeppelin instances.

Reduced resource use, by recycling resources after 60 minutes of inactivity (by default). The default Spark interpreter runs jobs, and retains job resources, indefinitely.

User impersonation. When the Zeppelin server runs with authentication enabled, the Livy interpreter propagates user identity to the Spark job so that the job runs as the originating user. This is especially useful when multiple users are expected to connect to the same set of data repositories within an enterprise. (The default Spark interpreter runs jobs as the default Zeppelin user.)

The ability to run Spark in yarn-cluster mode.

Prerequisites:

Before using SparkR through Livy, R must be installed on all nodes of your cluster. For more information, see SparkR Prerequisites in the Spark Component Guide.

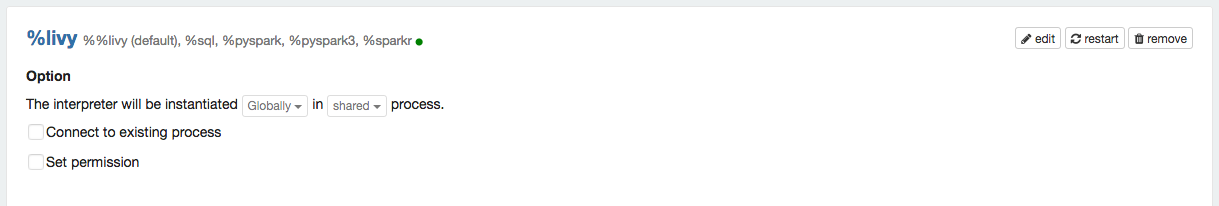

Before using Livy in a note, check the Interpreters page to ensure that the Livy interpreter is configured properly for your cluster:

Note: The Interpreters page is subject to access control settings. If the Interpreters page does not list access settings, check with your system administrator for more information.

To access PySpark using Livy, specify the corresponding interpreter directive before the code that accesses Spark; for example:

%livy.pyspark
print "1"

1

Similarly, to access SparkR using Livy, specify the corresponding interpreter directive:

%livy.sparkr
hello <- function( name ) {
    sprintf( "Hello, %s", name );
}

hello("livy")

Important: To use SQLContext with Livy, do not create SQLContext explicitly. Zeppelin creates SQLContext by default. If necessary, remove the following lines from the SparkSQL declaration area of your note:

//val sqlContext = new org.apache.spark.sql.SQLContext(sc)
//import sqlContext.implicits._

Livy sessions are recycled after a specified period of session inactivity. The default is one hour.

For more information about using Livy with Spark 1 or Spark 2, see Submitting Spark Applications Through Livy.

Importing External Packages

To import an external package for use in a note that runs with Livy:

1. Navigate to the interpreter settings.

2. If you are running the Livy interpreter in local mode (as specified by livy.spark.master), add jar files to the /usr/hdp/<version>/livy/repl-jars directory.

3. If you are running the Livy interpreter in yarn-cluster mode, either complete step 2 or edit the Livy configuration on the Interpreters page as follows:

   a. Add a new key, livy.spark.jars.packages.

   b. Set its value to <group>:<id>:<version>.

   Here is an example for the spray-json library, which implements JSON in Scala: io.spray:spray-json_2.10:1.3.1
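The <group>:<id>:<version> value is easy to mistype, so it can help to assemble it programmatically before pasting it into the interpreter settings. The following is a minimal sketch in plain Python. The function names maven_coordinate and packages_value are hypothetical helpers, not part of Zeppelin or Livy, and the comma-separated form for multiple packages is an assumption based on how Spark treats its own package list.

```python
import re

# Hypothetical helpers (not part of Zeppelin or Livy) for building the
# value of the livy.spark.jars.packages key.

# Characters accepted in each coordinate part; an illustrative assumption.
_COORD_PART = re.compile(r"^[A-Za-z0-9_.\-]+$")


def maven_coordinate(group, artifact, version):
    """Return '<group>:<id>:<version>', rejecting empty or malformed parts."""
    parts = (group, artifact, version)
    for part in parts:
        if not _COORD_PART.match(part):
            raise ValueError("invalid coordinate part: %r" % (part,))
    return ":".join(parts)


def packages_value(coordinates):
    """Join several (group, artifact, version) tuples with commas.

    Assumption: multiple packages are given as a comma-separated list.
    """
    return ",".join(maven_coordinate(*c) for c in coordinates)


# The spray-json example from the steps above.
print(maven_coordinate("io.spray", "spray-json_2.10", "1.3.1"))
```

The printed string is the value to enter for the livy.spark.jars.packages key.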

Running Applications that Require Third-party Libraries

To run Spark applications that require third-party libraries through Zeppelin and Livy, complete the following additional step so that the libraries are available on all nodes:

In the Zeppelin UI, click the user settings menu at the top right, then select Interpreter. On the Interpreters page, set the livy.spark.jars Livy property to point to the applicable .jar files. This must be an HDFS location.
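Because the property must point to HDFS, a quick check of the value before saving the interpreter settings can catch local or file: paths. The following is a minimal sketch in plain Python. The function check_livy_spark_jars and the example paths are hypothetical, and the sketch assumes that the value is a comma-separated list of fully qualified hdfs:// URIs.

```python
from urllib.parse import urlparse

# Hypothetical helper (not part of Zeppelin or Livy) that checks a
# livy.spark.jars value before it is entered on the Interpreters page.


def check_livy_spark_jars(value):
    """Split the value on commas and verify that each entry is an HDFS .jar.

    Assumptions: entries are comma-separated and use the hdfs:// scheme.
    """
    jars = [j.strip() for j in value.split(",") if j.strip()]
    for jar in jars:
        parsed = urlparse(jar)
        if parsed.scheme != "hdfs":
            raise ValueError("not an HDFS location: " + jar)
        if not parsed.path.endswith(".jar"):
            raise ValueError("not a .jar file: " + jar)
    return jars


# Example paths are placeholders, not locations from any real cluster.
print(check_livy_spark_jars(
    "hdfs:///user/zeppelin/lib/example-a.jar, hdfs:///user/zeppelin/lib/example-b.jar"
))
```

If your cluster resolves scheme-less paths against the default file system, relax the scheme check accordingly.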