Customizing Dynamic Resource Allocation Settings on an Ambari-Managed Cluster

On an Ambari-managed cluster, dynamic resource allocation is enabled and configured for the Spark Thrift server as part of the Spark installation process. Dynamic resource allocation is not enabled by default for general Spark jobs.

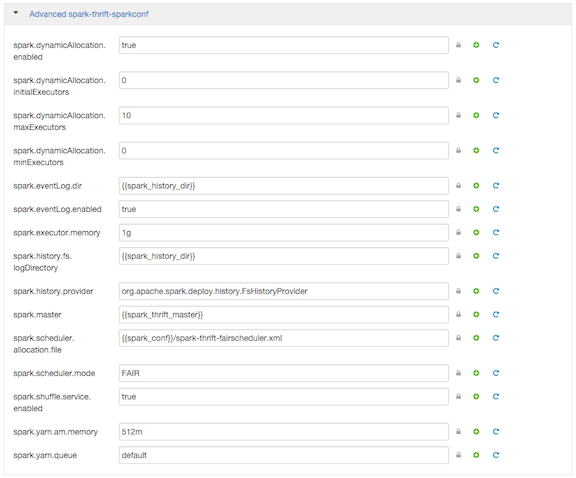

You can review dynamic resource allocation for the Spark Thrift server, and enable and configure settings for general Spark jobs, by choosing Services > Spark and then navigating to the "Advanced spark-thrift-sparkconf" group:

The "Advanced spark-thrift-sparkconf" group lists the required settings. You can specify optional properties in the "Custom spark-thrift-sparkconf" section. For a complete list of dynamic resource allocation (DRA) properties, see Dynamic Resource Allocation Properties.
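DRA settings are ordinary Spark key/value properties, so the same pairs you enter in Ambari can also be written as spark-defaults-style lines or passed to a single job with `spark-submit --conf`. The following is a minimal sketch of that mapping. The property names are standard Spark settings; the values, and the helper names `to_properties_lines` and `to_submit_args`, are hypothetical examples rather than recommended values or part of any Ambari API.

```python
# Minimal sketch: render dynamic resource allocation (DRA) settings as
# spark-defaults-style "key value" lines and as spark-submit --conf flags.
# Property names are standard Spark settings; the VALUES are hypothetical
# examples, not recommendations for any particular cluster.

DRA_SETTINGS = {
    # Turn on dynamic allocation for the application.
    "spark.dynamicAllocation.enabled": "true",
    # Dynamic allocation relies on the external shuffle service.
    "spark.shuffle.service.enabled": "true",
    # Optional tuning properties (example values only).
    "spark.dynamicAllocation.initialExecutors": "1",
    "spark.dynamicAllocation.minExecutors": "1",
    "spark.dynamicAllocation.maxExecutors": "10",
    "spark.dynamicAllocation.executorIdleTimeout": "60s",
}


def to_properties_lines(settings: dict[str, str]) -> list[str]:
    """Format settings as whitespace-separated 'key value' lines, sorted by key."""
    return [f"{key} {value}" for key, value in sorted(settings.items())]


def to_submit_args(settings: dict[str, str]) -> list[str]:
    """Format settings as a flat list of spark-submit '--conf key=value' arguments."""
    args: list[str] = []
    for key, value in sorted(settings.items()):
        args.extend(["--conf", f"{key}={value}"])
    return args


if __name__ == "__main__":
    print("\n".join(to_properties_lines(DRA_SETTINGS)))
    print(" ".join(to_submit_args(DRA_SETTINGS)))
```

Keeping the settings in one dictionary makes it easy to compare what a single job passes on the command line with what Ambari applies cluster-wide.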

Dynamic resource allocation requires an external shuffle service that runs on each worker node as an auxiliary service of the YARN NodeManager. If you installed your cluster using Ambari, the service is started automatically for use by the Thrift server and general Spark jobs; no further steps are needed.
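To confirm that a node's YARN configuration registers the shuffle service, you can inspect its `yarn-site.xml`. The sketch below parses Hadoop-style `<property>` entries and checks that `yarn.nodemanager.aux-services` lists the service and maps it to Spark's `org.apache.spark.network.yarn.YarnShuffleService` class. The aux-service name `spark_shuffle` is an assumption; some installations use a different name (for example `spark2_shuffle` for Spark 2). The helpers `read_properties` and `shuffle_service_configured` are hypothetical.

```python
# Minimal sketch: check whether a yarn-site.xml registers Spark's external
# shuffle service as a NodeManager auxiliary service.
# ASSUMPTION: the aux-service is named "spark_shuffle" by default; pass a
# different name if your cluster uses one (e.g. "spark2_shuffle").
import xml.etree.ElementTree as ET

SHUFFLE_CLASS = "org.apache.spark.network.yarn.YarnShuffleService"


def read_properties(xml_text: str) -> dict[str, str]:
    """Return Hadoop-style <property><name>/<value> pairs as a dict."""
    root = ET.fromstring(xml_text)
    props: dict[str, str] = {}
    for prop in root.iter("property"):
        name = (prop.findtext("name") or "").strip()
        value = (prop.findtext("value") or "").strip()
        if name:
            props[name] = value
    return props


def shuffle_service_configured(xml_text: str, service: str = "spark_shuffle") -> bool:
    """True if `service` is listed in aux-services and mapped to the shuffle class."""
    props = read_properties(xml_text)
    aux_services = [
        s.strip() for s in props.get("yarn.nodemanager.aux-services", "").split(",")
    ]
    class_key = f"yarn.nodemanager.aux-services.{service}.class"
    return service in aux_services and props.get(class_key) == SHUFFLE_CLASS
```

For example, read the file (commonly `/etc/hadoop/conf/yarn-site.xml` on HDP nodes, though the path may differ on your cluster) and pass its contents to `shuffle_service_configured`.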