Troubleshooting Migration Issues

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: HDFS restart fails after Ambari upgrade on a NameNode HA-enabled IOP cluster.

Cause: The package hadoop-hdfs-zkfc is not supported by this version of the stack-select tool.

Error: The ZKFailoverController restart fails, and the Ambari operation log reports that hadoop-hdfs-zkfc is not a supported package:

  File "/usr/lib/python2.6/site-packages/resource_management/libraries/functions/stack_select.py", line 109, in get_package_name
    package = get_packages(PACKAGE_SCOPE_STACK_SELECT, service_name, component_name)
  File "/usr/lib/python2.6/site-packages/resource_management/libraries/functions/stack_select.py", line 234, in get_packages
    raise Fail("The package {0} is not supported by this version of the stack-select tool.".format(package))
resource_management.core.exceptions.Fail: The package hadoop-hdfs-zkfc is not supported by this version of the stack-select tool.

Resolution:

On each ZKFailoverController host:

Edit /usr/bin/iop-select. Insert the following text after line 33:

"hadoop-hdfs-zkfc": "hadoop-hdfs",

Note: The trailing comma (",") is part of the inserted text.

Run one of the following commands to create the symbolic link.

If your cluster is IOP 4.2.5, run the following command:

ln -sfn /usr/iop/4.2.5.0-0000/hadoop-hdfs /usr/iop/current/hadoop-hdfs-zkfc

If your cluster is IOP 4.2.0, run the following command:

ln -sfn /usr/iop/4.2.0.0/hadoop-hdfs /usr/iop/current/hadoop-hdfs-zkfc

Restart ZKFailoverController from the Ambari web UI.
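
The symbolic-link step above can also be scripted when many hosts need the fix. The following is a minimal Python sketch, not part of the IOP or Ambari tooling, that behaves roughly like ln -sfn. The function name link_zkfc and its two parameters are hypothetical; the paths in the usage comment are the ones from the commands above.

```python
import os


def link_zkfc(version_dir, current_dir):
    """Point <current_dir>/hadoop-hdfs-zkfc at <version_dir>/hadoop-hdfs.

    Rough equivalent of `ln -sfn`: an existing link is replaced.
    Both parameters are hypothetical names chosen for this sketch.
    """
    target = os.path.join(version_dir, "hadoop-hdfs")
    link = os.path.join(current_dir, "hadoop-hdfs-zkfc")
    tmp_link = link + ".tmp"
    # Remove a leftover temporary link from an earlier interrupted run.
    if os.path.lexists(tmp_link):
        os.remove(tmp_link)
    # Create the link under a temporary name, then rename it over any
    # existing link so the final path is replaced in a single step.
    os.symlink(target, tmp_link)
    os.replace(tmp_link, link)
    return link


# Example for IOP 4.2.5 (run as root on each ZKFailoverController host):
# link_zkfc("/usr/iop/4.2.5.0-0000", "/usr/iop/current")
```

Calling the function a second time simply replaces the link again, so it is safe to rerun.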

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: UI does not come up after migration to HDP

Error in logs:

Ambari-server log: Caused by: java.lang.RuntimeException: Trying to create a ServiceComponent not recognized in stack info, clusterName=c1, serviceName=HBASE, componentName=HBASE_REST_SERVER, stackInfo=HDP-2.6

Cause: The HBASE_REST_SERVER component was not deleted before migration, as the pre-migration steps require.

Resolution: Delete the HBASE_REST_SERVER component from the Ambari Server database by running the following statements:

DELETE FROM hostcomponentstate
  WHERE service_name = 'HBASE'
  AND component_name = 'HBASE_REST_SERVER';

DELETE FROM hostcomponentdesiredstate
  WHERE service_name = 'HBASE'
  AND component_name = 'HBASE_REST_SERVER';

DELETE FROM servicecomponent_version
  WHERE component_id IN (SELECT id
    FROM servicecomponentdesiredstate
    WHERE service_name = 'HBASE'
    AND component_name = 'HBASE_REST_SERVER');

DELETE FROM servicecomponentdesiredstate
  WHERE service_name = 'HBASE'
  AND component_name = 'HBASE_REST_SERVER';

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Ambari Metrics does not work after migration

Error in logs:

2017-08-03 22:07:25,524 INFO org.apache.zookeeper.ClientCnxn: Opening socket connection to server eyang-2.openstacklocal/172.26.111.18:61181. Will not attempt to authenticate using SASL (unknown error)
2017-08-03 22:07:25,524 WARN org.apache.zookeeper.ClientCnxn: Session 0x15daa1cd6780004 for server null, unexpected error, closing socket connection and attempting reconnect
java.net.ConnectException: Connection refused
    at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)
    at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)
    at org.apache.zookeeper.ClientCnxnSocketNIO.doTransport(ClientCnxnSocketNIO.java:361)
    at org.apache.zookeeper.ClientCnxn$SendThread.run(ClientCnxn.java:1081)

Cause: Ambari Metrics data is incompatible between the IOP and HDP releases.

Resolution: If AMS runs in embedded mode, remove the transient ZooKeeper data on the collector node:

rm -rf /var/lib/ambari-metrics-collector/hbase-tmp/zookeeper/zookeeper_0/version-2/*

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Spark History server failed to start and displays the following message:

raise ExecutionFailed(err_msg, code, out, err)
resource_management.core.exceptions.ExecutionFailed: Execution of '/usr/bin/kinit -kt /etc/security/keytabs/spark.headless.keytab spark-hey@IBM.COM; ' returned 1. kinit: Key table file '/etc/security/keytabs/spark.headless.keytab' not found while getting initial credentials

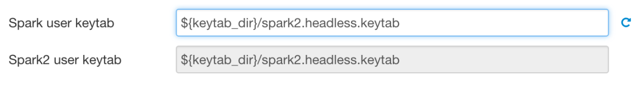

Resolution: If Kerberos is enabled, both SPARK and SPARK2 are installed, and SPARK and SPARK2 use the same service user name, make sure the following properties have the same value:

| Config Type | Property | Value |
|---|---|---|
| spark-defaults | spark.history.kerberos.keytab | /etc/security/keytabs/spark2.headless.keytab |
| spark2-defaults | spark.history.kerberos.keytab | /etc/security/keytabs/spark2.headless.keytab |

This can also be addressed in the Add Service wizard by setting the correct value in the Configure Identities section.

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Oozie start fails after upgrade from IOP 4.2.0.

The Oozie server fails with the following message in the catalina.out log file.

Error in logs:

org.apache.jasper.compiler.JDTCompiler$1 findType
SEVERE: Compilation error
org.eclipse.jdt.internal.compiler.classfmt.ClassFormatException
    at org.eclipse.jdt.internal.compiler.classfmt.ClassFileReader.<init>(ClassFileReader.java:372)
    at org.apache.jasper.compiler.JDTCompiler$1.findType(JDTCompiler.java:206)

Cause: The IOP bigtop-tomcat version is older than the version that Oozie requires in HDP 2.6.4. The Express Upgrade process does not upgrade it to the required minor version because this dependency cannot be installed side by side.

Resolution:

Fix this problem by upgrading bigtop-tomcat to version 6.0.48-1:

yum upgrade bigtop-tomcat

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Ambari upgrade results in db consistency check warning message

After an Ambari server upgrade, the server is likely to log a database consistency check warning when it starts. For example:

2017-08-15 07:46:18,903 INFO - Checking for configs that are not mapped to any service
2017-08-15 07:46:18,964 WARN - You have config(s): wd-hiveserver2-config-version1500918446970,spark-metrics-properties-version1493664394129,spark-javaopts-properties-version1493664394129,spark-env-version1493752183508 that is(are) not mapped (in serviceconfigmapping table) to any service!

This indicates that one or more services were deleted from Ambari and that their orphaned config associations still exist in the database. They do not affect cluster operation and are therefore listed as warnings.

This message can be safely ignored with --skip-database-check, or you can clear these warnings by following the steps in the description of this Apache Jira:

https://issues.apache.org/jira/browse/AMBARI-20875
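
When the warning lists many entries, it can help to pull the orphaned config names out of the log line before cleaning them up. The following Python sketch is illustrative only: the function name orphaned_configs is hypothetical, and the regular expression assumes the warning keeps the exact wording shown in the example above.

```python
import re

# Matches the comma-separated list between the fixed parts of the warning.
ORPHAN_RE = re.compile(r"You have config\(s\): (.+?) that is\(are\) not mapped")


def orphaned_configs(log_line):
    """Return the config names listed in a consistency-check warning line."""
    match = ORPHAN_RE.search(log_line)
    if not match:
        return []
    return [name.strip() for name in match.group(1).split(",") if name.strip()]


# Shortened copy of the warning shown above.
line = (
    "2017-08-15 07:46:18,964 WARN - You have config(s): "
    "wd-hiveserver2-config-version1500918446970,spark-env-version1493752183508 "
    "that is(are) not mapped (in serviceconfigmapping table) to any service!"
)
for name in orphaned_configs(line):
    print(name)
```

Lines that do not contain the warning yield an empty list, so the function can be applied to every line of the server log.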

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Ambari server log shows consistency warning after service delete

After you delete a service and restart the Ambari server, the Ambari server log shows warning messages if any ConfigGroups for that service still exist because Ambari did not delete them.

ERROR [ambari-hearbeat-monitor] HostImpl:1085 - Config inconsistency exists: unknown configType=solr-site

These messages are benign and can be safely ignored. However, the problem of the logs filling up with these messages remains.

Cause: Ambari does not delete the ConfigGroups of a deleted service. The fix for this issue is tracked in https://issues.apache.org/jira/browse/AMBARI-21784.

Resolution: Delete the ConfigGroups that belong to the deleted services by using the Ambari REST API.

Get all ConfigGroups to delete for the service (tag = service-name). This GET call can be made from a browser:

http://<ambari-server-host>:<port>/api/v1/clusters/<cluster-name>/config_groups?ConfigGroup/tag=<service-name>&fields=ConfigGroup/id

Delete the ConfigGroups using the following delete API call (Use the <id> of the ConfigGroup(s) obtained from the previous call):

curl -u <ambari-admin-username>:<ambari-admin-password> -H "X-Requested-By:ambari" -i -X DELETE http://<ambari-server-host>:<port>/api/v1/clusters/<cluster-name>/config_groups/<id>
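
The two calls above can be chained when several ConfigGroups have to be removed. The following Python sketch only covers the step in between: it extracts the ids from the GET response and builds one DELETE URL per id. It assumes the response is JSON with an items array whose entries carry ConfigGroup/id, matching the fields filter in the GET call; that shape, the function names, the host name, and the sample ids are assumptions for illustration.

```python
import json


def config_group_ids(response_text):
    """Return the ConfigGroup ids found in a config_groups GET response.

    Assumes a body of the form {"items": [{"ConfigGroup": {"id": N}}, ...]}.
    """
    body = json.loads(response_text)
    return [item["ConfigGroup"]["id"] for item in body.get("items", [])]


def delete_urls(base_url, cluster_name, ids):
    """Build one config_groups DELETE URL per ConfigGroup id."""
    return [
        "{0}/api/v1/clusters/{1}/config_groups/{2}".format(base_url, cluster_name, i)
        for i in ids
    ]


# Made-up response and host, for illustration only.
sample = '{"items": [{"ConfigGroup": {"id": 2}}, {"ConfigGroup": {"id": 5}}]}'
for url in delete_urls("http://ambari.example.com:8080", "c1", config_group_ids(sample)):
    print(url)
```

Each printed URL can then be passed to the curl DELETE command shown above.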

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Kerberos cluster - Hive service check failed post migration

This is not directly related to either the Ambari or the stack upgrade but is related to the local accounts on the hosts of the cluster.

The Hive service check fails with an impersonation issue if the local ambari-qa user is not a member of an expected group, which by default is “users”. To see the expected groups, view the value of core-site/hadoop.proxyuser.HTTP.groups in the HDFS configurations or query it through Ambari’s REST API.

Error in logs:

The error seen in the STDERR of the service check operation will be as follows:

resource_management.core.exceptions.ExecutionFailed: Execution of '/var/lib/ambari-agent/tmp/templetonSmoke.sh c6402.ambari.apache.org ambari-qa 50111 idtest.ambari-qa.1503419582.05.pig /etc/security/keytabs/smokeuser.headless.keytab true /usr/bin/kinit ambari-qa-c1@EXAMPLE.COM /var/lib/ambari-agent/tmp' returned 1. Templeton Smoke Test (ddl cmd): Failed. : {"error":"java.lang.reflect.UndeclaredThrowableException"}http_code <500>

Looking at the /var/log/hive/hivemetastore.log file, the following error can be seen:

2017-08-22 16:33:03,183 ERROR [pool-7-thread-54]: metastore.RetryingHMSHandler (RetryingHMSHandler.java:invokeInternal(203)) - MetaException(message:User: HTTP/c6402.ambari.apache.org@EXAMPLE.COM is not allowed to impersonate ambari-qa)

Resolution:

To fix the issue, either:

Add the ambari-qa user to an expected group

Example: usermod -a -G users ambari-qa

Example session:

[root@c6402 hive]# groups ambari-qa
ambari-qa : hadoop
[root@c6402 hive]# usermod -a -G users ambari-qa
[root@c6402 hive]# groups ambari-qa
ambari-qa : hadoop users

OR

Add one (or more) of the ambari-qa groups to the HDFS configuration at:

core-site/hadoop.proxyuser.HTTP.groups
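
Both options come down to the same condition: the ambari-qa user must belong to at least one group listed in core-site/hadoop.proxyuser.HTTP.groups. The following Python sketch models only that group check, as a simplification (proxy-user rules in Hadoop also involve a hosts setting, which is ignored here). The function name may_impersonate is hypothetical.

```python
def may_impersonate(user_groups, proxyuser_groups):
    """Return True if the user is in at least one allowed proxy group.

    proxyuser_groups is the comma-separated value of
    core-site/hadoop.proxyuser.HTTP.groups; "*" allows every group.
    """
    allowed = {g.strip() for g in proxyuser_groups.split(",") if g.strip()}
    if "*" in allowed:
        return True
    return bool(allowed & set(user_groups))


# Group memberships taken from the example session above.
print(may_impersonate(["hadoop"], "users"))           # False: before usermod
print(may_impersonate(["hadoop", "users"], "users"))  # True: after usermod
```

Adding hadoop to the property value instead, for example "users,hadoop", makes the first call return True as well, which corresponds to the second option.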

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Only post-upgrade Spark jobs display in Spark History Server.

Cause: IOP's default spark.eventLog.dir has been changed to a custom value that includes the string /iop/apps but does not match the default value (/iop/apps/4.2.0.0/spark/logs/history-server).

An example custom value would be /iop/apps/custom/dir. In this case, that custom value will be changed to /hdp/apps/custom/dir during the stack upgrade.

Resolution:

To see pre-upgrade Spark jobs, change the setting back to the custom value established before upgrading, in this case: /iop/apps/custom/dir
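
The effect on a custom value can be pictured as a plain string substitution. The following Python sketch mimics the behavior described above; it is not the upgrade's actual code, and the function name rewritten_event_log_dir is hypothetical.

```python
def rewritten_event_log_dir(value):
    """Mimic the described rewrite: the /iop/apps prefix becomes /hdp/apps."""
    return value.replace("/iop/apps", "/hdp/apps", 1)


before = "/iop/apps/custom/dir"
after = rewritten_event_log_dir(before)
print(before, "->", after)
```

Because the pre-upgrade event logs remain under the original /iop/apps path, the history server no longer finds them until the setting is changed back.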

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Oozie Hive job failed with NoClassDefFoundError

This issue occurs when Oozie HA is enabled and the Oozie Hive job fails with the following error in the YARN application log:

<<< Invocation of Main class completed <<<
Failing Oozie Launcher, Main class [org.apache.oozie.action.hadoop.HiveMain], main() threw exception, org/apache/hadoop/hive/shims/ShimLoader
java.lang.NoClassDefFoundError: org/apache/hadoop/hive/shims/ShimLoader
    at org.apache.hadoop.hive.conf.HiveConf$ConfVars.<clinit>(HiveConf.java:400)
    at org.apache.hadoop.hive.conf.HiveConf.<clinit>(HiveConf.java:109)
    at sun.misc.Unsafe.ensureClassInitialized(Native Method)

Resolution:

Run the following command as the oozie user:

oozie admin -oozie http://<oozie-server-host>:11000/oozie -sharelibupdate

Rerun the Oozie Hive job to verify.

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Issue: Hive Server Interactive (HSI) fails to start in a kerberized cluster.

HSI is not present in IOP clusters. Adding Hive and/or enabling HSI after migrating a kerberized cluster to HDP may result in HSI failing to start.

Cause: Because the HSI conditional service logic is not present in IOP clusters, the keytabs in YARN/kerberos.json are not created, and therefore the keytabs do not exist on all NodeManagers.

Resolution: Manually regenerate keytabs from Ambari before enabling HSI, so that the keytabs are distributed across all NodeManagers.