Accessing the Web UI of a Completed Spark Application

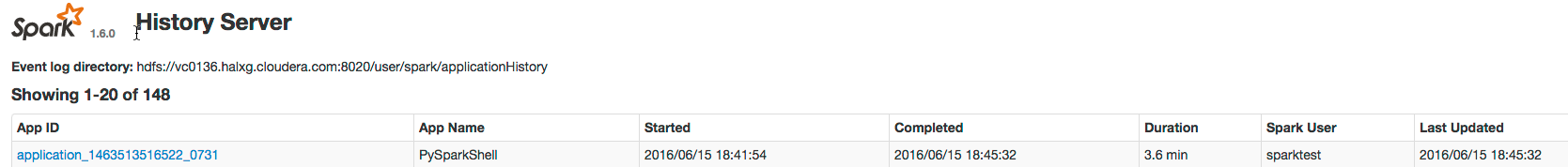

You can access the web application UI of a completed Spark application through the Spark history server.
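The same list of completed applications can also be read programmatically. The following is a minimal sketch, assuming the history server exposes Spark's monitoring REST API under `/api/v1` and is reachable at a base URL such as `http://historyserver.example.com:18080` (a hypothetical host; 18080 is the usual default port). The function names are illustrative and are not part of Spark.

```python
import json
from urllib.request import urlopen


def completed_apps_url(base_url):
    """Build the REST endpoint that lists completed applications."""
    return base_url.rstrip("/") + "/api/v1/applications?status=completed"


def summarize_apps(payload):
    """Turn the JSON response text into a list of (application ID, name) pairs."""
    return [(app["id"], app["name"]) for app in json.loads(payload)]


def list_completed_apps(base_url):
    """Fetch and summarize completed applications from a history server.

    base_url is an assumption about your deployment; adjust host and port.
    """
    with urlopen(completed_apps_url(base_url)) as response:
        return summarize_apps(response.read().decode("utf-8"))
```

Each returned ID corresponds to an entry in the App ID column of the history server page.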

Example Spark Application Web Application

Consider a job consisting of a set of transformations that join data from an accounts dataset with a weblogs dataset to determine the total number of web results for every account, followed by an action that writes the result to HDFS. In this example, the write is performed twice, resulting in two jobs. To view the application UI, in the History Server click the link in the App ID column:

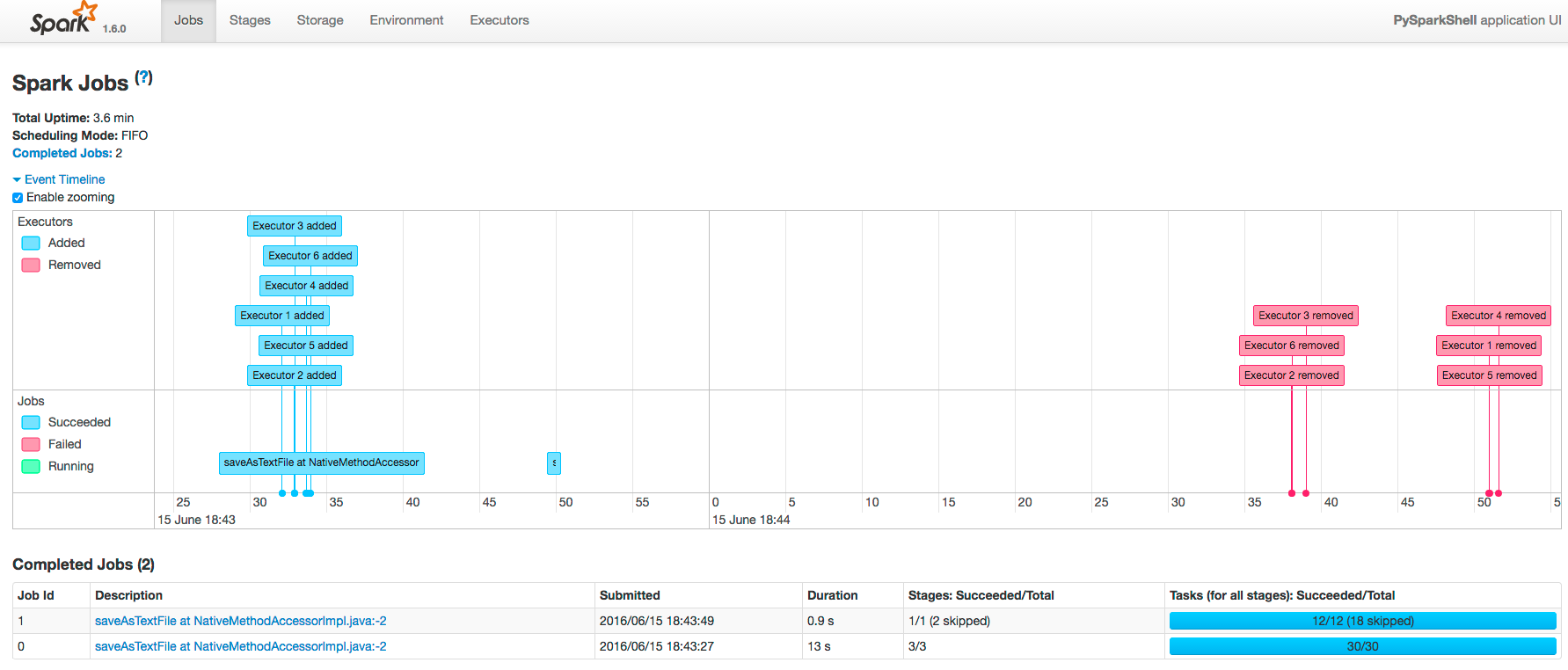
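A job of this shape might look roughly like the following PySpark sketch. It is not the code behind the screenshots: the record layouts (account ID as the first comma-separated field of an accounts record and as the third space-separated field of a weblog line), the function names, and all paths are assumptions made for illustration. The `sc` argument is an existing `SparkContext`, such as the one provided by the `pyspark` shell.

```python
from operator import add


def account_pair(line):
    """Key an accounts record by its first comma-separated field (assumed account ID)."""
    return (line.split(",")[0], line)


def weblog_pair(line):
    """Map a weblog line to (account ID, 1); the ID is assumed to be the third field."""
    return (line.split(" ")[2], 1)


def totals_per_account(sc, accounts_path, weblogs_path):
    """Transformations only: nothing runs until an action is called."""
    accounts = sc.textFile(accounts_path).map(account_pair)
    counts = sc.textFile(weblogs_path).map(weblog_pair).reduceByKey(add)
    # join yields (id, (account_record, count)); keep only the count
    return accounts.join(counts).mapValues(lambda pair: pair[1])


def run_example(sc, accounts_path, weblogs_path, first_output, second_output):
    """Each saveAsTextFile call is an action, so this triggers two jobs."""
    totals = totals_per_account(sc, accounts_path, weblogs_path)
    totals.saveAsTextFile(first_output)   # appears as Job 0
    totals.saveAsTextFile(second_output)  # appears as Job 1
```

Because both writes reuse the same RDD lineage, the second job can reuse shuffle output produced by the first, which is why some of its stages appear as skipped in the UI.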

The following screenshot shows the timeline of the events in the application, including the jobs that were run and the allocation and deallocation of executors. Each job shows the last action, saveAsTextFile, run for the job. The timeline shows that the application acquires executors over the course of running the first job. After the second job finishes, the executors become idle and are returned to the cluster.

You can navigate the timeline in two ways:

- Pan - Press and hold the left mouse button and drag left or right.

- Zoom - Select the Enable zooming checkbox and scroll the mouse wheel up and down.

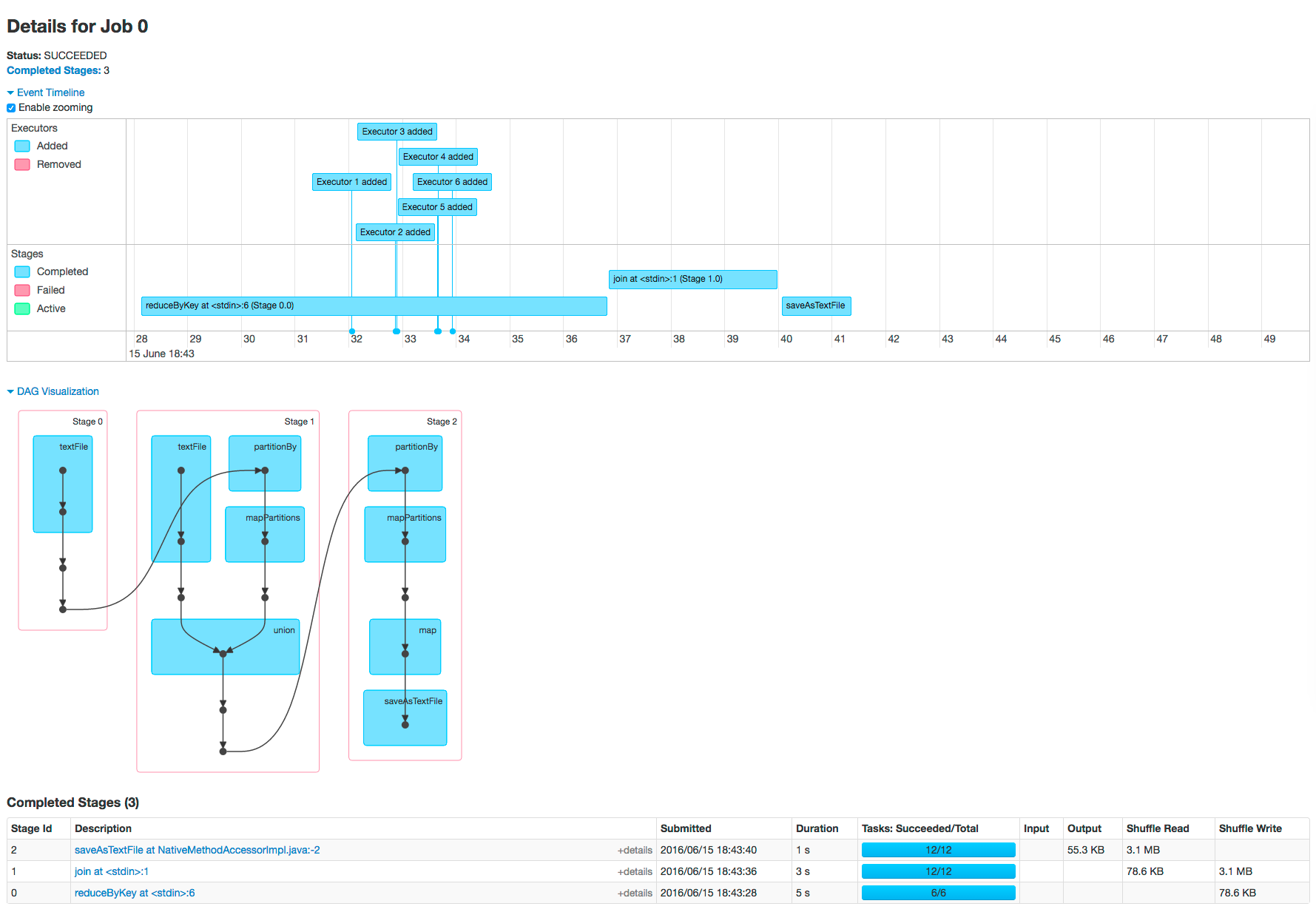

To view the details for Job 0, click the link in the Description column. The following screenshot shows details of each stage in Job 0 and the DAG visualization. Zooming in shows finer detail for the segment from 28 to 42 seconds:

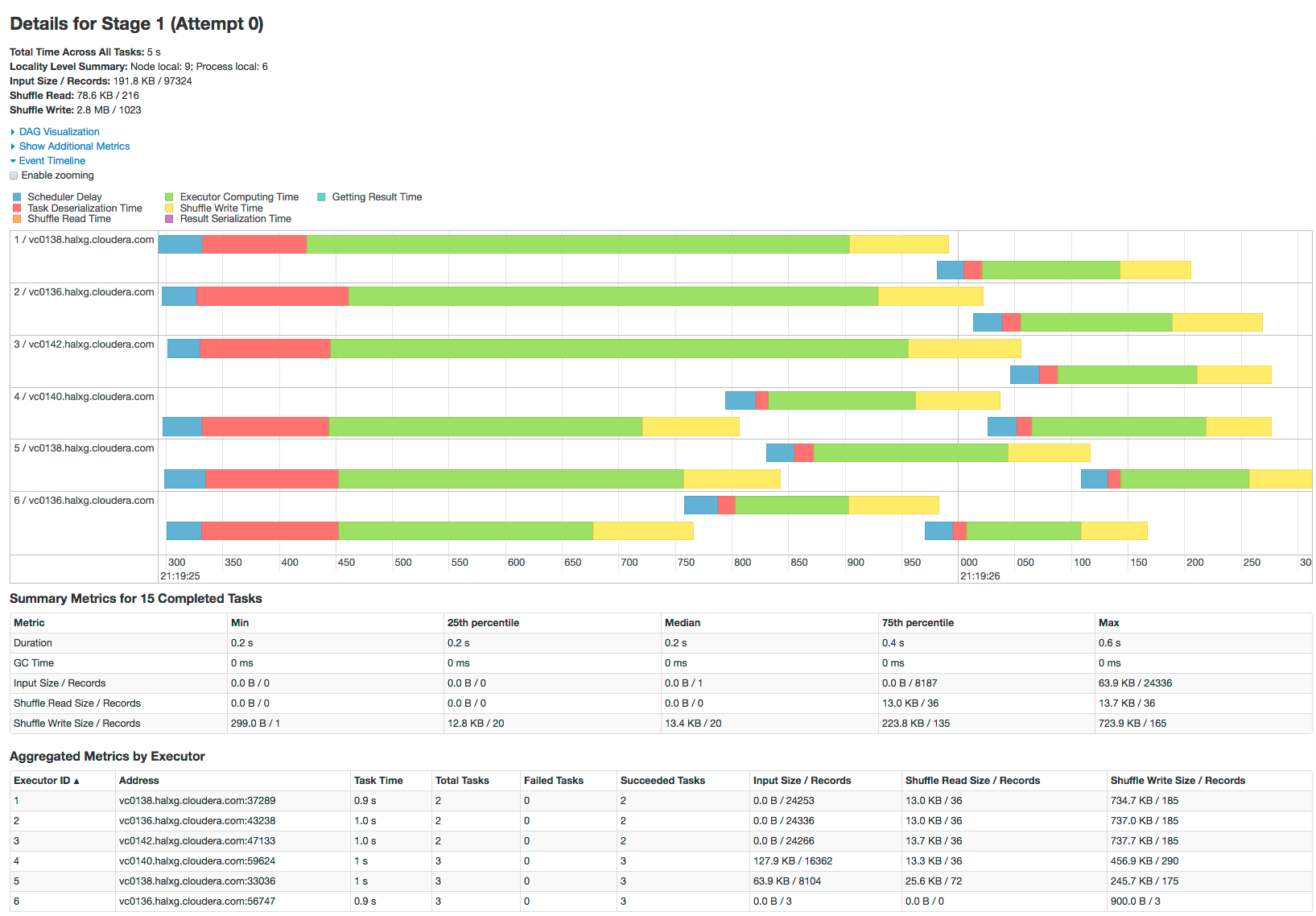

Clicking a stage shows further details and metrics:

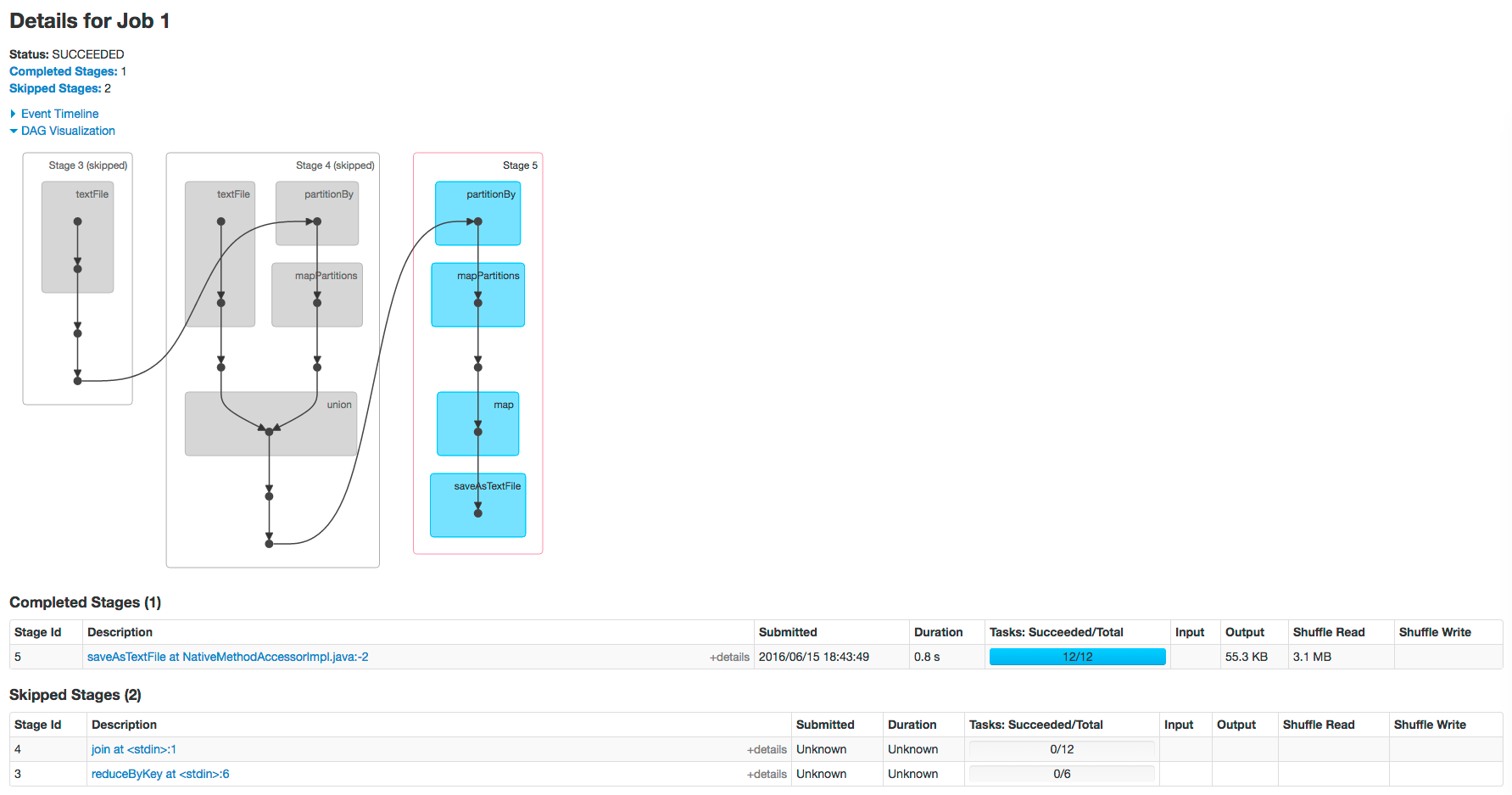

The web page for Job 1 shows how preceding stages are skipped because Spark retains the results from those stages:

Example Spark SQL Web Application

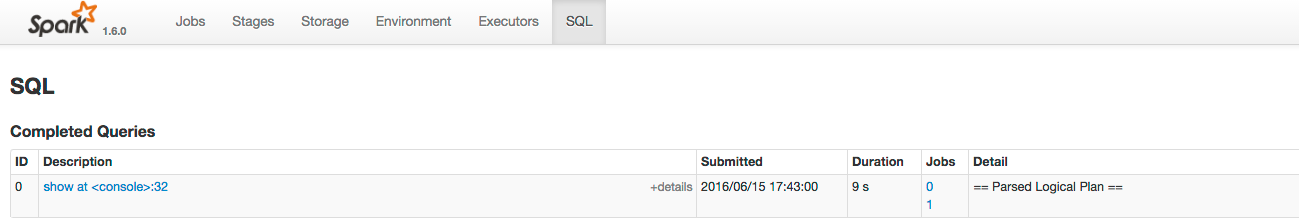

In addition to the screens described above, the web application UI of an application that uses the Spark SQL API also has an SQL tab. Consider an application that loads the contents of two tables into a pair of DataFrames, joins the tables, and then shows the result. After you click the application ID, the SQL tab displays the final action in the query:

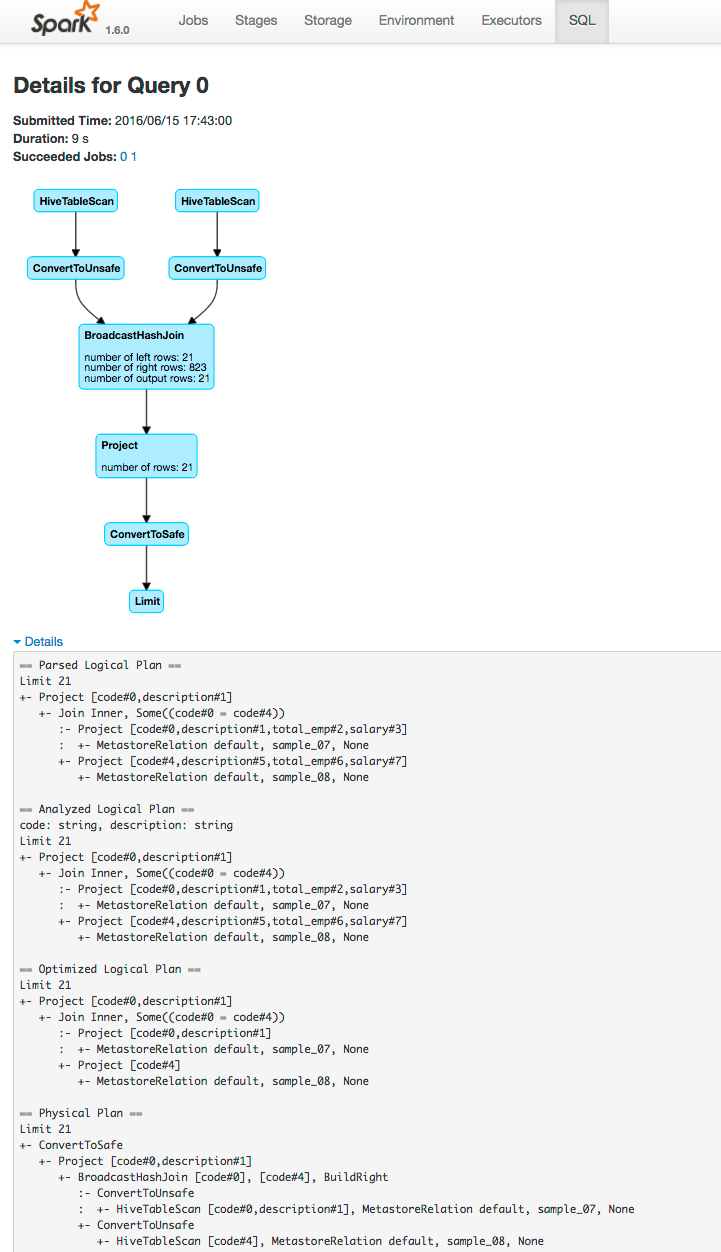
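An application of the kind described above could be sketched as follows, assuming an existing `SparkSession` named `spark` and two tables that share a join column. The table and column names in the usage line are hypothetical.

```python
def show_joined_tables(spark, left_table, right_table, join_column):
    """Load two tables as DataFrames, join them, and display the result."""
    left = spark.table(left_table)
    right = spark.table(right_table)
    joined = left.join(right, join_column)
    # show() is the action that triggers execution; it is the step
    # listed on the SQL tab as the final action in the query.
    joined.show()
    return joined
```

For example, `show_joined_tables(spark, "accounts", "orders", "acct_id")` would produce one entry on the SQL tab for the `show` call.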

If you click the show link, you see the DAG of the job. Clicking the Details link on this page displays the logical query plan:

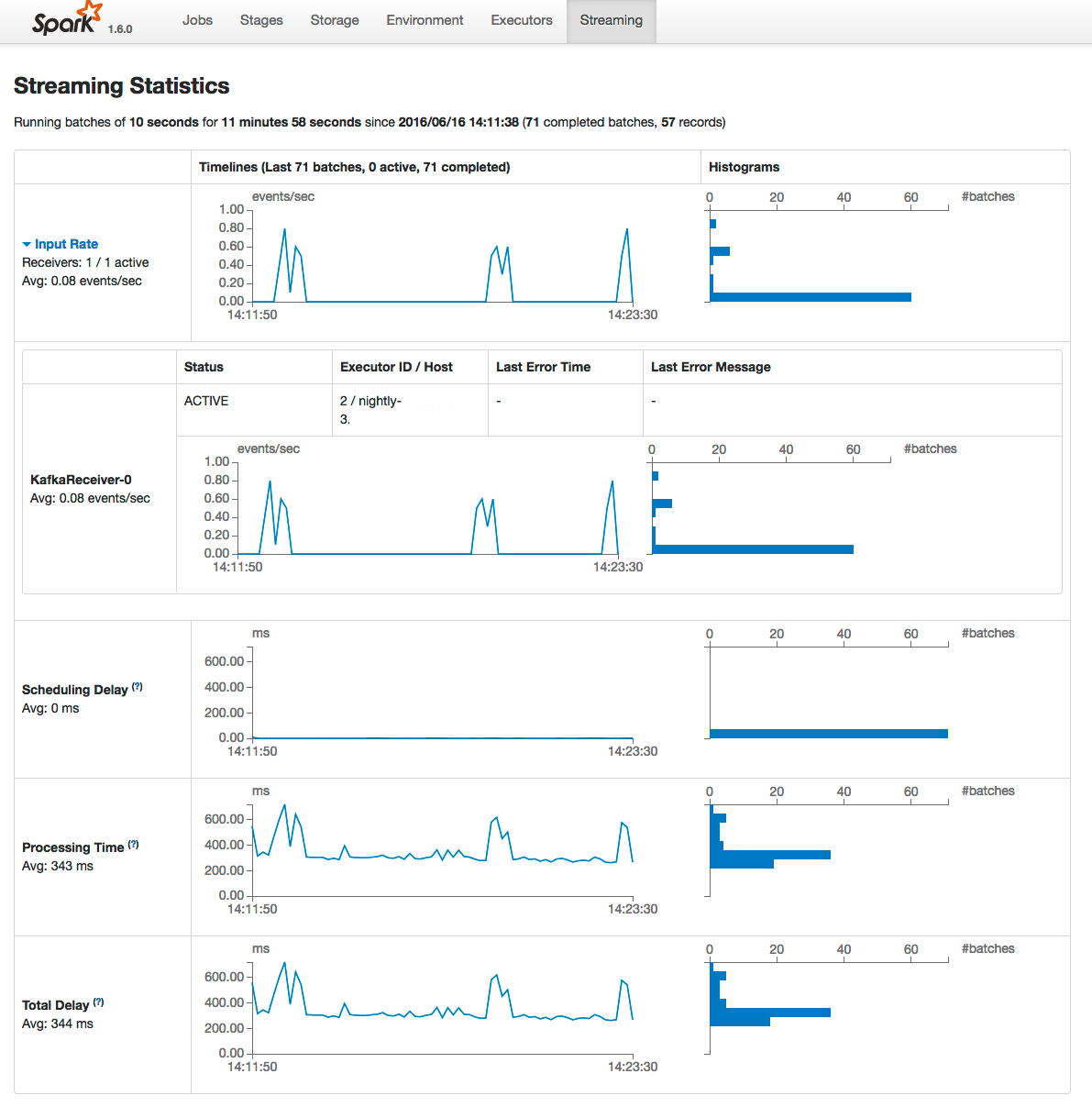

Example Spark Streaming Web Application

The Spark driver web application UI also supports displaying the behavior of streaming applications in the Streaming tab. If you run the example described in the Spark streaming example, and provide three bursts of data, the top of the tab displays a series of visualizations of the statistics summarizing the overall behavior of the streaming application:

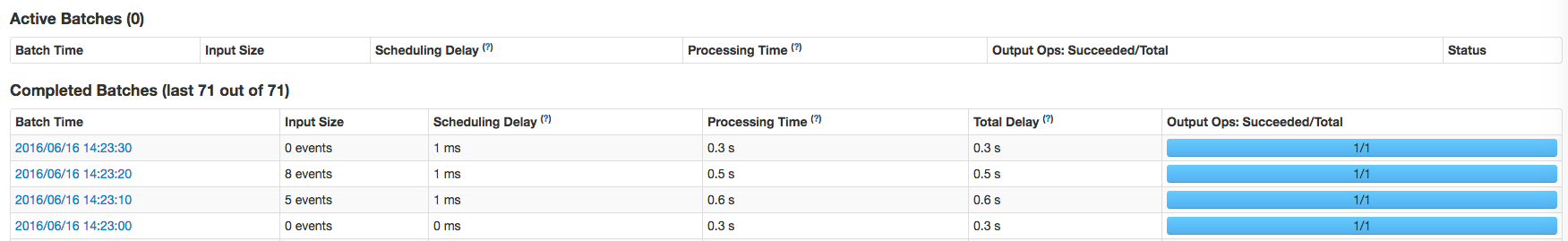

The application has one receiver that processed three bursts of event batches, which can be observed in the events, processing time, and delay graphs. Further down the page you can view details of individual batches:

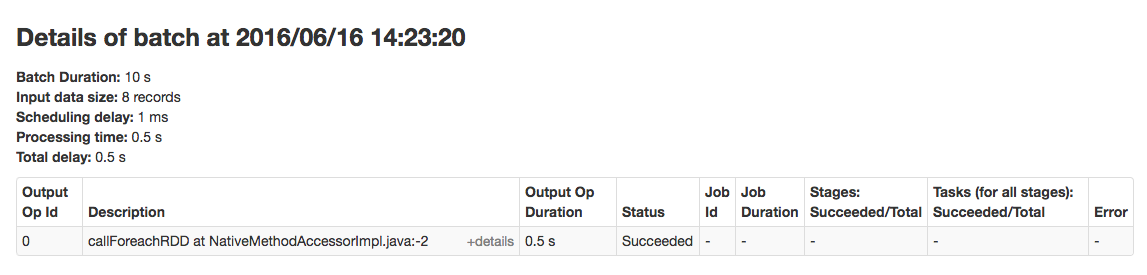

To view the details of a specific batch, click a link in the Batch Time column. For example, clicking the 2016/06/16 14:23:20 link, for a batch with 8 events, provides the following details: