Accessing Ambari Managed HDF software for upgrades

Starting in February 2021, you must have credentials to access and download all binaries provided by Cloudera. This means that you must also ensure that Ambari has the necessary credentials to access the binaries on public repositories.

| Important |
|---|
| HDF 3.5.2 is the last planned release of HDF software. All future releases of dataflow software will be delivered as CFM and CDP Private Cloud Base. Cloudera strongly encourages you to upgrade to HDF 3.5.2 before considering a migration to CFM and CDP Private Cloud Base. Review the CFM Release Notes in the Related information section for information about the latest features available in CFM. |

Ambari 2.7.4.0 and earlier

Ambari 2.7.4.0 and earlier support HDF 3.4.x and earlier. See the Support Matrix in the Related information section below for complete information on version interoperability.

If you are using Ambari 2.7.4.0 or earlier, ensure that Ambari has access to HDF binaries by setting up a local repository. To do this, manually download the repository tarball and make it accessible to Ambari through a local web server. For more information, see Setting up a Local Repository in the Related information section below.
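As a minimal sketch of the "local web server" step, the Python snippet below serves an already-extracted repository directory over HTTP using only the standard library. The function name `serve_repo`, the directory, and the port are hypothetical; a production local repository is usually served by a full web server such as Apache httpd, so treat this as a quick test setup rather than the documented procedure.

```python
import functools
import http.server
import threading


def serve_repo(directory: str, port: int = 8080,
               host: str = "0.0.0.0") -> http.server.ThreadingHTTPServer:
    """Serve `directory` (an extracted repository tarball) over plain HTTP.

    Hypothetical helper: returns the running server so the caller can read
    server.server_address or call server.shutdown() when done.
    """
    # Bind the standard static-file handler to the repository directory.
    handler = functools.partial(http.server.SimpleHTTPRequestHandler,
                                directory=directory)
    # Listening on 0.0.0.0 by default so every cluster node can reach it.
    server = http.server.ThreadingHTTPServer((host, port), handler)
    # Serve from a daemon thread so the caller is not blocked.
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

For example, `serve_repo("/var/www/html/hdf")` (a hypothetical path) would expose the files under that directory at `http://<web-server-host>:8080/`; the base URL you give Ambari would then point at the repository root below that address.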

| Note |
|---|
| If you are upgrading from HDF 3.3.x or HDF 3.4.x to HDF 3.5.x, you must first upgrade Ambari to version 2.7.5. |

Ambari 2.7.5.0

If you are using Ambari 2.7.5.0, you can reference the Cloudera repositories directly, provided that all nodes of your cluster can access the internet.
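To confirm that a node can reach the Cloudera archive before relying on it, a rough check is to open a TCP connection to port 443. This is a minimal sketch under the assumption that a successful connection is an adequate proxy for internet access; it does not test proxies, TLS, or your credentials, and `can_reach` is a hypothetical helper name.

```python
import socket


def can_reach(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the name and tries each returned address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # DNS failures, refused connections, and timeouts are all OSError subclasses.
        return False
```

Running `can_reach("archive.cloudera.com")` on every cluster node should return `True`; if any node returns `False`, the local repository approach is the safer choice.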

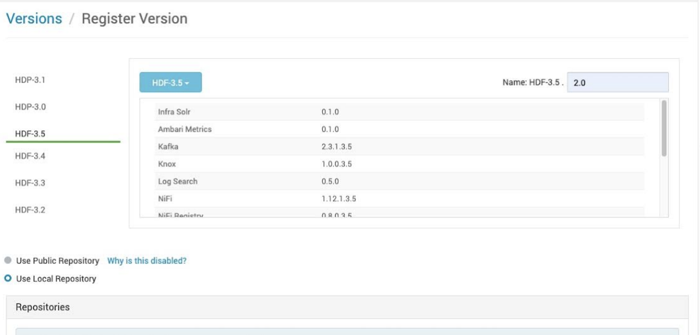

Once you have installed the HDF management pack corresponding to the HDF version to which you want to upgrade to, you need to register your version. See Register your Target Version in the Related information section below for information. When registering the target version, choose Use Local Repository and remove the Operating Systems that do not correspond to the one you are using for your cluster. For example:

You must also add the base URLs for both HDF and HDP-UTILS. For HDF 3.5.2 on CentOS 7 / RHEL 7, the URLs are:

- HDF: https://archive.cloudera.com/p/HDF/centos7/3.x/updates/3.5.2.0
- HDP-UTILS: https://archive.cloudera.com/p/HDP-UTILS/1.1.0.22/repos/centos7

For the exact URLs for other HDF versions and operating systems, see the documentation for your target HDF version.
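To check a base URL before registering it, the sketch below requests `repodata/repomd.xml`, the metadata file a standard yum repository keeps under its base URL, and optionally sends the login and password you received from Cloudera using HTTP Basic authentication. It assumes that the HDF and HDP-UTILS base URLs are standard yum repository roots and that the archive accepts Basic authentication; `repo_is_available` is a hypothetical helper, not part of Ambari.

```python
import base64
import urllib.request
from typing import Optional


def repo_is_available(base_url: str, login: Optional[str] = None,
                      password: Optional[str] = None, timeout: float = 10.0) -> bool:
    """Return True if <base_url>/repodata/repomd.xml answers with HTTP 200."""
    url = base_url.rstrip("/") + "/repodata/repomd.xml"
    request = urllib.request.Request(url)
    if login is not None and password is not None:
        # HTTP Basic auth: base64("login:password") in the Authorization header.
        token = base64.b64encode(f"{login}:{password}".encode("utf-8")).decode("ascii")
        request.add_header("Authorization", f"Basic {token}")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError, HTTPError (401/403/404) and socket timeouts all subclass OSError.
        return False
```

For example, `repo_is_available("https://archive.cloudera.com/p/HDF/centos7/3.x/updates/3.5.2.0", "<login>", "<password>")` should return `True` only when both the URL and the credentials are accepted, under the assumptions above.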

After you have provided the required URLs, add the credentials that you received from Cloudera. To do this, edit the HDF URL and insert `<login>:<password>@` between `https://` and `archive`. Your HDF credentials are copied to the HDP-UTILS URL. For example:

https://foo:123456@archive.cloudera.com/p/HDF/centos7/3.x/updates/3.5.2.0

You can then proceed with the upgrade as described in this guide.
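If you build these URLs in a script, or if your password contains characters such as `@`, `:` or `/`, the login and password should be percent-encoded before they go into the URL; otherwise the `@` that separates the credentials from the host name becomes ambiguous. A minimal sketch using `urllib.parse`, where `with_credentials` is a hypothetical helper and the sketch assumes Ambari and yum decode percent-encoded credentials in a base URL:

```python
from urllib.parse import quote, urlsplit, urlunsplit


def with_credentials(url: str, login: str, password: str) -> str:
    """Insert '<login>:<password>@' between the scheme and the host name of `url`."""
    parts = urlsplit(url)
    if parts.username is not None:
        raise ValueError("URL already contains credentials")
    # safe="" percent-encodes every reserved character, including '@', ':' and '/'.
    userinfo = f"{quote(login, safe='')}:{quote(password, safe='')}"
    netloc = f"{userinfo}@{parts.netloc}"
    return urlunsplit((parts.scheme, netloc, parts.path, parts.query, parts.fragment))
```

With the example values above, `with_credentials("https://archive.cloudera.com/p/HDF/centos7/3.x/updates/3.5.2.0", "foo", "123456")` returns `https://foo:123456@archive.cloudera.com/p/HDF/centos7/3.x/updates/3.5.2.0`, while a password such as `p@ss:w/rd` would be inserted as `p%40ss%3Aw%2Frd`.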

If you do not want to expose your credentials in clear text, use the local repository option described above instead.