Overview of Encryption Mechanisms for an Enterprise Data Hub

Cloudera provides various mechanisms to protect data persisted to disk or other storage media (data-at-rest) and to protect data as it moves among processes in the cluster or over the network (data-in-transit). These mechanisms, such as TLS for data-in-transit, and HDFS encryption and Navigator Encrypt (with the related Navigator Key Trustee Server) for data-at-rest, can all be centrally deployed and managed for the cluster using Cloudera Manager Server. This section provides a high-level introductory overview of some of the underlying concepts.

Protecting Data At-Rest

Protecting data at rest typically means encrypting the data when it is stored on disk and letting authorized users and processes (and only authorized users and processes) decrypt the data when needed for the application or task at hand. With data-at-rest encryption, encryption keys must be distributed and managed, and keys should be rotated or changed on a regular basis to reduce the risk of having keys compromised. These and many other factors complicate the process.

However, encrypting data alone may not be sufficient. For example, administrators and others with sufficient privileges may have access to personally identifiable information (PII) in log files, audit data, or SQL queries. Depending on the specific use case, for example in a hospital or financial environment, the PII may need to be redacted from all such files, so that users with privileges on the logs and queries that might contain sensitive data are nonetheless unable to view that data when they should not.

Cloudera provides complementary approaches to encrypting data at rest, and provides mechanisms to mask PII in log files, audit data, and SQL queries.

Encryption Options Available with Hadoop

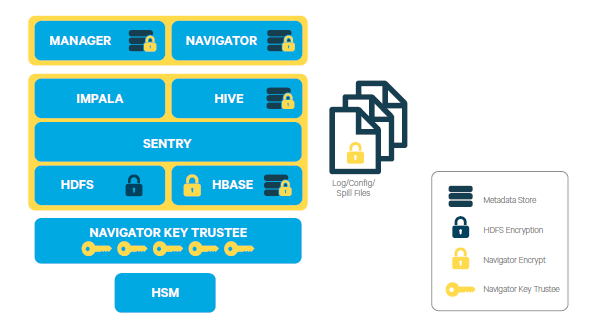

Cloudera provides several mechanisms to ensure that sensitive data is secure. CDH provides transparent HDFS encryption, ensuring that all sensitive data is encrypted before being stored on disk. This capability, together with enterprise-grade encryption key management with Navigator Key Trustee, delivers the necessary protection to meet regulatory compliance for most enterprises. HDFS Encryption together with Navigator Encrypt (available with Cloudera Enterprise) provides transparent encryption for Hadoop, for both data and metadata. These solutions automatically encrypt data while the cluster continues to run as usual, with a very low performance impact. The encryption is also massively scalable: it runs in parallel against all the data nodes, so as the cluster grows, encryption capacity grows with it.

Additionally, this transparent encryption is optimized for Intel processors for high performance. Intel processors support the AES-NI instruction set, which accelerates AES encryption workloads in hardware. Cloudera leverages the latest Intel advances for even faster performance.

- Cloudera Transparent HDFS Encryption to encrypt data stored on HDFS

- Navigator Encrypt for all other data (including metadata, logs, and spill data) associated with Cloudera Manager, Cloudera Navigator, Hive, and HBase

- Navigator Key Trustee for robust, fault-tolerant key management

In addition to applying encryption to the data layer of a Cloudera cluster, encryption can also be applied at the network layer, to encrypt communications among nodes of the cluster. See Configuring Encryption for more information.

Data Redaction with Hadoop

Data redaction is the suppression of sensitive data, such as any personally identifiable information (PII). PII can be used on its own or with other information to identify or locate a single person, or to identify an individual in context. Enabling redaction allows you to transform PII to a pattern that does not contain any identifiable information. For example, you could replace all Social Security numbers (SSN) like 123-45-6789 with an unintelligible pattern like XXX-XX-XXXX, or replace only part of the SSN (XXX-XX-6789).
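The two replacement styles mentioned above can be sketched with a regular expression. This is a minimal illustration only, not the redaction mechanism that CDH implements; the pattern and the function names `redact_full` and `redact_partial` are hypothetical.

```python
import re

# Illustrative pattern for SSN-shaped strings such as 123-45-6789.
# This is an assumption for the example, not a rule shipped with CDH.
SSN_PATTERN = re.compile(r"\b(\d{3})-(\d{2})-(\d{4})\b")


def redact_full(text: str) -> str:
    """Replace every SSN-shaped match with a fixed mask."""
    return SSN_PATTERN.sub("XXX-XX-XXXX", text)


def redact_partial(text: str) -> str:
    """Mask the first five digits and keep the last four."""
    return SSN_PATTERN.sub(lambda m: "XXX-XX-" + m.group(3), text)


if __name__ == "__main__":
    line = "SELECT * FROM patients WHERE ssn = '123-45-6789'"
    print(redact_full(line))
    print(redact_partial(line))
```

Either function leaves text without an SSN-shaped match unchanged, so it can be applied to every log line or query string without first checking for sensitive content.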

Although encryption can be used to protect Hadoop data, system administrators often have access to unencrypted sensitive user data. Even users with appropriate ACLs on the data could have access to logs and queries where sensitive data might have leaked.

Data redaction provides compliance with industry regulations such as PCI and HIPAA, which require that access to PII be restricted to only those users whose jobs require such access. PII or other sensitive data must not be available through any other channels to users like cluster administrators or data analysts. However, if you already have permissions to access PII through queries, the query results will not be redacted. Redaction only applies to any incidental leak of data. Queries and query results must not show up in cleartext in logs, configuration files, UIs, or other unprotected areas.

Scope:

Data redaction in CDH targets sensitive SQL data and log files. Currently, you can enable or disable redaction for the whole cluster with a simple HDFS service-wide configuration change. Redaction is implemented with the assumption that sensitive information resides in the data itself, not the metadata. If you enable redaction for a file, only sensitive data inside the file is redacted. Metadata such as the name of the file or file owner is not redacted.

When data redaction is enabled, the following data is redacted:

- Logs in HDFS and any dependent cluster services. Log redaction is not available in Isilon-based clusters.

- Audit data sent to Cloudera Navigator.

- SQL query strings displayed by Hue, Hive, and Impala.

For more information on enabling this feature, see How to Enable Sensitive Data Redaction.

Cloudera Manager and Passwords

- In the Cloudera Manager Admin Console:

  - In the Processes page for a given role instance, passwords in the linked configuration files are replaced by *******.

  - Advanced Configuration Snippet (Safety Valve) parameters, such as passwords and secret keys, are visible to users (such as admins) who have edit permissions on the parameter, while those with read-only access see redacted data. However, the parameter name is visible to anyone. (Data to be redacted from these snippets is identified by a fixed list of key words: password, key, aws, and secret.)

- On all Cloudera Manager Server and Cloudera Manager Agent hosts:

  - Passwords in the configuration files in /var/run/cloudera-scm-agent/process are replaced by ********.
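Keyword-based masking of this kind can be sketched as follows. The keyword list comes from the text above; everything else is an assumption made for illustration, including the case-insensitive substring match, the `name=value` line format, the mask string, and the function name `redact_config`. It is not Cloudera Manager's implementation.

```python
from typing import Iterable, List

# Keyword list taken from the text above.
SENSITIVE_KEYWORDS = ("password", "key", "aws", "secret")

# Arbitrary mask string chosen for this sketch.
MASK = "********"


def redact_config(lines: Iterable[str]) -> List[str]:
    """Mask the value of any name=value line whose name contains a keyword.

    Matching is a case-insensitive substring test on the parameter name,
    which is an assumption; the parameter name itself stays visible.
    """
    redacted = []
    for line in lines:
        name, sep, _value = line.partition("=")
        if sep and any(word in name.lower() for word in SENSITIVE_KEYWORDS):
            redacted.append(f"{name}={MASK}")
        else:
            redacted.append(line)
    return redacted


if __name__ == "__main__":
    for out in redact_config(["db.host=localhost", "db.password=hunter2"]):
        print(out)
```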

Cloudera Manager Server Database Password Handling

The Cloudera Manager Server database password is stored in cleartext in /etc/cloudera-scm-server/db.properties, as in this example:

    # Auto-generated by scm_prepare_database.sh on Mon Jan 30 05:02:18 PST 2017
    #
    # For information describing how to configure the Cloudera Manager Server
    # to connect to databases, see the "Cloudera Manager Installation Guide."
    #
    com.cloudera.cmf.db.type=mysql
    com.cloudera.cmf.db.host=localhost
    com.cloudera.cmf.db.name=cm
    com.cloudera.cmf.db.user=cm
    com.cloudera.cmf.db.setupType=EXTERNAL
    com.cloudera.cmf.db.password=password

However, as of Cloudera Manager 5.10 (and higher), rather than using a cleartext password you can use a script or other executable that uses stdout to return a password for use by the system.

During installation of the database, you can pass the script name to the scm_prepare_database.sh script with the --scm-password-script parameter. See Setting up the Cloudera Manager Server Database and scm_prepare_database.sh Syntax for details.

You can also replace an existing cleartext password in /etc/cloudera-scm-server/db.properties by replacing the com.cloudera.cmf.db.password setting with com.cloudera.cmf.db.password_script and setting the name of the script or executable:

| Cleartext Password (5.9 and prior) | Script (5.10 and higher) |

|---|---|

| com.cloudera.cmf.db.password=password | com.cloudera.cmf.db.password_script=script_name_here |

At runtime, if /etc/cloudera-scm-server/db.properties does not include the com.cloudera.cmf.db.password_script setting, the system uses the value of com.cloudera.cmf.db.password.
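The lookup order described above can be modeled in a few lines. This is a sketch of the described behavior, not Cloudera Manager's code: the function names `run_script` and `resolve_db_password` are hypothetical, and stripping the trailing newline from the script's output is an assumption.

```python
import subprocess
from typing import Callable, Mapping

PASSWORD_KEY = "com.cloudera.cmf.db.password"
SCRIPT_KEY = "com.cloudera.cmf.db.password_script"


def run_script(path: str) -> str:
    """Run an executable and return what it wrote to stdout.

    Removing the trailing newline is an assumption made for this sketch.
    """
    result = subprocess.run([path], capture_output=True, text=True, check=True)
    return result.stdout.rstrip("\n")


def resolve_db_password(
    props: Mapping[str, str],
    runner: Callable[[str], str] = run_script,
) -> str:
    """Prefer the password script if one is configured, else the cleartext value."""
    script = props.get(SCRIPT_KEY)
    if script:
        return runner(script)
    return props[PASSWORD_KEY]


if __name__ == "__main__":
    # Cleartext fallback: no script is configured, so the stored value is used.
    print(resolve_db_password({PASSWORD_KEY: "password"}))
```

Passing the runner as a parameter keeps the lookup logic testable without creating a real executable on disk.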

Protecting Data In-Transit

- HDFS Transparent Encryption: Data encrypted using HDFS Transparent Encryption is protected end-to-end. Any data written to or read from HDFS can be encrypted or decrypted only by the client. HDFS does not have access to the unencrypted data or the encryption keys. This supports both at-rest encryption and in-transit encryption.

- Data Transfer: The first channel is data transfer, including the reading and writing of data blocks to HDFS. Hadoop uses a SASL-enabled wrapper around its native direct TCP/IP-based transport, called DataTransferProtocol, to secure the I/O streams within a DIGEST-MD5 envelope. (For steps, see Configuring Encrypted HDFS Data Transport.) This procedure also employs secured HadoopRPC (see Remote Procedure Calls) for the key exchange. The HttpFS REST interface, however, does not provide secure communication between the client and HDFS, only secured authentication using SPNEGO.

For the transfer of data between DataNodes during the shuffle phase of a MapReduce job (that is, moving intermediate results between the Map and Reduce portions of the job), Hadoop secures the communication channel with HTTP Secure (HTTPS) using Transport Layer Security (TLS). See Encrypted Shuffle and Encrypted Web UIs.

- Remote Procedure Calls: The second channel is remote procedure calls (RPC) to the various systems and frameworks within a Hadoop cluster. As with data transfer, Hadoop has its own native protocol for RPC, called HadoopRPC, which is used for Hadoop API client communication, intra-Hadoop services communication, as well as monitoring, heartbeats, and other non-data, non-user activity. HadoopRPC is SASL-enabled for secured transport and defaults to Kerberos or DIGEST-MD5, depending on the type of communication and security settings. For steps, see Configuring Encrypted HDFS Data Transport.

- User Interfaces: The third channel includes the various web-based user interfaces within a Hadoop cluster. For secured transport, the solution is straightforward; these interfaces employ HTTPS.
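In a cluster managed by Cloudera Manager, these channels are configured through the Admin Console rather than by hand. For orientation only, the sketch below renders the Apache Hadoop properties commonly associated with the RPC and data transfer channels (`hadoop.rpc.protection` in core-site.xml and `dfs.encrypt.data.transfer` in hdfs-site.xml) as Hadoop-style configuration XML. The helper name `render_hadoop_config` is hypothetical, and the values shown are an assumption about a typical encrypted setup, not a recommendation from this guide.

```python
import xml.etree.ElementTree as ET
from typing import Mapping


def render_hadoop_config(props: Mapping[str, str]) -> str:
    """Render name/value pairs as a Hadoop <configuration> XML document."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


# RPC channel: "privacy" requests encryption in addition to
# authentication and integrity checking.
core_site = {"hadoop.rpc.protection": "privacy"}

# Data transfer channel: encrypt block data moving to and from DataNodes.
hdfs_site = {"dfs.encrypt.data.transfer": "true"}

if __name__ == "__main__":
    print(render_hadoop_config(core_site))
    print(render_hadoop_config(hdfs_site))
```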

SSL/TLS Certificates Overview

| Type | Usage Note |

|---|---|

| Public CA-signed certificates | Recommended. Using certificates signed by a trusted public CA simplifies deployment because the default Java client already trusts most public CAs. Obtain certificates from one of the trusted well-known (public) CAs, such as Symantec and Comodo, as detailed in Generate TLS Certificates. |

| Internal CA-signed certificates | Obtain certificates from your organization's internal CA if your organization has its own. Using an internal CA can reduce costs (although cluster configuration may require establishing the trust chain for certificates signed by an internal CA, depending on your IT infrastructure). See How to Configure TLS Encryption for Cloudera Manager for information about establishing trust as part of configuring a Cloudera Manager cluster. |

| Self-signed certificates | Not recommended for production deployments. Using self-signed certificates requires configuring each client to trust the specific certificate (in addition to generating and distributing the certificates). However, self-signed certificates are fine for non-production (testing or proof-of-concept) deployments. See How to Use Self-Signed Certificates for TLS for details. |

For more information on setting up SSL/TLS certificates, see TLS/SSL Overview.
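The trust difference between the certificate types can be illustrated from the client side. The sketch below uses Python's standard `ssl` module as an analogy to the Java truststore behavior described in the table; it is not how CDH clients are configured. The function name `make_client_context` and the file path in the usage comment are hypothetical.

```python
import ssl
from typing import Optional


def make_client_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a TLS client context that verifies the server certificate.

    With no ca_file, the platform's default CA bundle is trusted, which is
    the case that public CA-signed certificates rely on. With ca_file, only
    the CAs or certificates in that PEM file are trusted, which is the extra
    step that internal CA-signed and self-signed certificates require.
    """
    return ssl.create_default_context(cafile=ca_file)


if __name__ == "__main__":
    context = make_client_context()
    # Certificate verification and hostname checking are on by default.
    print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
    # For an internal CA (hypothetical path):
    # context = make_client_context("/path/to/internal-ca.pem")
```

With a self-signed certificate, the PEM file passed as `ca_file` would have to be distributed to every client, which is why the table does not recommend that option for production.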

TLS/SSL Encryption for CDH Components

Cloudera recommends securing a cluster using Kerberos authentication before enabling encryption such as SSL on a cluster. If you enable SSL for a cluster that does not already have Kerberos authentication configured, a warning will be displayed.

- HDFS, MapReduce, and YARN daemons act as both SSL servers and clients.

- HBase daemons act as SSL servers only.

- Oozie daemons act as SSL servers only.

- Hue acts as an SSL client to all of the above.

For information on setting up SSL/TLS for CDH services, see Configuring TLS/SSL Encryption for CDH Services.

Data Protection within Hadoop Projects

The table below lists the various encryption capabilities that can be leveraged by CDH components and Cloudera Manager.

| Project | Encryption for Data-in-Transit | Encryption for Data-at-Rest (HDFS Encryption + Navigator Encrypt + Navigator Key Trustee) |

|---|---|---|

| HDFS | SASL (RPC), SASL (DataTransferProtocol) | Yes |

| MapReduce | SASL (RPC), HTTPS (encrypted shuffle) | Yes |

| YARN | SASL (RPC) | Yes |

| Accumulo | Partial - Only for RPCs and Web UI (Not directly configurable in Cloudera Manager) | Yes |

| Flume | TLS (Avro RPC) | Yes |

| HBase | SASL - For web interfaces, inter-component replication, the HBase shell and the REST, Thrift 1 and Thrift 2 interfaces | Yes |

| HiveServer2 | SASL (Thrift), SASL (JDBC), TLS (JDBC, ODBC) | Yes |

| Hue | TLS | Yes |

| Impala | TLS or SASL between impalad and clients, but not between daemons | |

| Oozie | TLS | Yes |

| Pig | N/A | Yes |

| Search | TLS | Yes |

| Sentry | SASL (RPC) | Yes |

| Spark | None | Yes |

| Sqoop | Partial - Depends on the RDBMS database driver in use | Yes |

| Sqoop2 | Partial - You can encrypt the JDBC connection depending on the RDBMS database driver | Yes |

| ZooKeeper | SASL (RPC) | No |

| Cloudera Manager | TLS - Does not include monitoring | Yes |

| Cloudera Navigator | TLS - Also see Cloudera Manager | Yes |

| Backup and Disaster Recovery | TLS - Also see Cloudera Manager | Yes |