Update from 1.4.1 or 1.5.0 to 1.5.1 (ECS)

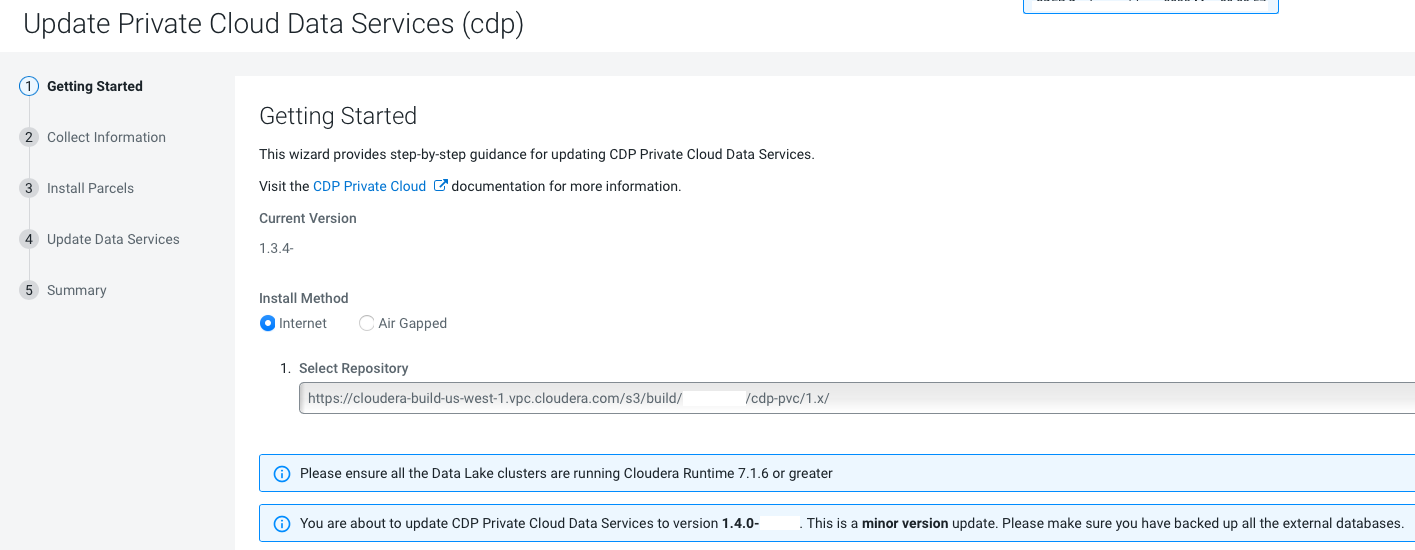

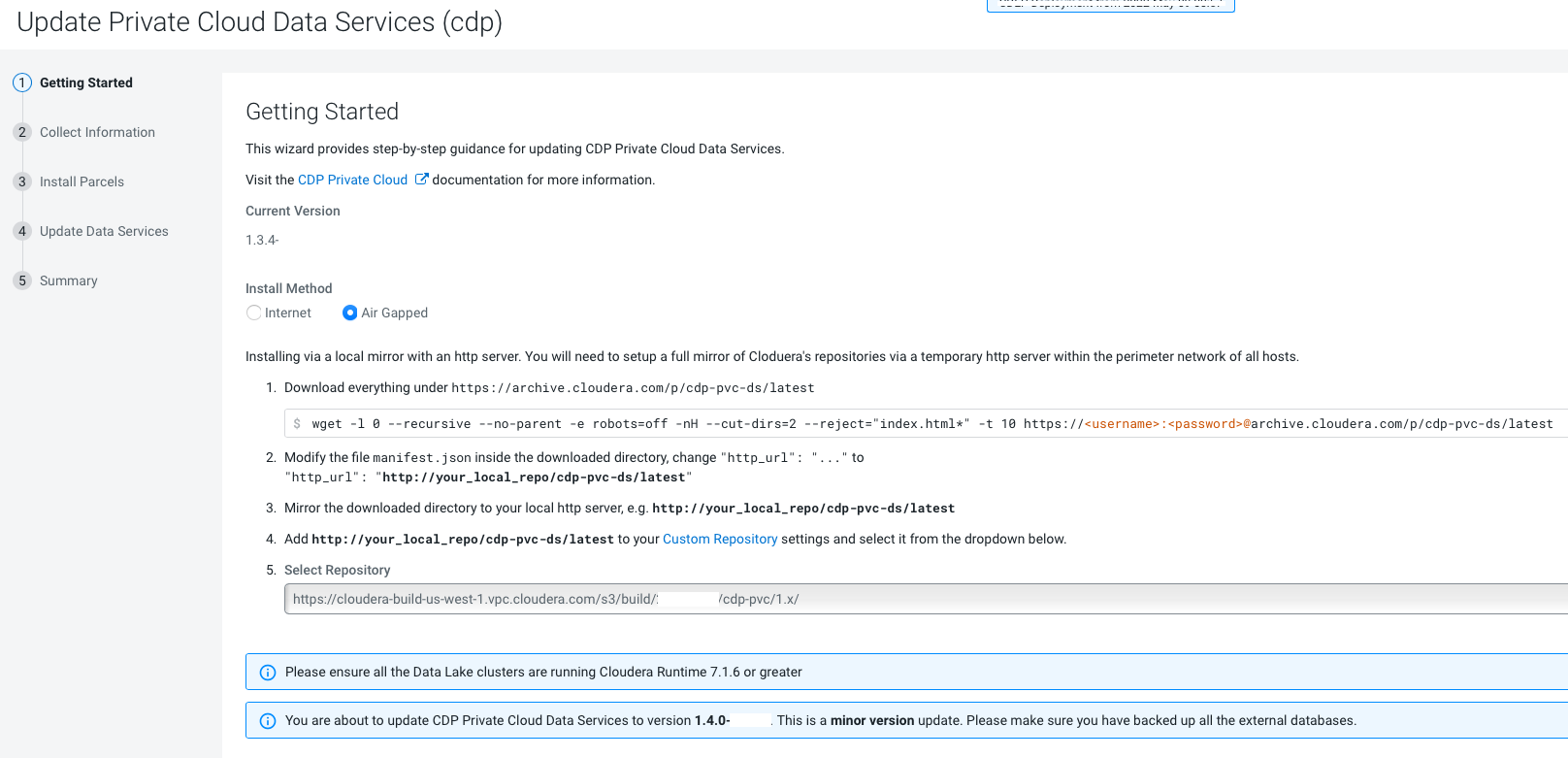

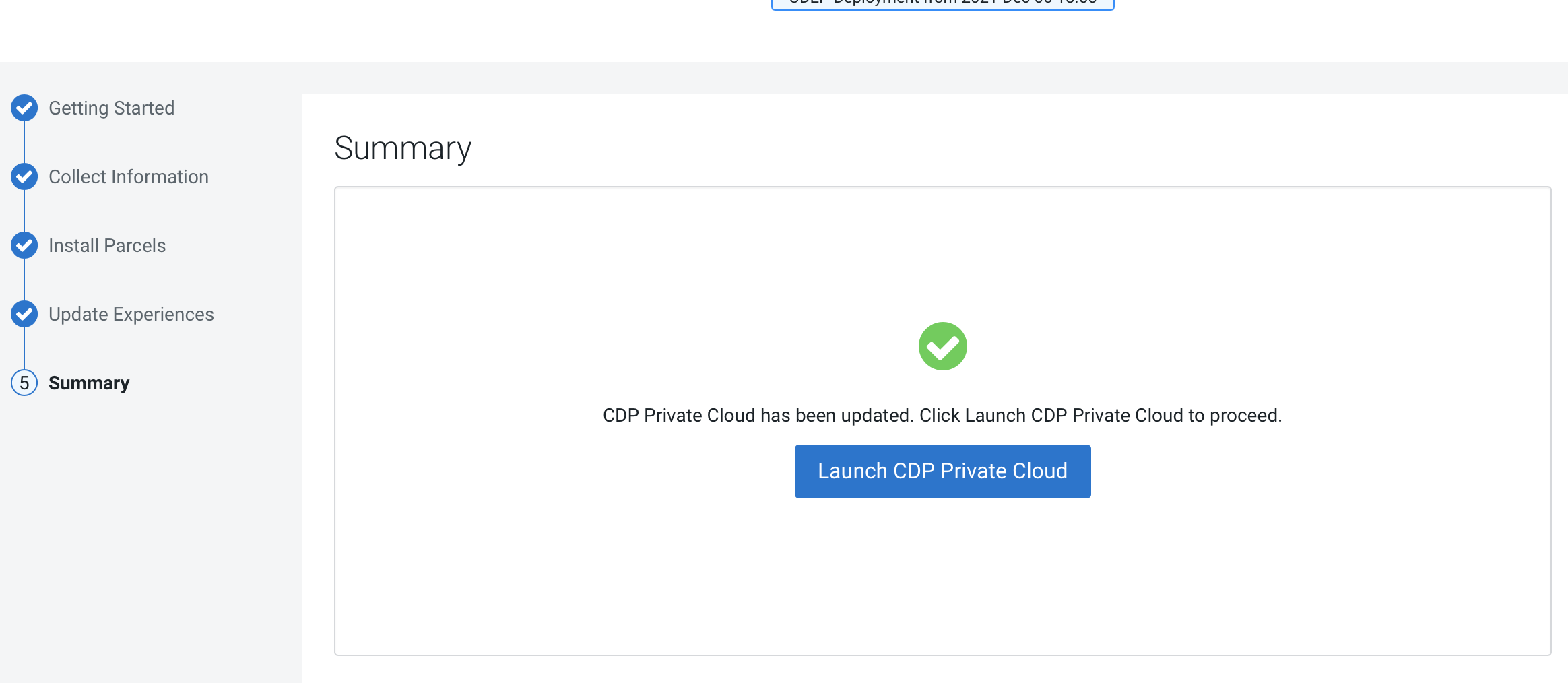

You can update your existing CDP Private Cloud Data Services 1.4.1 or 1.5.0 to 1.5.1 without performing an uninstall.

- Review the Software Support Matrix for ECS.

- As of CDP Private Cloud Data Services 1.5.1, external metadata databases are no longer supported. If you are upgrading from CDP Private Cloud Data Services 1.4.1 or 1.5.0 to 1.5.1, and you were previously using an external Control Plane database, you must run the following psql commands to create the required databases. You should also ensure that the three new databases are owned by the common database users known by the control plane.

      CREATE DATABASE "db-dss-app";
      CREATE DATABASE "db-cadence";
      CREATE DATABASE "db-cadence-visibility";
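As a minimal sketch of the database step above, the helper below prints the three `CREATE DATABASE` statements with an owner attached, so they can be reviewed before being piped to psql. The function name `print_cp_db_sql` and the role name passed to it are hypothetical; substitute the database user your control plane actually uses.

```shell
# Print the SQL for the three new Control Plane databases, each owned by
# the role given as the first argument. The role name is an assumption
# supplied by the caller; nothing is executed against a database here.
print_cp_db_sql() {
  for db in db-dss-app db-cadence db-cadence-visibility; do
    # Hyphenated identifiers must be double-quoted in PostgreSQL.
    printf 'CREATE DATABASE "%s" OWNER "%s";\n' "$db" "$1"
  done
}
```

For example, `print_cp_db_sql <db user>` prints the statements to the terminal; once they look right, the same output can be piped to a psql session connected to the external database server.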

- If you are upgrading from CDP Private Cloud Data Services 1.4.1 or 1.5.0 to 1.5.1, and you were previously using an external Control Plane database, you must regenerate the DB certificate with SAN before upgrading to CDP Private Cloud Data Services 1.5.1. For more information, see Pre-upgrade - Regenerate external DB cert as SAN (if applicable).

- If the upgrade stalls, do the following:

  - Check the status of all pods by running the following command on the ECS server node:

        kubectl get pods --all-namespaces

  - If any pods are stuck in the "Terminating" state, force terminate each one using the following command:

        kubectl delete pods <NAME OF THE POD> -n <NAMESPACE> --grace-period=0 --force

    If the upgrade still does not resume, continue with the remaining steps.

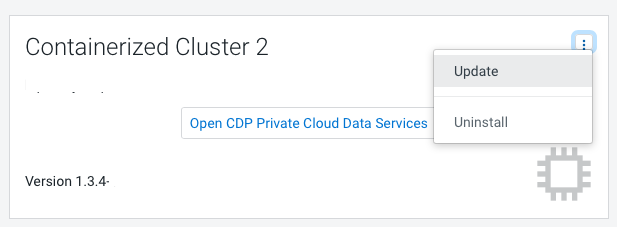

  - In the Cloudera Manager Admin Console, go to the ECS service and open the Longhorn dashboard.

  - Check the "In Progress" section of the dashboard to see whether any volumes are stuck in the attaching or detaching state. If a volume is in that state, reboot its host.
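The pod check for a stalled upgrade can be sketched as a small filter. It reads the output of `kubectl get pods --all-namespaces` on standard input and prints one force-delete command for each pod whose STATUS column is "Terminating". The function name `print_force_delete_cmds` is hypothetical, and the sketch assumes the default column layout (NAMESPACE, NAME, READY, STATUS, ...). It only prints commands and deletes nothing.

```shell
# Read `kubectl get pods --all-namespaces` output on stdin and print a
# force-delete command for every pod in the Terminating state.
# Assumes the default columns: NAMESPACE NAME READY STATUS RESTARTS AGE.
print_force_delete_cmds() {
  awk 'NR > 1 && $4 == "Terminating" {
    # $1 is the namespace and $2 is the pod name; the header row is skipped.
    printf "kubectl delete pods %s -n %s --grace-period=0 --force\n", $2, $1
  }'
}
```

On the ECS server node, this could be used as `kubectl get pods --all-namespaces | print_force_delete_cmds`, reviewing the printed commands before running any of them.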


- You may see the following error message during the Upgrade Cluster > Reapplying all settings > kubectl-patch step:

      kubectl rollout status deployment/rke2-ingress-nginx-controller -n kube-system --timeout=5m
      error: timed out waiting for the condition

  If you see this error, do the following:

  - Check whether all the Kubernetes nodes are ready for scheduling. Run the following command from the ECS Server node:

        kubectl get nodes

    You will see output similar to the following:

        NAME      STATUS                     ROLES                       AGE    VERSION
        <node1>   Ready,SchedulingDisabled   control-plane,etcd,master   103m   v1.21.11+rke2r1
        <node2>   Ready                      <none>                      101m   v1.21.11+rke2r1
        <node3>   Ready                      <none>                      101m   v1.21.11+rke2r1
        <node4>   Ready                      <none>                      101m   v1.21.11+rke2r1

  - Run the following command from the ECS Server node for the node showing a status of SchedulingDisabled:

        kubectl uncordon <node1>

    You will see output similar to the following:

        node/<node1> uncordoned

  - Scale down and scale up the rke2-ingress-nginx-controller pod by running the following command on the ECS Server node:

        kubectl delete pod rke2-ingress-nginx-controller-<pod number> -n kube-system

  - Resume the upgrade.
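The node check in the steps above can be sketched in the same way. The filter below reads `kubectl get nodes` output on standard input and prints a `kubectl uncordon` command for each node whose STATUS includes SchedulingDisabled. The function name `print_uncordon_cmds` is hypothetical, and the sketch assumes the default column layout (NAME, STATUS, ROLES, AGE, VERSION). It prints commands only and changes nothing on the cluster.

```shell
# Read `kubectl get nodes` output on stdin and print an uncordon command
# for every node whose STATUS column contains SchedulingDisabled.
# Assumes the default columns: NAME STATUS ROLES AGE VERSION.
print_uncordon_cmds() {
  awk 'NR > 1 && $2 ~ /SchedulingDisabled/ {
    # $1 is the node name; the header row is skipped.
    print "kubectl uncordon " $1
  }'
}
```

On the ECS Server node, this could be run as `kubectl get nodes | print_uncordon_cmds`. If nothing is printed, no node is cordoned and the timeout likely has another cause.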


- After the upgrade, the Cloudera Manager admin role may be missing the Host Administrators privilege in an upgraded cluster. The cluster administrator should run the following command to manually add this privilege to the role:

      ipa role-add-privilege <cmadminrole> --privileges="Host Administrators"

- If you specified a custom certificate, select the ECS cluster in Cloudera Manager, then select Actions > Update Ingress Controller. This command copies the cert.pem and key.pem files from the Cloudera Manager server host to the ECS Management Console host.
