HBase High Availability

To help you achieve redundancy for high availability in a production environment, Apache HBase supports deployment of multiple HBase Masters in a cluster. If you are working in a Hortonworks Data Platform (HDP) 2.2 or later environment, Apache Ambari enables simple setup of multiple HBase Masters.

During the Apache HBase service installation and depending on your component assignment, Ambari installs and configures one HBase Master component and multiple RegionServer components. To configure high availability for the HBase service, you can run two or more HBase Master components. HBase uses ZooKeeper to coordinate the active Master in a cluster running two or more HBase Masters. This means that when the primary HBase Master fails, clients are automatically routed to the secondary Master.

Set Up Multiple HBase Masters Through Ambari

Hortonworks recommends that you use Ambari to configure multiple HBase Masters. Complete the following tasks:

Add a Secondary HBase Master to a New Cluster

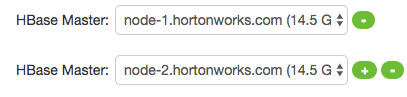

When installing HBase, click the “+” sign that is displayed on the right side of the name of the existing HBase Master to add and select a node on which to deploy a secondary HBase Master:

Add a New HBase Master to an Existing Cluster

Log in to the Ambari management interface as a cluster administrator.

In Ambari Web, browse to Services > HBase.

In Service Actions, click + Add HBase Master.

Choose the host on which to install the additional HBase Master; then click Confirm Add.

Ambari installs the new HBase Master and reconfigures HBase to manage multiple Master instances.

Set Up Multiple HBase Masters Manually

Before you can configure multiple HBase Masters manually, you must configure the first node (node-1) on your cluster by following the instructions in the Installing, Configuring, and Deploying a Cluster section of the Apache Ambari Installation Guide. Then, complete the following tasks:

Configure Passwordless SSH Access

Prepare node-1

Prepare node-2 and node-3

Start and test your HBase Cluster

Configure Passwordless SSH Access

The first node on the cluster (node-1) must be able to log in to the other nodes on the cluster and then back to itself in order to start the daemons. You can accomplish this by using the same user name on all hosts and by using passwordless Secure Shell (SSH) login:

On node-1, stop the HBase service.

On node-1, log in as an HBase user and generate an SSH key pair:

$ ssh-keygen -t rsa

The system prints the location of the key pair to standard output. The default name of the public key is id_rsa.pub.

Create a directory to hold the shared keys on the other nodes:

On node-2, log in as an HBase user and create an .ssh/ directory in your home directory.

On node-3, repeat the same procedure.

Use Secure Copy (scp) or any other standard secure means to copy the public key from node-1 to the other two nodes.

On each node in the cluster, create a new file called .ssh/authorized_keys (if it does not already exist) and append the contents of the id_rsa.pub file to it:

$ cat id_rsa.pub >> ~/.ssh/authorized_keys

Ensure that you do not overwrite your existing .ssh/authorized_keys files by concatenating the new key onto the existing file using the >> operator rather than the > operator.

Use Secure Shell (SSH) from node-1 to either of the other nodes using the same user name.

You should not be prompted for a password.

On node-2, repeat Step 5, because it runs as a backup Master.
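The key-append step above can be made safe to repeat. The following is a minimal sketch, not part of the original procedure: the function name append_key_once is hypothetical, and it assumes the public key file contains a single line, as id_rsa.pub normally does. It appends the key only when an identical line is not already present, and it tightens permissions because sshd commonly rejects key files that are writable by other users.

```shell
#!/bin/sh
# Hypothetical helper: append a public key to an authorized_keys file
# only if the same line is not already there.
# Usage: append_key_once <public-key-file> <authorized_keys-file>
append_key_once() {
    pubkey_file=$1
    auth_file=$2
    auth_dir=$(dirname "$auth_file")

    # Create the .ssh directory and the file if they do not exist yet.
    mkdir -p "$auth_dir"
    chmod 700 "$auth_dir"
    touch "$auth_file"
    chmod 600 "$auth_file"

    # -F: fixed strings, -x: whole-line match, -q: quiet, -f: patterns from file.
    if ! grep -qxF -f "$pubkey_file" "$auth_file"; then
        # ">>" appends; ">" would overwrite the existing keys.
        cat "$pubkey_file" >> "$auth_file"
    fi
}

# Example use on node-2, after copying the key from node-1 with
#   scp ~/.ssh/id_rsa.pub node-2.test.com:
# append_key_once ~/id_rsa.pub ~/.ssh/authorized_keys
```

Running the function twice with the same key leaves a single copy in the file, so the step can be repeated on each node without creating duplicates.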

Prepare node-1

Because node-1 should run your primary Master and ZooKeeper processes, you must stop the RegionServer from starting on node-1:

Edit conf/regionservers by removing the line that contains localhost and adding lines with the host names or IP addresses for node-2 and node-3.

Note: If you want to run a RegionServer on node-1, refer to it by the host name that the other servers use to communicate with it. For example, node-1 is referred to as node-1.test.com.

Configure HBase to use node-2 as a backup Master by creating a new file in conf/ called backup-masters, and adding a new line to it with the host name for node-2: for example, node-2.test.com.

Configure ZooKeeper on node-1 by editing conf/hbase-site.xml and adding the following properties:

<property>
  <name>hbase.zookeeper.quorum</name>
  <value>node-1.test.com,node-2.test.com,node-3.test.com</value>
</property>
<property>
  <name>hbase.zookeeper.property.dataDir</name>
  <value>/usr/local/zookeeper</value>
</property>

This configuration directs HBase to start and manage a ZooKeeper instance on each node of the cluster. You can learn more about configuring ZooKeeper in the ZooKeeper section.

Wherever your configuration refers to node-1 as localhost, change the reference to the host name that the other nodes use for node-1: in this example, node-1.test.com.
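The file edits in this procedure can be sketched as a short script. This is only an illustration using the example host names from this section: the function names write_ha_conf and find_localhost_refs are hypothetical, and the conf directory is passed as an argument rather than assumed.

```shell
#!/bin/sh
# Hypothetical helper: write the host lists for the three-node example.
# Usage: write_ha_conf <conf-dir>
write_ha_conf() {
    conf_dir=$1
    # One RegionServer host per line; node-1 is left out on purpose
    # so that no RegionServer starts there.
    printf '%s\n' node-2.test.com node-3.test.com > "$conf_dir/regionservers"
    # One backup Master host per line.
    printf '%s\n' node-2.test.com > "$conf_dir/backup-masters"
}

# Hypothetical helper: print any remaining localhost references with
# file name and line number. Prints nothing if there are none.
# Usage: find_localhost_refs <conf-dir>
find_localhost_refs() {
    grep -n localhost \
        "$1/regionservers" "$1/backup-masters" "$1/hbase-site.xml" \
        2>/dev/null || true
}

# Example use from the HBase installation directory:
# write_ha_conf conf
# find_localhost_refs conf
```

Note that write_ha_conf overwrites both files, so it suits a fresh setup; on an existing configuration, edit the files by hand instead.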

Prepare node-2 and node-3

Each node of your cluster must have the same configuration information, so complete the node-1 configuration before you prepare node-2 and node-3.

node-2 runs as a backup Master server and a ZooKeeper instance.

Download and unpack HBase on node-2 and node-3.

Copy the configuration files from node-1 to node-2 and node-3.

Copy the contents of the conf/ directory on node-1 to the conf/ directory on node-2 and node-3.
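The copy step can be scripted from node-1. The sketch below assumes that HBase is unpacked at the same path on every node and that passwordless SSH is already working; neither the function name sync_conf nor the DRY_RUN variable comes from HBase. With DRY_RUN set to 1, the commands are printed instead of run, which is a convenient way to check the host list first.

```shell
#!/bin/sh
# Hypothetical helper: copy conf/ from this node to the other nodes.
# Assumes the same HBase installation path on every host.
# Usage: sync_conf <hbase-home> <host> [<host> ...]
sync_conf() {
    hbase_home=$1
    shift
    for host in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            # Only show what would be executed.
            echo "scp -r $hbase_home/conf $host:$hbase_home/"
        else
            # Copies the conf directory into <hbase-home>/ on the remote host,
            # overwriting files of the same name.
            scp -r "$hbase_home/conf" "$host:$hbase_home/"
        fi
    done
}

# Example use on node-1 (the path is a placeholder):
# sync_conf /path/to/hbase node-2.test.com node-3.test.com
```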

Start and Test Your HBase Cluster

Use the jps command to ensure that HBase is not running.

Kill HMaster, HRegionServer, and HQuorumPeer processes, if they are running.

Start the cluster by running the start-hbase.sh command on node-1.

Your output is similar to this:

$ bin/start-hbase.sh
node-3.test.com: starting zookeeper, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-zookeeper-node-3.test.com.out
node-1.test.com: starting zookeeper, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-zookeeper-node-1.test.com.out
node-2.test.com: starting zookeeper, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-zookeeper-node-2.test.com.out
starting master, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-master-node-1.test.com.out
node-3.test.com: starting regionserver, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-regionserver-node-3.test.com.out
node-2.test.com: starting regionserver, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-regionserver-node-2.test.com.out
node-2.test.com: starting master, logging to /home/hbuser/hbase-0.98.3-hadoop2/bin/../logs/hbase-hbuser-master-node-2.test.com.out

ZooKeeper starts first, followed by the Master, then the RegionServers, and finally the backup Masters.

Run the jps command on each node to verify that the correct processes are running on each server.

You might see additional Java processes running on your servers as well, if they are used for any other purposes.

Example 1. node-1 jps Output

$ jps
20355 Jps
20071 HQuorumPeer
20137 HMaster

Example 2. node-2 jps Output

$ jps
15930 HRegionServer
16194 Jps
15838 HQuorumPeer
16010 HMaster

Example 3. node-3 jps Output

$ jps
13901 Jps
13639 HQuorumPeer
13737 HRegionServer
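Checking the jps output by eye works for three nodes, but the comparison is easy to script. The following is a sketch only: missing_daemons is a hypothetical helper, and it assumes the default jps output format of a process ID followed by the main class name, as in the examples above. It reads jps output on standard input and prints each expected daemon name that does not appear.

```shell
#!/bin/sh
# Hypothetical helper: print the expected daemons that are absent
# from jps output supplied on standard input.
# Usage: jps | missing_daemons <name> [<name> ...]
missing_daemons() {
    jps_output=$(cat)
    for name in "$@"; do
        # Compare the second field exactly, so that a partial match
        # on a longer class name does not count.
        if ! printf '%s\n' "$jps_output" |
            awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'
        then
            echo "$name"
        fi
    done
}

# Example use, matching the roles in this section:
# on node-1: jps | missing_daemons HQuorumPeer HMaster
# on node-2: jps | missing_daemons HQuorumPeer HMaster HRegionServer
# on node-3: jps | missing_daemons HQuorumPeer HRegionServer
```

Empty output means every expected process was found on that node.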

ZooKeeper Process Name

Note: The HQuorumPeer process is a ZooKeeper instance that is started and controlled by HBase. If you use ZooKeeper this way, it is limited to one instance per cluster node and is appropriate for testing only. If ZooKeeper is run outside of HBase, the process is called QuorumPeer. For more about ZooKeeper configuration, including using an external ZooKeeper instance with HBase, see the ZooKeeper section.

Browse to the Web UI and test your new connections.

You should be able to connect to the UI for the Master at http://node-1.test.com:16010/ or for the secondary Master at http://node-2.test.com:16010/. If you can connect through localhost but not from another host, check your firewall rules. You can see the web UI for each of the RegionServers at port 16030 of their IP addresses, or by clicking their links in the web UI for the Master.
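The same check can be run from a terminal on another host, which also helps separate firewall problems from HBase problems. This sketch assumes curl is installed and uses the ports given in this section; the function names ui_url and ui_reachable are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: build the web UI URL for a host and port.
# Usage: ui_url <host> <port>
ui_url() {
    printf 'http://%s:%s/\n' "$1" "$2"
}

# Hypothetical helper: succeed if the UI answers within 5 seconds
# with a status below 400.
# Usage: ui_reachable <host> <port>
ui_reachable() {
    curl --silent --fail --max-time 5 --output /dev/null "$(ui_url "$1" "$2")"
}

# Example use:
# for host in node-1.test.com node-2.test.com; do
#     if ui_reachable "$host" 16010; then
#         echo "$host: Master UI reachable"
#     else
#         echo "$host: Master UI not reachable"
#     fi
# done
```

On releases that still use the older ports, pass 60010 or 60030 instead.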

Web UI Port Changes

Note: In HBase versions newer than 0.98.x, the HTTP ports used by the HBase Web UI changed from 60010 for the Master and 60030 for each RegionServer to 16010 for the Master and 16030 for each RegionServer.