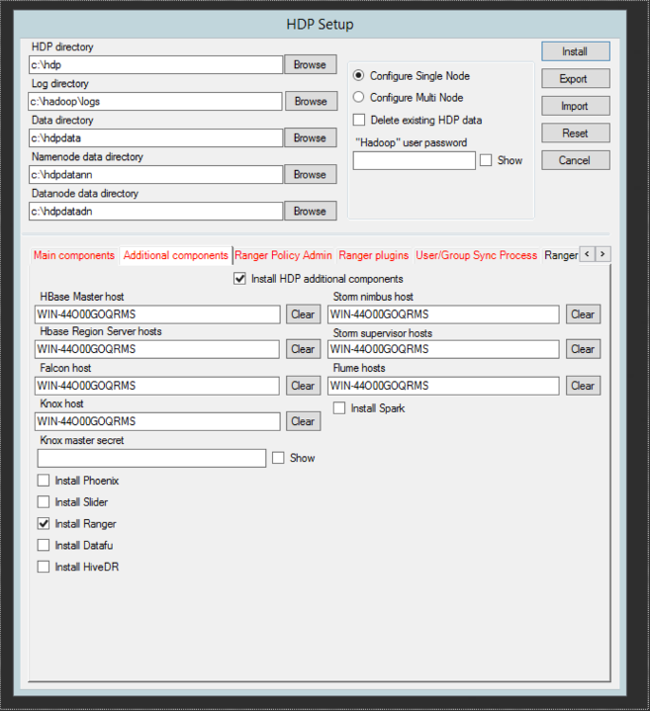

The top part of the form, which includes HDP directory, Log directory, Data directory, Name node data directory and Data node data directory, is filled in with default values. Customize these entries as needed, and note whether you are configuring a single- or multi-node installation.

Complete the fields at the top of the HDP Setup form:

Table 2.1. Main component screen values

| Configuration Property Name | Description | Example Value | Mandatory/Optional/Conditional |
| --- | --- | --- | --- |
| HDP directory | HDP installation directory | d:\hdp | Mandatory |
| Log directory | HDP's operational logs are written to this directory on each cluster host. Ensure that you have sufficient disk space for storing these log files. | d:\hadoop\logs | Mandatory |
| Data directory | HDP data will be stored in this directory on each cluster node. You can add multiple comma-separated data locations for multiple data directories. | d:\hdp\data | Mandatory |
| Name node data directory | Determines where on the local file system the HDFS name node should store the name table (fsimage). You can add multiple comma-separated data locations for multiple data directories. | d:\hdpdata\hdfs | Mandatory |
| Data node data directory | Determines where on the local file system an HDFS data node should store its blocks. You can add multiple comma-separated data locations for multiple data directories. | d:\hdpdata\hdfs | Mandatory |
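The directory fields accept several comma-separated locations, so it can help to check a value before typing it into the form. The following is a minimal sketch, not part of the HDP installer: the function name `split_data_dirs` and the second location `e:\hdp\data` are assumptions made for illustration.

```python
from pathlib import PureWindowsPath
from typing import List


def split_data_dirs(value: str) -> List[PureWindowsPath]:
    """Split a comma-separated directory field into absolute Windows paths."""
    dirs = []
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue  # tolerate trailing commas and doubled separators
        path = PureWindowsPath(part)
        if not path.is_absolute():
            # Relative paths are rejected here; this is a choice of the sketch.
            raise ValueError(f"not an absolute Windows path: {part!r}")
        dirs.append(path)
    if not dirs:
        raise ValueError("at least one directory is required")
    return dirs


# Hypothetical two-location value for the Data directory field.
data_dirs = split_data_dirs(r"d:\hdp\data, e:\hdp\data")
print([str(p) for p in data_dirs])
```

`PureWindowsPath` parses Windows-style paths on any operating system, so the check can run on a workstation before the value is entered on a cluster host.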

To choose single- or multi-node deployment, select one of the following:

- Configure Single Node: installs all cluster nodes on the current host; the host name fields are pre-populated with the name of the current computer. For information on installing on a single-node cluster, see the Quick Start Guide for Installing HDP for Windows on a Single-Node Cluster.
- Configure Multi Node: creates a property file, which you can use for cluster deployment or to manually install a node (or subset of nodes) on the current computer.

Specify whether or not you want to delete existing HDP data.

If you want to delete existing HDP data, select Delete existing HDP data and supply the hadoop user password in the field immediately below. (You can either shield the password while entering it or select Show to show it.)

Mandatory: Enter the password for the hadoop super user (the administrative user). This password enables you to log in as the administrative user and perform administrative actions. Password requirements are controlled by Windows, and typically require that the password include a combination of uppercase and lowercase letters, digits, and special characters.

Specify component-related values:

Table 2.2. Component configuration property information

| Configuration Property Name | Description | Example Value | Mandatory/Optional/Conditional |
| --- | --- | --- | --- |
| NameNode Host | The FQDN for the cluster node that will run the NameNode master service. | NAMENODE_MASTER.acme.com | Mandatory |
| Secondary NameNode Host | The FQDN for the cluster node that will run the Secondary NameNode master service. (Not applicable for HA.) | SECONDARY_NN_MASTER.acme.com | Mandatory/NA |
| ResourceManager Host | The FQDN for the cluster node that will run the YARN Resource Manager master service. | RESOURCE_MANAGER.acme.com | Mandatory |
| Hive Server Host | The FQDN for the cluster node that will run the Hive Server master service. | HIVE_SERVER_MASTER.acme.com | Mandatory |
| Oozie Server Host | The FQDN for the cluster node that will run the Oozie Server master service. | OOZIE_SERVER_MASTER.acme.com | Mandatory |
| WebHCat Host | The FQDN for the cluster node that will run the WebHCat master service. | WEBHCAT_MASTER.acme.com | Mandatory |
| Slave hosts | A comma-separated list of FQDNs for those cluster nodes that will run the DataNode and TaskTracker services. | slave1.acme.com, slave2.acme.com, slave3.acme.com | Mandatory |
| Client hosts | A comma-separated list of FQDNs for those cluster nodes that will store JARs and other job-related files. | client.acme.com, client1.acme.com, client2.acme.com | Optional |
| Zookeeper Hosts | A comma-separated list of FQDNs for those cluster nodes that will run ZooKeeper. | ZOOKEEPER-HOST.acme.com | Mandatory |
| Enable LZO codec | Use LZO compression for HDP. | Selected | Optional |
| Use Tez in Hive | Install Tez on the Hive host. | Selected | Optional |
| Enable GZip compression | Enable gzip file compression. | Selected | Optional |
| Install the Oozie Web console | Install web-based console for Oozie. The Oozie Web console requires the ext-2.2.zip file. | Selected | Optional |
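Several of the host fields take a comma-separated list of FQDNs, and a stray space inside a name is easy to miss. The sketch below shows one way to split and sanity-check such a value before entering it. The function `parse_host_list` is hypothetical, and its checks (no whitespace inside a name, at least two non-empty dot-separated labels, no duplicates) are assumptions of this sketch rather than rules enforced by the HDP Setup form.

```python
from typing import List


def parse_host_list(value: str) -> List[str]:
    """Split a comma-separated host field and apply basic FQDN sanity checks."""
    hosts: List[str] = []
    seen = set()
    for part in value.split(","):
        host = part.strip()
        if not host:
            continue  # ignore empty entries such as a trailing comma
        if any(ch.isspace() for ch in host):
            raise ValueError(f"host name contains whitespace: {host!r}")
        labels = host.split(".")
        if len(labels) < 2 or any(not label for label in labels):
            raise ValueError(f"not a fully qualified name: {host!r}")
        key = host.lower()  # DNS names compare case-insensitively
        if key in seen:
            raise ValueError(f"duplicate host: {host!r}")
        seen.add(key)
        hosts.append(host)
    return hosts


print(parse_host_list("slave1.acme.com, slave2.acme.com, slave3.acme.com"))
```

The check is deliberately loose about which characters a label may contain, so names such as `NAMENODE_MASTER.acme.com` from the table pass unchanged.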

Enter database information for Hive and Oozie at the bottom of the form:

Table 2.3. Hive and Oozie configuration property information

| Configuration Property Name | Description | Example Value | Mandatory/Optional |
| --- | --- | --- | --- |
| Hive DB Name | The name of the database used for Hive. | hivedb | Mandatory |
| Hive DB User name | User account credentials for Hive metastore database instance. Ensure that this user account has appropriate permissions. | hive_user | Mandatory |
| Hive DB Password | User account credentials for Hive metastore database instance. Ensure that this user account has appropriate permissions. | hive_pass | Mandatory |
| Oozie DB Name | Database for Oozie metastore. If using SQL Server, ensure that you create the database on the SQL Server instance. | ooziedb | Mandatory |
| Oozie DB User name | User account credentials for Oozie metastore database instance. Ensure that this user account has appropriate permissions. | oozie_user | Mandatory |
| Oozie DB Password | User account credentials for Oozie metastore database instance. Ensure that this user account has appropriate permissions. | oozie_pass | Mandatory |
| DB Flavor | Database type for Hive and Oozie metastores (allowed databases are SQL Server and Derby). To use the default embedded Derby instance, set the value of this property to derby. To use an existing SQL Server instance as the metastore DB, set the value to mssql. | mssql or derby | Mandatory |
| Database host name | FQDN for the node where the metastore database service is installed. If using SQL Server, set the value to your SQL Server host name. If using Derby for Hive metastore, set the value to HIVE_SERVER_HOST. | sqlserver1.acme.com | Mandatory |
| Database port | This is an optional property required only if you are using SQL Server for Hive and Oozie metastores. By default, the database port is set to 1433. | 1433 | Optional |
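The DB Flavor, Database host name, and Database port rows depend on one another: Derby uses the Hive Server host and no port, while SQL Server needs its own host name and falls back to port 1433. The sketch below restates that logic in code. The names `MetastoreDb` and `resolve_metastore_db` are hypothetical and are not part of the HDP installer.

```python
from dataclasses import dataclass
from typing import Optional

DEFAULT_MSSQL_PORT = 1433  # default database port stated in the table above


@dataclass
class MetastoreDb:
    flavor: str
    host: str
    port: Optional[int]  # None when no port applies (embedded Derby)


def resolve_metastore_db(
    flavor: str,
    hive_server_host: str,
    db_host: Optional[str] = None,
    db_port: Optional[int] = None,
) -> MetastoreDb:
    """Work out the effective database host and port for a given DB flavor."""
    flavor = flavor.strip().lower()
    if flavor == "derby":
        # Embedded Derby: the database host is the Hive Server host.
        return MetastoreDb("derby", hive_server_host, None)
    if flavor == "mssql":
        if not db_host:
            raise ValueError("SQL Server requires a database host name")
        return MetastoreDb("mssql", db_host, db_port or DEFAULT_MSSQL_PORT)
    raise ValueError(f"unsupported DB flavor: {flavor!r} (expected mssql or derby)")


print(resolve_metastore_db("mssql", "HIVE_SERVER_MASTER.acme.com", "sqlserver1.acme.com"))
print(resolve_metastore_db("derby", "HIVE_SERVER_MASTER.acme.com"))
```

Rejecting any flavor other than `mssql` or `derby` catches misspellings such as `msql` early, before they reach the form.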

To install HBase, Falcon, Knox, Storm, Flume, Spark, Phoenix, Slider, Ranger, DataFu, or HiveDR, click the Additional components tab, and complete the fields as shown in the table below:

Table 2.4. Additional components screen values

| Configuration Property Name | Description | Example Value | Mandatory/Optional/Conditional |
| --- | --- | --- | --- |
| HBase Master host | The FQDN for the cluster node that runs the HBase master | HBASE-MASTER.acme.com | Mandatory |
| Storm nimbus host | The FQDN for the cluster node that runs the Storm Nimbus master service | storm-host.acme.com | Optional |
| HBase region Server hosts | A comma-separated list of FQDNs for cluster nodes that run the HBase Region Server services | slave1.acme.com, slave2.acme.com, slave3.acme.com | Mandatory |
| Storm supervisor hosts | A comma-separated list of FQDNs for those cluster nodes that run the Storm Supervisors | storm-sup-host.acme.com | Optional |
| Falcon host | The FQDN for the cluster node that runs Falcon | falcon-host.acme.com | |
| Flume hosts | A comma-separated list of FQDNs for cluster nodes that run Flume | flume-host.acme.com | Optional |
| Install Spark | Indicates that you want to install Spark | Check box selected | Optional |
| Spark job history server | Specifies the Spark job history server | spark-host.acme.com | Optional |
| Spark hive metastore | Specifies the Spark hive metastore value | metastore | Optional |
| Knox host | The FQDN for the cluster node that runs Knox | knox-host.acme.com | Mandatory |
| Knox Master secret | Password for starting and stopping the gateway | knox-secret | Mandatory |
| Install Phoenix | Install Phoenix on the HBase server | Selected | Optional |
| Install Slider | Install Slider platform services for the YARN environment | Selected | Optional |
| Install Ranger | Installs Ranger security | Selected | Optional |
| Install DataFu | Install DataFu user-defined functions for data analysis | Selected | Optional |
| Install HiveDR | Installs HiveDR | Check box selected | Optional |
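Before submitting the Additional components tab, it can be useful to confirm that every field marked Mandatory has a value. The following is a minimal sketch under stated assumptions: the `form` dictionary and the `missing_mandatory` helper are hypothetical, and the field list simply copies the entries marked Mandatory in Table 2.4. Falcon host is left out because the table gives no status for it.

```python
from typing import Dict, List

# Fields marked Mandatory in Table 2.4 (labels as they appear on the form).
MANDATORY_FIELDS = [
    "HBase Master host",
    "HBase region Server hosts",
    "Knox host",
    "Knox Master secret",
]


def missing_mandatory(values: Dict[str, str]) -> List[str]:
    """Return the mandatory field names that are absent or blank."""
    return [name for name in MANDATORY_FIELDS if not values.get(name, "").strip()]


# Hypothetical set of values with the Knox Master secret left empty.
form = {
    "HBase Master host": "HBASE-MASTER.acme.com",
    "HBase region Server hosts": "slave1.acme.com, slave2.acme.com, slave3.acme.com",
    "Knox host": "knox-host.acme.com",
    "Knox Master secret": "",
}
print(missing_mandatory(form))  # prints ['Knox Master secret']
```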