Troubleshooting with the Job Comparison Feature

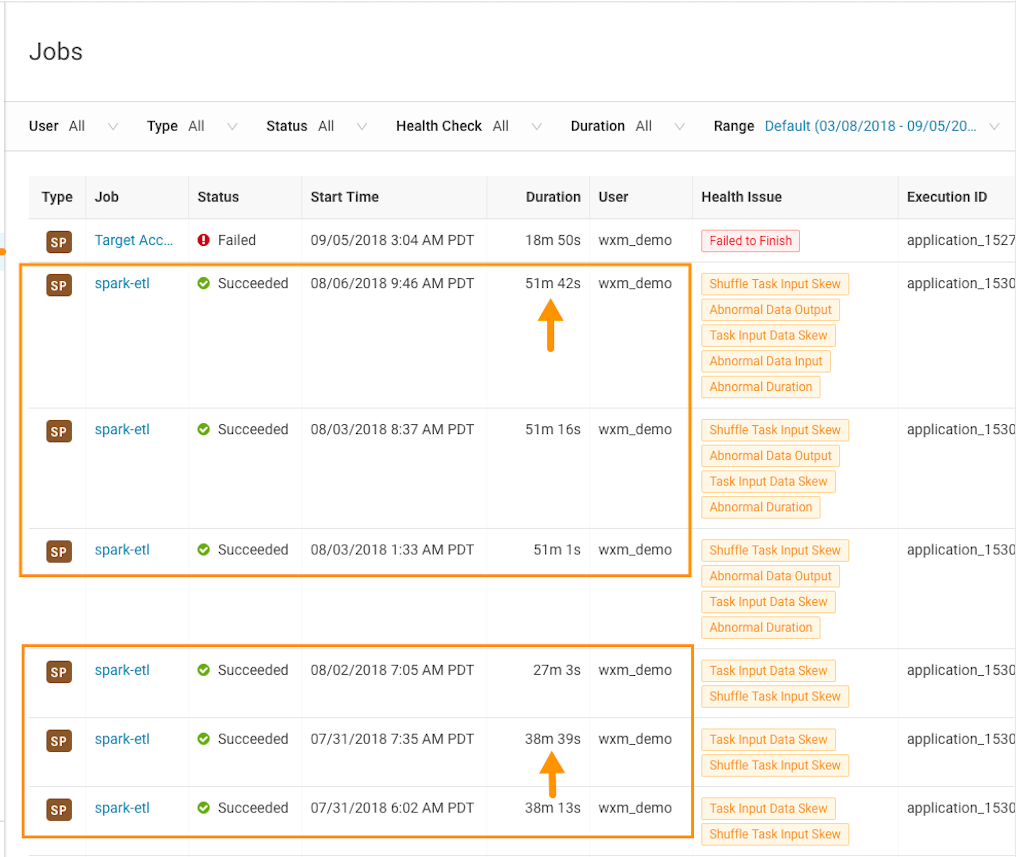

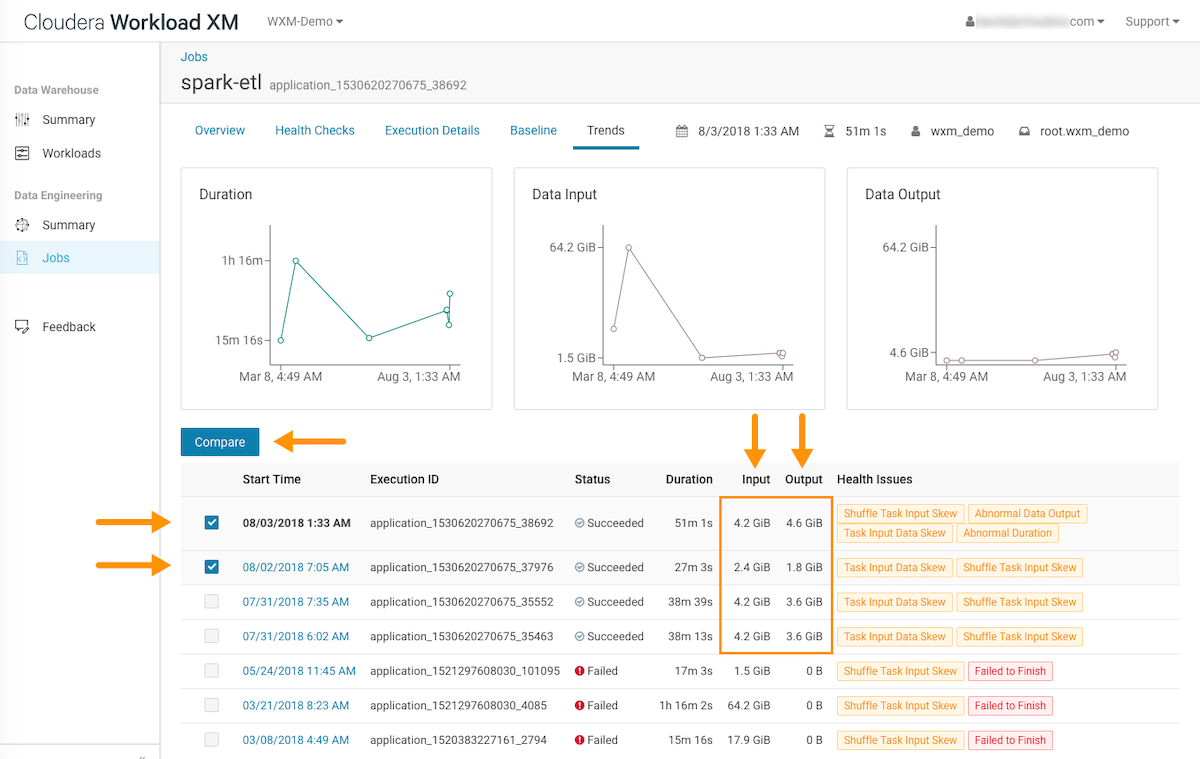

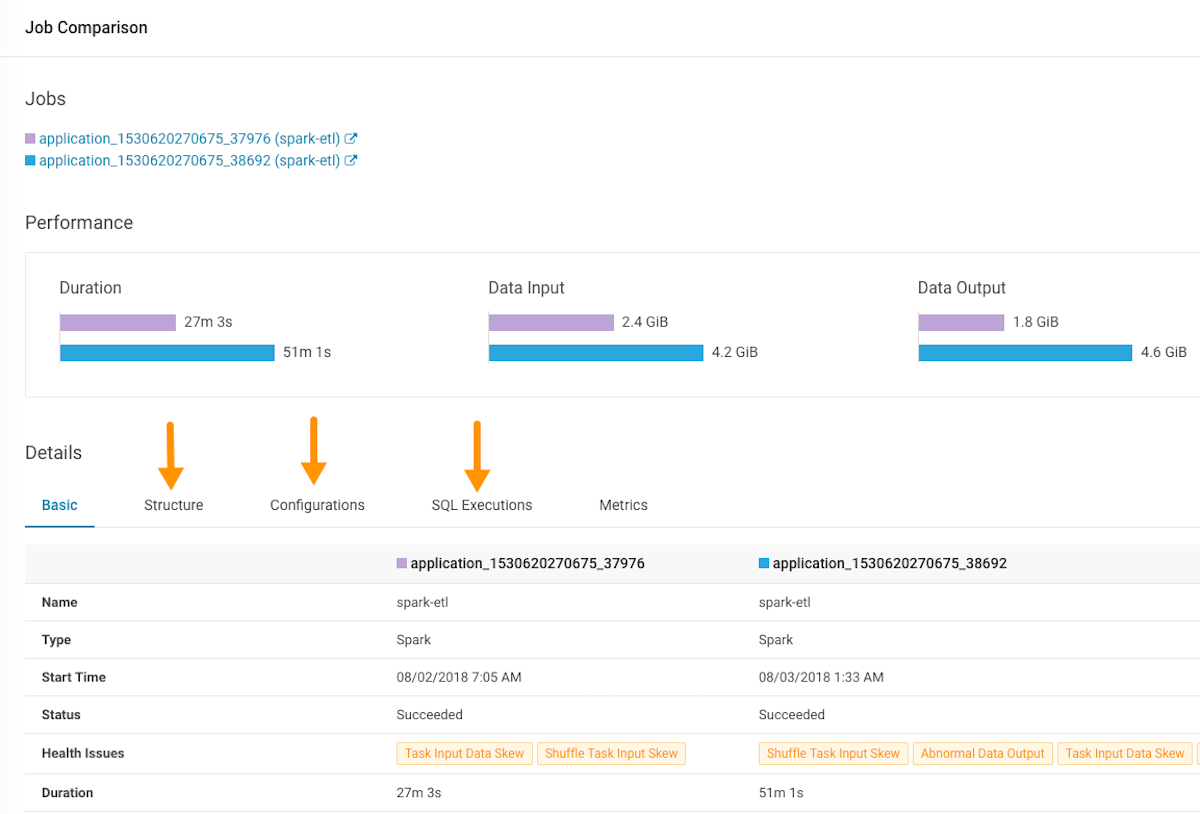

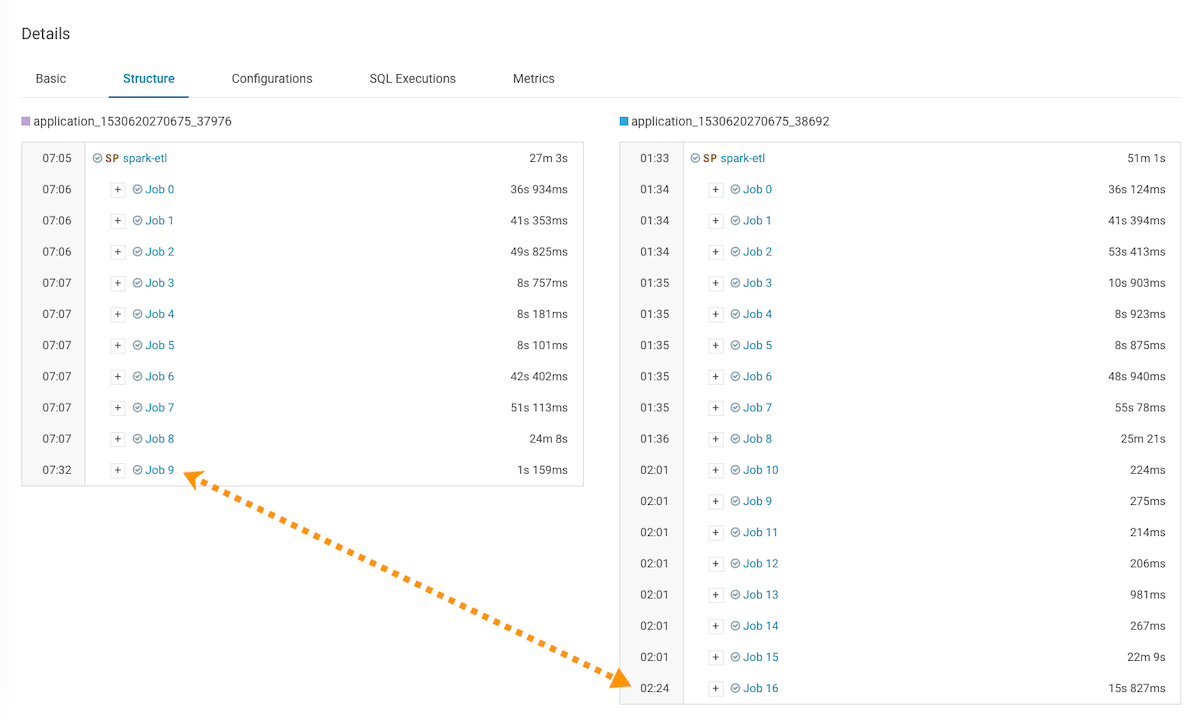

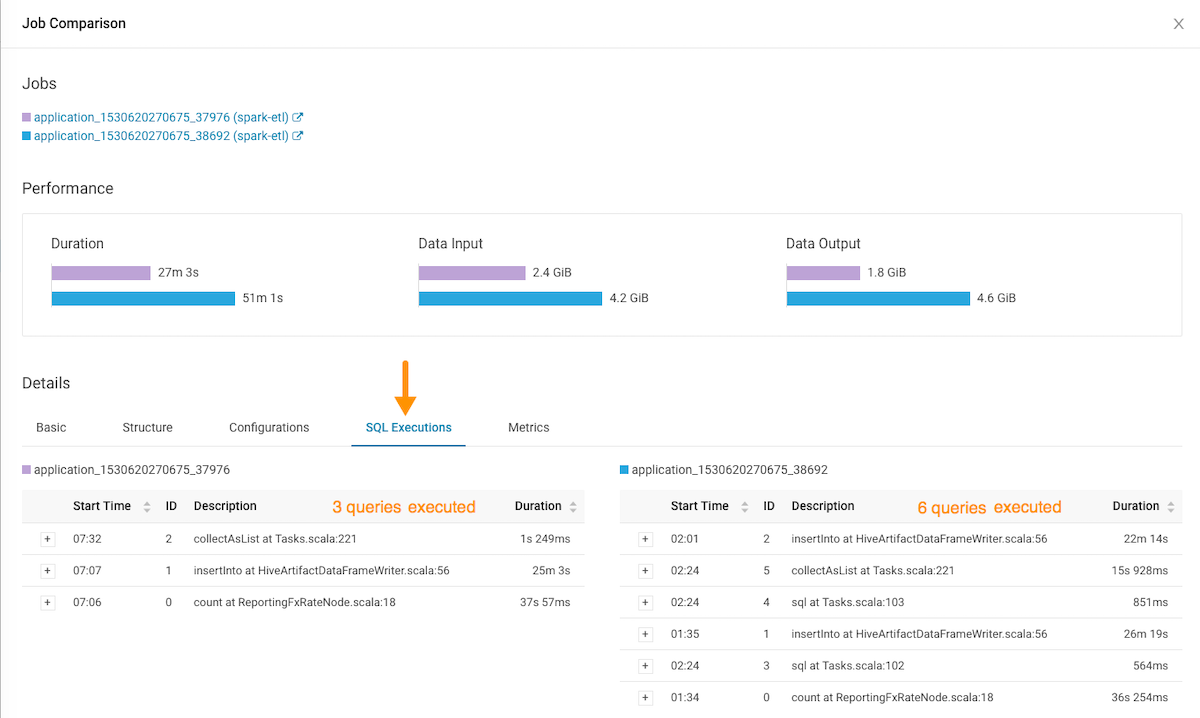

This topic describes how to compare two different runs of the same job, which is especially useful when you notice unexpected changes. For example, if a job that consistently completes within a specific amount of time starts taking longer, comparing two runs of that job lets you analyze the differences and troubleshoot the cause.

The steps include examples that show how to investigate and troubleshoot further.