Configuring Transient Apache Hive ETL Jobs to Use the Amazon S3 Filesystem in CDH

Apache Hive is a popular choice for batch extract-transform-load (ETL) jobs such as cleaning, serializing, deserializing, and transforming data. In on-premises deployments, ETL jobs operate on data stored in a permanent Hadoop cluster that runs HDFS on local disks. However, ETL jobs are frequently transient and can benefit from cloud deployments where cluster nodes can be quickly created and torn down as needed. Because you pay for compute nodes only while a job is running, this approach can translate to significant cost savings.

For information about how to set up a shared Amazon Relational Database Service (RDS) as your Hive metastore, see How To Set Up a Shared Amazon RDS as Your Hive Metastore for CDH. For information about tuning Hive read and write performance to the Amazon S3 file system, see Tuning Apache Hive Performance on the Amazon S3 Filesystem in CDH.

About Transient Jobs

Most ETL jobs on transient clusters run from scripts that make API calls to a provisioning service such as Cloudera Director. They can be triggered by external events, such as IoT (internet of things) events like reaching a temperature threshold, or they can be run on a regular schedule, such as every day at midnight.

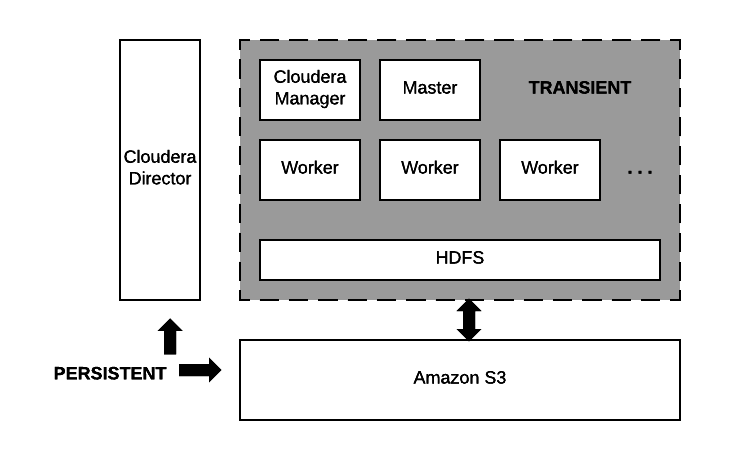

Transient Jobs Hosted on Amazon S3

Data residing on Amazon S3 and the node running Cloudera Director are the only persistent components. The computing nodes and local storage come and go with each transient workload.

Configuring and Running Jobs on Transient Clusters

Using AWS to run transient jobs involves the following steps, which are documented in an end-to-end example you can download from this Cloudera GitHub repository. Use this example to test transient clusters with Cloudera Director.

- Configure AWS settings.

- Install Cloudera Director server and client.

- Design and test a cluster configuration file for the job.

- Prepare Amazon Machine Images (AMIs) with preloaded and pre-extracted CDH parcels.

- Package the job into a shell script with the necessary bootstrap steps.

- Prepare a job submission script.

- Schedule the recurring job.

See Tuning Hive Write Performance on the Amazon S3 Filesystem for information about tuning Hive to write data to S3 tables or partitions more efficiently.

Configuring AWS Settings

Use the AWS web console to configure Virtual Private Clouds (VPCs), Security Groups, and Identity and Access Management (IAM) roles on AWS before you install Cloudera Director.

Best Practices

Networking

Cloudera recommends deploying clusters within a VPC, using Security Groups to control network traffic. Each cluster should have outbound internet connectivity through a NAT (network address translation) server when you deploy in a private subnet. If you deploy in a public subnet, each cluster needs direct connectivity. Inbound connections should be limited to traffic from private IPs within the VPC and SSH access through port 22 to the gateway nodes from approved IP addresses. For details about using Cloudera Director to perform these steps, see Setting up the AWS Environment.

Data Access

Create an IAM role that gives the cluster access to S3 buckets. Using IAM roles is a more secure way to provide access to S3 than adding the S3 keys to Cloudera Manager by configuring core-site.xml safety valves.
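As a rough illustration of the IAM approach, the sketch below prints a policy document that grants read and write access to a single bucket. The function name `s3_bucket_policy` and the bucket name in the usage note are hypothetical, and the list of actions is an assumption; narrow it to what your job actually needs before attaching the policy to a role.

```shell
# Hypothetical helper: print an IAM policy document for one S3 bucket.
# The action list is an assumption; adjust it to your job's needs.
s3_bucket_policy() {
  local bucket="$1"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": "arn:aws:s3:::${bucket}"
    },
    {
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
      "Resource": "arn:aws:s3:::${bucket}/*"
    }
  ]
}
EOF
}
```

For example, `s3_bucket_policy my-etl-bucket > policy.json` writes the document to a file that you can paste into the IAM console when you create the role.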

AWS Placement Groups

To improve performance, place worker nodes in an AWS placement group. See Placement Groups in the AWS documentation set.

Install Cloudera Director

See Launching an EC2 Instance for Cloudera Director. Install Cloudera Director server and client in a virtual machine that can reach the VPC you set up in the Configuring AWS Settings section.

Create the Cluster Configuration File

The cluster configuration file contains the information that Director needs to bootstrap and properly configure a cluster:

- Deployment environment configuration.

- Instance groups configuration.

- List of services.

- Pre- and post-creation scripts.

- Custom service and role configurations.

- Billing ID and license for usage-based (hourly) billing of Cloudera Director. See Usage-Based Billing.

Creating the cluster configuration file represents the bulk of the work of configuring Hive to use the S3 filesystem. This GitHub repository contains sample configurations for different cloud providers.

Testing the Cluster Configuration File

After defining the cluster configuration file, test it to make sure it runs without errors:

- Use secure shell (SSH) to log in to the server running Cloudera Director.

- Run the validate command by passing the configuration file to it:

    cloudera-director validate <cluster_configuration_file_name.conf>

If Cloudera Director server is running in a separate instance from the Cloudera Director client, you must run:

    cloudera-director bootstrap-remote <cluster_configuration_file_name.conf> --lp.remote.username=<admin_username> --lp.remote.password=<admin_password> --lp.remote.hostAndPort=<host_name>:<port_number>

Prepare the CDH AMIs

Preloaded AMIs that contain already-extracted CDH parcels are not required, but they significantly speed up cluster provisioning. See this repo in GitHub for instructions and scripts that create preloaded AMIs.

After you have created preloaded AMIs, replace the AMI IDs in the cluster configuration file with the new preloaded AMI IDs to ensure that all cluster instances use the preloaded AMIs.
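The replacement can be scripted. The following is a minimal sketch, assuming the old AMI ID appears literally in the configuration file; the function name `replace_ami` and the file name and AMI IDs in the usage note are hypothetical.

```shell
# Hypothetical helper: swap one AMI ID for another throughout a config file.
# Keeps a .bak copy of the original next to the file.
replace_ami() {
  local old_ami="$1" new_ami="$2" conf="$3"
  cp "$conf" "$conf.bak"
  # AMI IDs contain only letters, digits, and a hyphen, so they are
  # safe to use directly as a sed pattern.
  sed "s/${old_ami}/${new_ami}/g" "$conf.bak" > "$conf"
}
```

For example, `replace_ami ami-0aaa1111 ami-0ccc3333 cluster.conf` rewrites every occurrence and leaves the original in `cluster.conf.bak` so that you can compare the two files before testing.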

Run the Cloudera Director validate command again to test bringing up the cluster. See Testing the Cluster Configuration File. The cluster should come up significantly faster than it did when you tested it before.

Prepare the Job Wrapper Script

Define the Hive query or job that you want to execute and a wrapper shell script that runs required prerequisite commands before it executes the query or job on the transient cluster. The Director public GitHub repository contains simple examples of a MapReduce job wrapper script and an Oozie job wrapper script.

For example, the following is a Bash shell wrapper script for a Hive query:

    set -x -e
    sudo -u hdfs hadoop fs -mkdir /user/ec2-user
    sudo -u hdfs hadoop fs -chown ec2-user:ec2-user /user/ec2-user
    hive -f query.q
    exit 0

Here, query.q contains the Hive query. After you create the job wrapper script, test it to make sure it runs without errors.
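A cheap first check, before running the wrapper on a real cluster, is to parse it without executing it. The sketch below uses `bash -n` for that; the function name `check_wrapper_syntax` and the script name in the usage note are hypothetical. A syntax check catches only parse errors, so it complements a real test run rather than replacing it.

```shell
# Hypothetical helper: parse a wrapper script without executing it.
# `bash -n` reads the script and reports syntax errors only.
check_wrapper_syntax() {
  local script="$1"
  if bash -n "$script"; then
    echo "syntax OK: $script"
  else
    echo "syntax errors in: $script" >&2
    return 1
  fi
}
```

For example, `check_wrapper_syntax hive_job.sh` returns a nonzero status if the script does not parse.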

Log Collection

Save all relevant log files in S3 because they disappear when you terminate the transient cluster. Use these log files to debug any failed jobs after the cluster is terminated. To save the log files, add an additional step to your job wrapper shell script.

Example for copying Hive logs from a transient cluster node to S3:

    # Install AWS CLI
    curl "https://s3.amazonaws.com/aws-cli/awscli-bundle.zip" -o "awscli-bundle.zip"
    sudo yum install -y unzip
    unzip awscli-bundle.zip
    sudo ./awscli-bundle/install -i /usr/local/aws -b /usr/local/bin/aws

    # Set Credentials
    export AWS_ACCESS_KEY_ID=[]
    export AWS_SECRET_ACCESS_KEY=[]

    # Copy Log Files
    aws s3 cp /tmp/ec2-user/hive.log s3://bucket-name/output/hive/logs/
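Because the wrapper script runs with `set -e`, a failing Hive query ends the script before a copy step placed at the end can run, which is exactly when the logs matter most. One way to handle this, sketched below, is to register the copy as an `EXIT` trap near the top of the wrapper. The function name `upload_hive_log` and the `HIVE_LOG` and `LOG_DEST` variables are hypothetical; the default paths are the ones from the example above, and the sketch assumes the AWS CLI is already installed and has credentials or an IAM role.

```shell
# Hypothetical variables: adjust the log path and bucket to your environment.
HIVE_LOG="${HIVE_LOG:-/tmp/ec2-user/hive.log}"
LOG_DEST="${LOG_DEST:-s3://bucket-name/output/hive/logs/}"

# Copy the Hive log to S3 if it exists. An upload error is reported
# but does not change the exit status of the job itself.
upload_hive_log() {
  if [ -f "$HIVE_LOG" ]; then
    aws s3 cp "$HIVE_LOG" "$LOG_DEST" || echo "log upload failed" >&2
  fi
}

# Run upload_hive_log whenever the script exits, on success or failure.
trap upload_hive_log EXIT
```

With the trap in place, the rest of the wrapper stays unchanged, and the exit status seen by the caller is still that of the Hive job.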

Prepare the End-to-End Job Submission Script

This script automates the end-to-end workflow, including the following steps:

- Submit the transient cluster configuration file to Cloudera Director.

- Wait for the cluster to be provisioned and ready to use.

- Copy all job-related files to the cluster.

- Submit the job script to the cluster.

- Wait for the job to complete.

- Shut down the cluster.

See the Cloudera Engineering Blog post How-to: Integrate Cloudera Director with a Data Pipeline in the Cloud for information about creating an end-to-end job submission script. A sample script can be downloaded from GitHub here.
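The two waiting steps in this workflow are usually implemented as polling loops. The following is a minimal sketch of such a loop, not the logic of the sample script: `wait_until` is a hypothetical helper that retries any command until it succeeds or a timeout elapses. The readiness check you pass to it is an assumption and depends on how your cluster is reached.

```shell
# Hypothetical helper: run a command repeatedly until it succeeds
# or the timeout is reached.
# Usage: wait_until <timeout_seconds> <interval_seconds> <command> [args...]
wait_until() {
  local timeout="$1" interval="$2"
  shift 2
  local waited=0
  until "$@"; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out after ${waited}s waiting for: $*" >&2
      return 1
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done
}
```

For example, `wait_until 1800 30 ssh -o ConnectTimeout=5 ec2-user@"$GATEWAY_HOST" true` polls every 30 seconds for up to 30 minutes until the gateway node accepts SSH connections, where `GATEWAY_HOST` is a variable you would set yourself. Bounding the wait matters on transient clusters, because a job that hangs keeps billable instances running.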

Schedule the Recurring Job

To schedule the recurring job, either wrap the end-to-end job submission script in a cron job or trigger the script to run when a particular event occurs.
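For the cron case, a daily run at midnight corresponds to the schedule `0 0 * * *`. The sketch below builds such an entry; the function name `midnight_cron_entry` and the script and log paths in the usage note are hypothetical.

```shell
# Hypothetical helper: print a crontab entry that runs a script daily
# at midnight and appends its output to a log file.
midnight_cron_entry() {
  local script="$1" log="$2"
  printf '0 0 * * * %s >> %s 2>&1\n' "$script" "$log"
}
```

To install the entry without overwriting existing ones, you could run `( crontab -l 2>/dev/null; midnight_cron_entry /opt/etl/submit_job.sh /var/log/etl_job.log ) | crontab -` as the user that owns the job.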