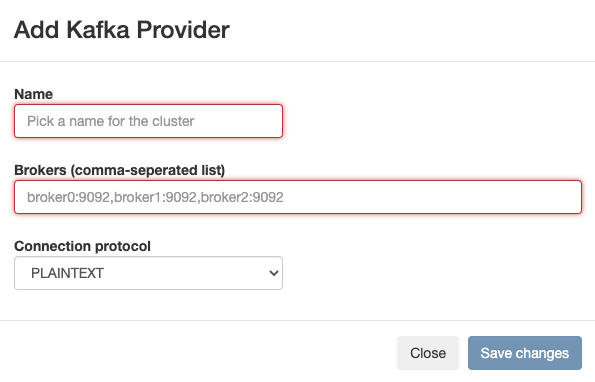

Adding Kafka as Data Provider

To create Kafka tables in SQL Stream Builder (SSB), you must first register Kafka as a Data Provider using the Streaming SQL Console.

- Make sure that you have the Kafka service on your cluster.

- Make sure that you have the right permissions set in Ranger.
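
As a rough first check of the Kafka prerequisite, the following sketch tests whether the broker addresses you plan to register accept TCP connections. It is only an illustration: the helper names (`parse_brokers`, `is_reachable`, `check_brokers`) and the example hostnames are hypothetical, and the broker list is assumed to use the comma-separated `host:port` form. A successful connection only shows that something is listening on that port. It does not confirm that the listener is Kafka, and it does not verify the permissions set in Ranger.

```python
import socket


def parse_brokers(broker_list):
    """Split a 'host1:9092,host2:9092' string into (host, port) tuples."""
    brokers = []
    for entry in broker_list.split(","):
        entry = entry.strip()
        if not entry:
            continue
        # rpartition keeps any colons in the host part intact
        host, _, port = entry.rpartition(":")
        brokers.append((host, int(port)))
    return brokers


def is_reachable(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures
        return False


def check_brokers(broker_list, timeout=3.0):
    """Map each 'host:port' entry to its reachability result."""
    return {
        f"{host}:{port}": is_reachable(host, port, timeout)
        for host, port in parse_brokers(broker_list)
    }


# Example call (hypothetical hostnames; replace with your own brokers):
# check_brokers("kafka-broker-1.example.com:9092,kafka-broker-2.example.com:9092")
```

If any entry maps to `False`, check with your cluster administrator that the Kafka service is installed and running before you register it in the Streaming SQL Console.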