Building your dataflow

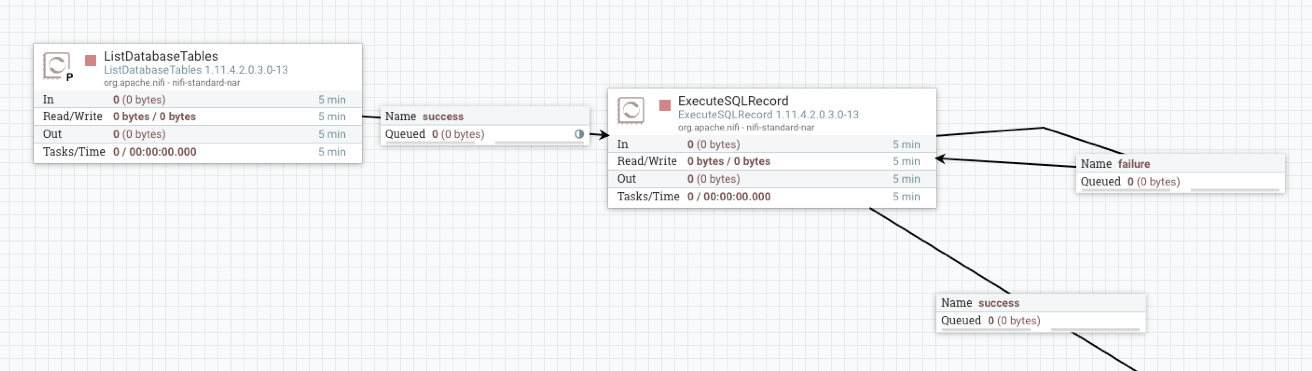

Set up the elements of your NiFi dataflow that enable you to move data out of Snowflake using Apache NiFi. This involves opening NiFi in CDP Public Cloud, adding processors to your NiFi canvas, and connecting the processors.
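You normally add processors by dragging them onto the canvas in the NiFi UI. If you prefer to script flow creation, the following minimal sketch builds the JSON request body for the NiFi REST API's create-processor call. It assumes the `POST /nifi-api/process-groups/{id}/processors` endpoint and a starting revision version of 0 for a new component. The process group ID is a hypothetical placeholder. The sketch does not send the request or handle the authentication that CDP Public Cloud requires.

```python
import json


def new_processor_request(processor_type: str, x: float, y: float) -> dict:
    """Build the JSON body for creating a processor through the NiFi REST API.

    Assumption: the body is POSTed to /nifi-api/process-groups/{id}/processors,
    and a brand-new component starts at revision version 0.
    """
    return {
        "revision": {"version": 0},
        "component": {
            "type": processor_type,  # fully qualified processor class name
            "position": {"x": x, "y": y},  # where the processor lands on the canvas
        },
    }


def processors_path(process_group_id: str) -> str:
    """Return the REST path for adding processors to a process group."""
    return f"/nifi-api/process-groups/{process_group_id}/processors"


if __name__ == "__main__":
    # "your-process-group-id" is a hypothetical placeholder, not a real ID.
    body = new_processor_request(
        "org.apache.nifi.processors.standard.ListDatabaseTables", 0.0, 0.0
    )
    print(processors_path("your-process-group-id"))
    print(json.dumps(body, indent=2))
```

Keeping the payload construction separate from the HTTP call makes it easy to inspect the request before sending it with whatever authenticated HTTP client your environment uses.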

When you build a dataflow to move data out of Snowflake using Apache NiFi, consider using the following processors:

- ListDatabaseTables

- ExecuteSQLRecord
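In the UI, you connect these processors by dragging a connection from ListDatabaseTables to ExecuteSQLRecord and selecting the `success` relationship. The following minimal sketch builds the equivalent NiFi REST API request body. It assumes the `POST /nifi-api/process-groups/{id}/connections` endpoint and a starting revision version of 0. The group and processor IDs in the usage section are hypothetical placeholders, and the sketch does not send the request.

```python
import json
from typing import List


def new_connection_request(group_id: str, source_id: str, destination_id: str,
                           relationships: List[str]) -> dict:
    """Build the JSON body for connecting two processors via the NiFi REST API.

    Assumption: the body is POSTed to /nifi-api/process-groups/{group_id}/connections.
    """
    return {
        "revision": {"version": 0},
        "component": {
            "source": {"id": source_id, "groupId": group_id, "type": "PROCESSOR"},
            "destination": {"id": destination_id, "groupId": group_id, "type": "PROCESSOR"},
            # Only FlowFiles routed to these relationships travel over the connection.
            "selectedRelationships": relationships,
        },
    }


if __name__ == "__main__":
    # Hypothetical IDs: in practice, use the IDs NiFi returns when it creates the processors.
    body = new_connection_request(
        group_id="your-process-group-id",
        source_id="list-database-tables-id",
        destination_id="execute-sql-record-id",
        relationships=["success"],
    )
    print(json.dumps(body, indent=2))
```

ExecuteSQLRecord also has a `failure` relationship. You must connect it or auto-terminate it before the processor can start.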

Before you build the dataflow, make sure you have added the Snowflake CA certificates to the NiFi truststore.

Once you have finished building the dataflow, move on to the following steps:

- Create Controller Services for your dataflow.

- Configure your source Processor.

- Configure your target Processor.