Making an inference call to a Model Endpoint with an OpenAI API

Language models for text generation are deployed using NVIDIA’s NIM microservices. These model endpoints are compliant with the OpenAI protocol. See the NVIDIA NIM documentation for the supported OpenAI APIs and for NVIDIA NIM-specific extensions such as Function Calling and Structured Generation.

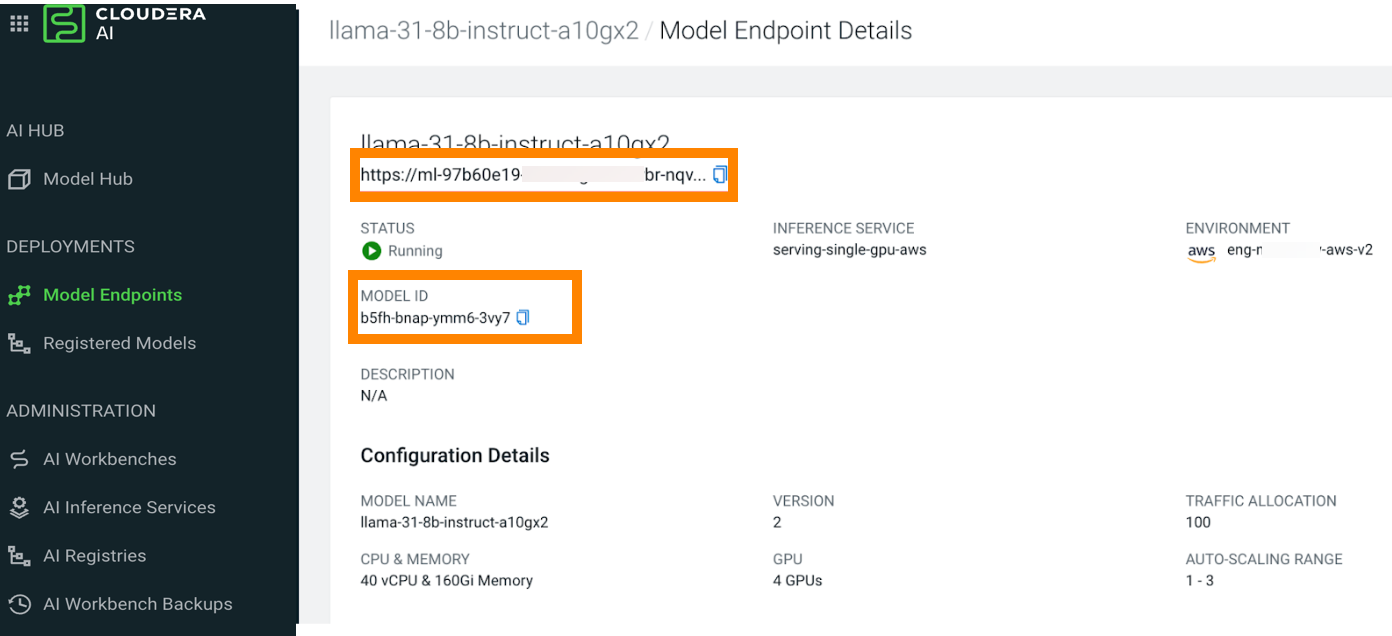

This section details the process for obtaining the Model Endpoint Details.

The base_url is the model endpoint URL up to the API version v1. To get the base_url, copy the model endpoint URL and delete the last two path components.

Copy the model endpoint URL from the Model Endpoint Details UI and modify it to the following form:

https://[***DOMAIN***]/namespaces/serving-default/endpoints/[***ENDPOINT_NAME***]/v1

The MODEL_NAME is the model name assigned to the model when it is registered to the Cloudera AI Registry. You can find this in the Model Endpoint Details UI.
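
The steps above can be sketched in Python as follows. This is a minimal illustration, not a definitive implementation. The helper names `derive_base_url` and `chat` are hypothetical. The example endpoint URL assumes that the two trailing path components are `chat/completions`. The sketch also assumes that the `openai` Python package is installed and that your deployment accepts an access token passed as the client's `api_key`; check your environment's authentication requirements before relying on this.

```python
from urllib.parse import urlsplit, urlunsplit


def derive_base_url(endpoint_url: str) -> str:
    """Drop the last two path components so the URL ends at the API version."""
    parts = urlsplit(endpoint_url)
    segments = [s for s in parts.path.split("/") if s]
    if len(segments) < 3:
        raise ValueError("endpoint URL has too few path components")
    base_path = "/" + "/".join(segments[:-2])
    # Discard any query string or fragment; only scheme, host, and path remain.
    return urlunsplit((parts.scheme, parts.netloc, base_path, "", ""))


def chat(base_url: str, model_name: str, api_key: str, prompt: str) -> str:
    """Send one chat message to the endpoint and return the reply text."""
    # Imported here so derive_base_url works without the openai package.
    from openai import OpenAI

    # Assumption: the access token is accepted as the OpenAI client's api_key.
    client = OpenAI(base_url=base_url, api_key=api_key)
    response = client.chat.completions.create(
        model=model_name,  # MODEL_NAME from the Model Endpoint Details UI
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


if __name__ == "__main__":
    # Hypothetical endpoint URL, as copied from the Model Endpoint Details UI.
    copied = (
        "https://example.com/namespaces/serving-default"
        "/endpoints/my-endpoint/v1/chat/completions"
    )
    print(derive_base_url(copied))
```

Deriving the base_url in code rather than by hand avoids trimming the wrong number of path components. The resulting value and the MODEL_NAME can then be passed to `chat` together with your own access token.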