June 22, 2022

This release of the Cloudera Data Warehouse (CDW) service on CDP Public Cloud has the following known issues:

New known issues in this release

- DWX-12758 DBC upgrade fails for some source versions

- In this release, if you are using a Database Catalog that was created in CDW 2022.0.7.0-80 (released April 27, 2022) or 2022.0.7.1-2 (released May 10, 2022), a failure can occur when you try to upgrade the Database Catalog.

- DWX-12528 Tags are not removed for environment activated in reduced permissions mode

- You see a DWX server log error, "... error delete tags" when in reduced permissions mode. You can ignore this error.

- DWX-12658 Rebuild materialized view after Database Catalog upgrade

- You must rebuild materialized views that were created in a version of the Database Catalog that is older than the version of your Virtual Warehouse. This is expected behavior.

- DWX-12598 DESCRIBE EXTENDED does not show bucketing information about an Iceberg table.

- This issue affects the Hive and Impala Virtual Warehouses. The Hive Virtual Warehouse, which supports DESCRIBE FORMATTED of an Iceberg table, has the same problem.

- CDPD-40749 IMPALA-11346 Iceberg query failure

- When a partitioned legacy table is converted to Iceberg, Impala queries of the table might fail. The problem occurs when the query contains a WHERE clause plus a predicate on the partition columns.

Carried over from the previous release: General

- TSB 2023-656: Cloudera Data Warehouse does not clean up old Helm releases

- Cloudera Data Warehouse (CDW) uses the Helm release manager to deploy component releases into the customer's Kubernetes cluster. However, CDW currently keeps the Helm release history without any limit. Because Helm in CDW stores releases as secrets, this behavior can lead to a very high number of secrets (each Impala or Hive scale-up or scale-down creates a new release; clusters have been seen with 6000+ secrets). Parsing and decoding such a high number of Helm releases can require a significant amount of memory. For example, a cluster with 6000+ secrets could use 6-7 GB or more of memory. This may result in performance degradation, instability and/or higher cloud cost, because several CDW cluster components rely on secrets and the memory footprint of those components increases significantly.

This issue is addressed in the CDW version 1.5.1-b110 (workload image version 2022.0.11.0-122). Cloudera highly recommends upgrading Apache Impala (Impala) and Apache Hive (Hive) in CDW to 2022.0.11.0-122 or higher versions to ensure performance, stability and/or cloud cost are not impacted by this behavior.

- Knowledge article

- For the latest update on this issue see the corresponding Knowledge article: TSB 2023-656: Cloudera Data Warehouse does not clean up old Helm releases.
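To gauge how much release history has accumulated, the revisions can be counted from the secret names. The following is a minimal sketch, not a Cloudera tool. It assumes Helm 3's default secret storage driver, which names each stored revision `sh.helm.release.v1.<release>.v<revision>`. The function name and the sample secret names are hypothetical, and the list of names would come from your own tooling (for example, the output of `kubectl get secrets`).

```python
import re
from collections import Counter

# Helm 3's default storage driver keeps one secret per release revision,
# named sh.helm.release.v1.<release>.v<revision>.
_HELM_SECRET = re.compile(
    r"^sh\.helm\.release\.v1\.(?P<release>.+)\.v(?P<revision>\d+)$"
)


def count_helm_revisions(secret_names):
    """Return a Counter mapping release name -> number of stored revisions."""
    counts = Counter()
    for name in secret_names:
        match = _HELM_SECRET.match(name)
        if match:  # skip secrets that are not Helm release records
            counts[match.group("release")] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical secret names, for illustration only.
    sample = [
        "sh.helm.release.v1.impala-executor.v1",
        "sh.helm.release.v1.impala-executor.v2",
        "sh.helm.release.v1.hiveserver2.v1",
        "default-token-abcde",
    ]
    for release, revisions in count_helm_revisions(sample).most_common():
        print(f"{release}: {revisions} revision(s)")
```

A release with thousands of stored revisions is a sign that the cluster is exposed to the memory growth described in this TSB.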

- DWX-6619 Browser auto-close not working on some browsers after token-based authentication for accessing CDW

- Firefox and Edge browser windows do not close automatically after successful authentication.

- DWX-9774 Database Catalog or Virtual Warehouse image version problem

- Background: In Cloudera Data Warehouse 2021.0.3-b27 - 2021.0.5-b36, you can choose any supported image version when you create a Database Catalog or Virtual Warehouse, assuming you have the CDW_VERSIONED_DEPLOY entitlement.

- DWX-5841: Virtual Warehouse endpoints are now restricted to TLS 1.2

- Problem: TLS 1.0 and 1.1 are no longer considered secure, so now Virtual Warehouse endpoints must be secured with TLS 1.2 or later, and then the environment that the Virtual Warehouse uses must be reactivated in CDW. This includes both Hive and Impala Virtual Warehouses. To reactivate the environment in the CDW UI:

- Deactivate the environment. See Deactivating AWS environments or Deactivating Azure environments.

- Activate the environment. See Activating AWS environments or Activating Azure environments.
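After reactivation, a client can confirm which protocol version an endpoint negotiates. The sketch below uses Python's standard `ssl` module and assumes you supply your own Virtual Warehouse endpoint hostname; the function names are illustrative and not part of CDW.

```python
import socket
import ssl


def make_tls12_context():
    """Build a client context that refuses anything older than TLS 1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host, port=443, timeout=10.0):
    """Connect to host:port and return the negotiated protocol, e.g. 'TLSv1.2'."""
    context = make_tls12_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        # server_hostname enables SNI and certificate host name checking.
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_tls_version("<your-endpoint-hostname>")` raises `ssl.SSLError` if the server only offers TLS 1.0 or 1.1, because the context's minimum version rules those out.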

- DWX-5742: Upgrading multiple Hive and Impala Virtual Warehouses or Database Catalogs at the same time fails

- Problem: Upgrading multiple Hive and Impala Virtual Warehouses or Database Catalogs at the same time fails.

Carried over from the previous release: AWS

- AWS availability zone inventory issue

- In this release, you can select a preferred availability zone when you create a Virtual Warehouse; however, AWS might not be able to provide enough compute instances of the type that Cloudera Data Warehouse needs.

- DWX-8573: EKS upgrade from DWX UI to K8s v1.20 fails in reduced permissions mode

- In reduced permissions mode, attempting to update Amazon Elastic Kubernetes Service (EKS) to Kubernetes version 1.20 fails with an AccessDenied error.

- DWX-7613: CloudFormation stack creation using AWS CLI broken for CDW Reduced Permissions Mode

- Problem: If you use the AWS CLI to create a CloudFormation stack to activate an AWS environment for use in Reduced Permissions Mode, it fails and returns the following error:

An error occurred (ValidationError) when calling the CreateStack operation: Parameters: [SdxDDBTableName] must have values

The default value of SdxDDBTableName is not being set. If you create the CloudFormation stack using the AWS Console, there is no problem.

- ENGESC-8271: Helm 2 to Helm 3 migration fails on AWS environments where the overlay network feature is in use and namespaces are stuck in a terminating state

- Problem: While using the overlay network feature for AWS environments and after attempting to migrate an AWS environment from Helm 2 to Helm 3, the migration process fails.

- DWX-6970: Tags do not get applied in existing CDW environments

- Problem: You may see the following error while trying to apply tags to Virtual Warehouses in an existing CDW environment:

An error occurred (UnauthorizedOperation) when calling the CreateTags operation: You are not authorized to perform this operation and Compute node tagging was unsuccessful. This happens because the ec2:CreateTags privilege is missing from your AWS cluster-autoscaler inline policy for the NodeInstanceRole role.
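To check whether an inline policy already grants the missing privilege, the policy JSON can be inspected offline. This is a simplified sketch with a hypothetical helper name: it only looks at the `Effect` and `Action` elements of each statement in a standard IAM policy document and does not evaluate `Deny` statements, `Resource`, or `Condition`.

```python
import fnmatch


def policy_allows(policy, action):
    """Return True if any Allow statement in an IAM policy document lists `action`."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        if isinstance(actions, str):  # Action may be a string or a list
            actions = [actions]
        # IAM action names are case-insensitive and may contain * wildcards.
        if any(
            fnmatch.fnmatchcase(action.lower(), pattern.lower())
            for pattern in actions
        ):
            return True
    return False


if __name__ == "__main__":
    # Hypothetical policy content, for illustration only.
    inline_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["autoscaling:DescribeAutoScalingGroups"],
                "Resource": "*",
            }
        ],
    }
    print(policy_allows(inline_policy, "ec2:CreateTags"))
```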

Carried over from the previous release: Azure

- Incorrect diagnostic bundle location

- Problem: The path you see to the diagnostic bundle is wrong when you create a Virtual Warehouse, collect a diagnostic bundle of log files for troubleshooting, and click . Your storage account name is missing from the beginning of the path.

- Changed environment credentials not propagated to AKS

- Problem: When you change the credentials of a running cloud environment using the Management Console, the changes are not automatically propagated to the corresponding active Cloudera Data Warehouse (CDW) environment. As a result, the Azure Kubernetes Service (AKS) uses old credentials and may not function as expected, resulting in inaccessible Hive or Impala Virtual Warehouses.

Workaround: To resolve this issue, you must manually synchronize the changes with the CDW AKS resources. To synchronize the updated credentials, see Update AKS cluster with new service principal credentials in the Azure product documentation.

Carried over from the previous release: Hue Query Editor

- TSB 2023-670: Hue frontend may become stuck in CrashLoopBackoff on CDW running on Azure

- Hue frontend for Apache Impala (Impala) and Apache Hive (Hive) Virtual Warehouses created in Cloudera Data Warehouse (CDW) can be stuck in a CrashLoopBackoff state on Microsoft Azure (Azure) platform, making it impossible to reach the Virtual Warehouse through Hue. In this case, the following error message is displayed:

kubelet Error: failed to create containerd task: failed to create shim task: OCI runtime create failed: runc create failed: unable to start container process: exec: "run_httpd.sh": cannot run executable found relative to current directory: unknown

The root cause of the issue is a recent node image upgrade in Azure made by Microsoft, where the version of the container runtime (containerd) was upgraded to 1.6.18. This version upgraded Go SDK to 1.19.6, where the behavior of how program execution works has changed. Due to security concerns, the newer version of Go SDK does not resolve a program using an implicit or explicit path entry relative to the current directory (for more information, see the Go documentation), and containerd indirectly uses this Go exec API. The Docker command of the Hue frontend relies on the former behavior. Therefore, the command fails on recent node images, because it can no longer find the expected executable in the current working directory of the containers.

Cloudera first noticed the aforementioned containerd version in the Azure Kubernetes Service (AKS) released on 2023-03-05. Every node image that is newer than the version released on 2023-03-05 is affected by this issue. Unfortunately, Cloudera does not have any insight into when Microsoft rolls out new node images in a given region. Therefore, it is possible that in some regions, the older node images are still in use, where the issue does not arise until a given regional update is applied to the node images.

CDW environments that are already activated and running are not affected as long as customers do not trigger a node image upgrade to the latest available version, either using the Azure Command Line Interface (CLI) or on the Azure portal. If the node image is upgraded to the latest version, then the Virtual Warehouses also need to be upgraded to the latest CDWH-2023.0.14.0 version. Newly created CDW environments are not affected, but customers are advised to not choose a Hue version lower than CDWH-2023.0.14.0 during any Virtual Warehouse creation because such a configuration is affected by this issue.

- Knowledge article

- For the latest update on this issue see the corresponding Knowledge article: TSB 2023-670: Hue frontend may become stuck in CrashLoopBackoff on CDW running on Azure.

- IMPALA-11447 Selecting certain complex types in Hue crashes Impala

- Queries that have structs/arrays in the select list crash Impala if initiated by Hue.

- DWX-8460: Unable to delete, move, or rename directories within the S3 bucket from Hue

- Problem: You may not be able to rename, move, or delete directories within your S3 bucket from the Hue web interface. This is because of an underlying issue, which will be fixed in a future release.

- DWX-6674: Hue connection fails on cloned Impala Virtual Warehouses after upgrading

- Problem: If you clone an Impala Virtual Warehouse from a recently upgraded Impala Virtual Warehouse, and then try to connect to Hue, the connection fails.

- DWX-5650: Hue only makes the first user a superuser for all Virtual Warehouses within a Data Catalog

- Problem: Hue marks the user that logs in to Hue from a Virtual Warehouse for the first time as the Hue superuser. But if multiple Virtual Warehouses are connected to a single Data Catalog, then the first user that logs in to any one of the Virtual Warehouses within that Data Catalog is the Hue superuser.

For example, consider that a Data Catalog DC-1 has two Virtual Warehouses VW-1 and VW-2. If a user named John logs in to Hue from VW-1 first, then he becomes the Hue superuser for all the Virtual Warehouses within DC-1. At this time, if Amy logs in to Hue from VW-2, Hue does not make her a superuser within VW-2.
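The John and Amy example can be modeled with a few lines of code. This is a toy model of the behavior described above, not Hue's implementation; the class and method names are hypothetical.

```python
class HueSuperuserModel:
    """Toy model: the first login anywhere in a Data Catalog becomes superuser."""

    def __init__(self, warehouse_to_catalog):
        # Maps each Virtual Warehouse name to the Data Catalog it is connected to.
        self._catalog_of = dict(warehouse_to_catalog)
        # Maps each Data Catalog to the user who logged in first.
        self._superuser = {}

    def login(self, user, warehouse):
        """Record a login and return True if `user` is the superuser."""
        catalog = self._catalog_of[warehouse]
        # setdefault stores the user only if the catalog has no superuser yet.
        self._superuser.setdefault(catalog, user)
        return self._superuser[catalog] == user


if __name__ == "__main__":
    model = HueSuperuserModel({"VW-1": "DC-1", "VW-2": "DC-1"})
    print(model.login("John", "VW-1"))  # John logs in first
    print(model.login("Amy", "VW-2"))   # Amy logs in later from VW-2
```

Because the superuser is keyed by Data Catalog rather than by Virtual Warehouse, Amy's first login to VW-2 does not make her a superuser there.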

Carried over from the previous release: Database Catalog

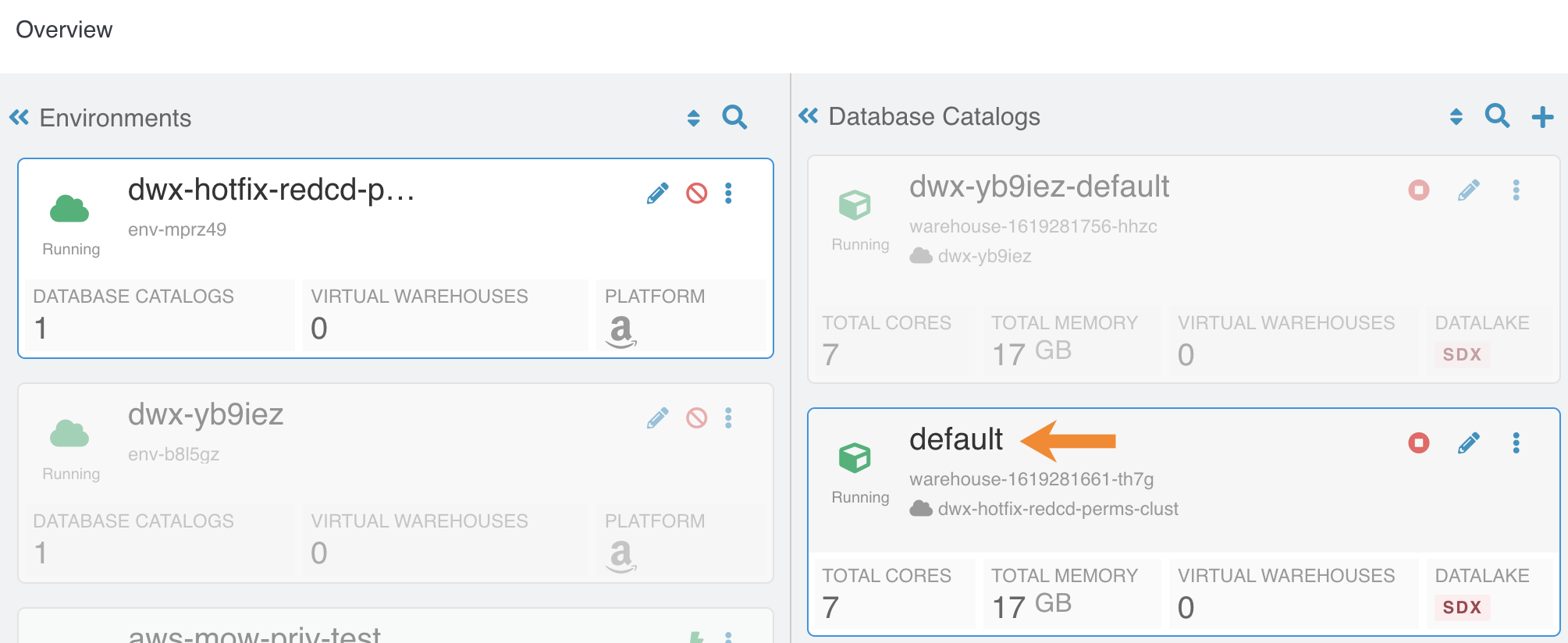

- DWX-7349: In reduced permissions mode, default Database Catalog name does not include the environment name

- Problem: When you activate an AWS environment in reduced permissions mode, the default Database Catalog name does not include the environment name.

This does not cause collisions because each Database Catalog named "default" is associated with a different environment. For more information about reduced permissions mode, see Reduced permissions mode for AWS environments.

- DWX-6167: Maximum connections reached when creating multiple Database Catalogs

- Problem: After creating 17 Database Catalogs on one AWS environment, Virtual Warehouses failed to start.

Carried over from the previous release: Hive Virtual Warehouse

- DWX-10271: Missing log section in Hive query results

- In a Hive Virtual Warehouse, when you run a query in Hue, the query results do not contain a logs section.

- DWX-8118: INSERT INTO command fails under certain circumstances

- This problem affects users who have a PostgreSQL database as the backend Hive database. If you create a table A and create a table B as select (CTAS) from an empty table A, inserting values into table B fails as follows:

Error while compiling statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.StatsTask.org.apache.thrift.transport.TTransportException

- DWX-5841: Virtual Warehouse endpoints are now restricted to TLS 1.2

- Problem: TLS 1.0 and 1.1 are no longer considered secure, so now Virtual Warehouse endpoints must be secured with TLS 1.2 or later, and then the environment that the Virtual Warehouse uses must be reactivated in CDW. This includes both Hive and Impala Virtual Warehouses. To reactivate the environment in the CDW UI:

- Deactivate the environment. See Deactivating AWS environments or Deactivating Azure environments.

- Activate the environment. See Activating AWS environments or Activating Azure environments.

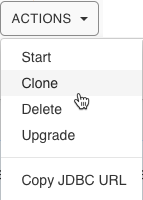

- DWX-5926: Cloning an existing Hive Virtual Warehouse fails

- Problem: If you have an existing Hive Virtual Warehouse that you clone by selecting Clone from the drop-down menu, the cloning process fails. This does not apply to creating a new Hive Virtual Warehouse.

- DWX-2690: Older versions of Beeline return SSLPeerUnverifiedException when submitting a query

- Problem: When submitting queries to Virtual Warehouses that use Hive, older Beeline clients return an SSLPeerUnverifiedException error:

javax.net.ssl.SSLPeerUnverifiedException: Host name ‘ec2-18-219-32-183.us-east-2.compute.amazonaws.com’ does not match the certificate subject provided by the peer (CN=*.env-c25dsw.dwx.cloudera.site) (state=08S01,code=0)
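The error arises because the host name the client connected to does not fall under the wildcard name in the certificate. The sketch below illustrates the usual rule that a leading `*` covers exactly one DNS label. It is a simplified illustration with a hypothetical function name, not the check that Beeline or the JDK performs, and it ignores subject alternative names.

```python
def matches_certificate_name(hostname, pattern):
    """Return True if `hostname` matches a certificate name such as *.example.com."""
    host_labels = hostname.lower().rstrip(".").split(".")
    pattern_labels = pattern.lower().rstrip(".").split(".")
    # A wildcard stands for exactly one label, so label counts must agree.
    if len(host_labels) != len(pattern_labels):
        return False
    if pattern_labels[0] == "*":
        return host_labels[1:] == pattern_labels[1:]
    return host_labels == pattern_labels


if __name__ == "__main__":
    certificate_name = "*.env-c25dsw.dwx.cloudera.site"
    # Host name taken from the error message above.
    print(matches_certificate_name(
        "ec2-18-219-32-183.us-east-2.compute.amazonaws.com", certificate_name
    ))
```

A client that connects using a host name under the certificate's domain passes this kind of check, whereas the EC2 host name shown in the error does not.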

Carried over from the previous release: Impala Virtual Warehouse

- IMPALA-11045 Impala Virtual Warehouses might produce an error when querying transactional (ACID) tables even after you enabled the automatic metadata refresh (version DWX 1.1.2-b2008)

- Problem: Impala doesn't open a transaction for select queries, so you might get a FileNotFound error after compaction even though you refreshed the metadata automatically.

- Impala Virtual Warehouses might produce an error when querying transactional (ACID) tables (DWX 1.1.2-b1949 or earlier)

- Problem: If you are querying transactional (ACID) tables with an Impala Virtual Warehouse and compaction is run on the compacting Hive Virtual Warehouse, the query might fail. The compacting process deletes files and the Impala Virtual Warehouse might not be aware of the deletion. Then when the Impala Virtual Warehouse attempts to read the deleted file, an error can occur. This situation occurs randomly.

- Do not use the start/stop icons in Impala Virtual Warehouses version 7.2.2.0-106 or earlier

- Problem: If you use the start/stop icons in Impala Virtual Warehouses version 7.2.2.0-106 or earlier, the Virtual Warehouse might become unusable, and you might need to re-create it.

- DWX-6674: Hue connection fails on cloned Impala Virtual Warehouses after upgrading

- Problem: If you clone an Impala Virtual Warehouse from a recently upgraded Impala Virtual Warehouse, and then try to connect to Hue, the connection fails.

- DWX-5841: Virtual Warehouse endpoints are now restricted to TLS 1.2

- Problem: TLS 1.0 and 1.1 are no longer considered secure, so now Virtual Warehouse endpoints must be secured with TLS 1.2 or later, and then the environment that the Virtual Warehouse uses must be reactivated in CDW. This includes both Hive and Impala Virtual Warehouses. To reactivate the environment in the CDW UI:

- Deactivate the environment. See Deactivating AWS environments or Deactivating Azure environments.

- Activate the environment. See Activating AWS environments or Activating Azure environments.

- DWX-5276: Upgrading an older version of an Impala Virtual Warehouse can result in error state

- Problem: If you upgrade an older version of an Impala Virtual Warehouse (DWX 1.1.1.1-4) to the latest version, the Virtual Warehouse can get into an Updating or Error state.

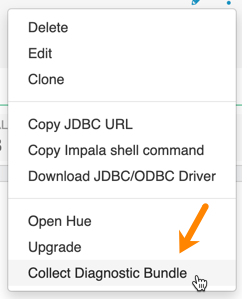

- DWX-3914: Collect Diagnostic Bundle option does not work on older environments

- The Collect Diagnostic Bundle menu option in Impala Virtual Warehouses does not work for older environments.

- Data caching:

- This feature is limited to 200 GB per executor, multiplied by the total number of executors.

- Sessions with Impala continue to run for 15 minutes after the connection is disconnected.

- When a connection to Impala is disconnected, the session continues to run for 15 minutes so that the user or client can reconnect to the same session by presenting the session_token. After 15 minutes, the client must re-authenticate to Impala to establish a new connection.