File system for content repository

If your function is going to process very large files, the data may not be held in memory, which would cause errors. In that case you may want to attach a File System to your Lambda and tell Cloudera Data Flow Functions to use the File System as the Content Repository.

Configuration

If you want to attach a File System to your Lambda, you are required to connect your Lambda to the VPC where the File System is provisioned.

However, by default, Lambda runs your functions in a secure VPC with access to AWS services and the internet. Lambda owns this VPC, which is not connected to your account's default VPC. When you connect a function to a VPC in your account, the function cannot access the internet unless your VPC provides access. Access to the internet is required so that Cloudera Data Flow Functions can request the Cloudera Data Flow Catalog and retrieve the flow definition to be executed.

In such a situation, a recommended option is to create a VPC with a public subnet and multiple private subnets (to increase resiliency across multiple availability zones). You can then create an Internet Gateway and NAT Gateway to give your Lambda access to the internet. For more information, see the AWS Knowledge Center.

Concurrent access

Depending on your Lambda’s configuration and the throughput of the events triggering the function, you may have multiple instances of the Lambda running concurrently and each instance would share the same File System. For more information, see this AWS Compute Blog article.

To ensure each instance of the function has its own “content repository”, you can

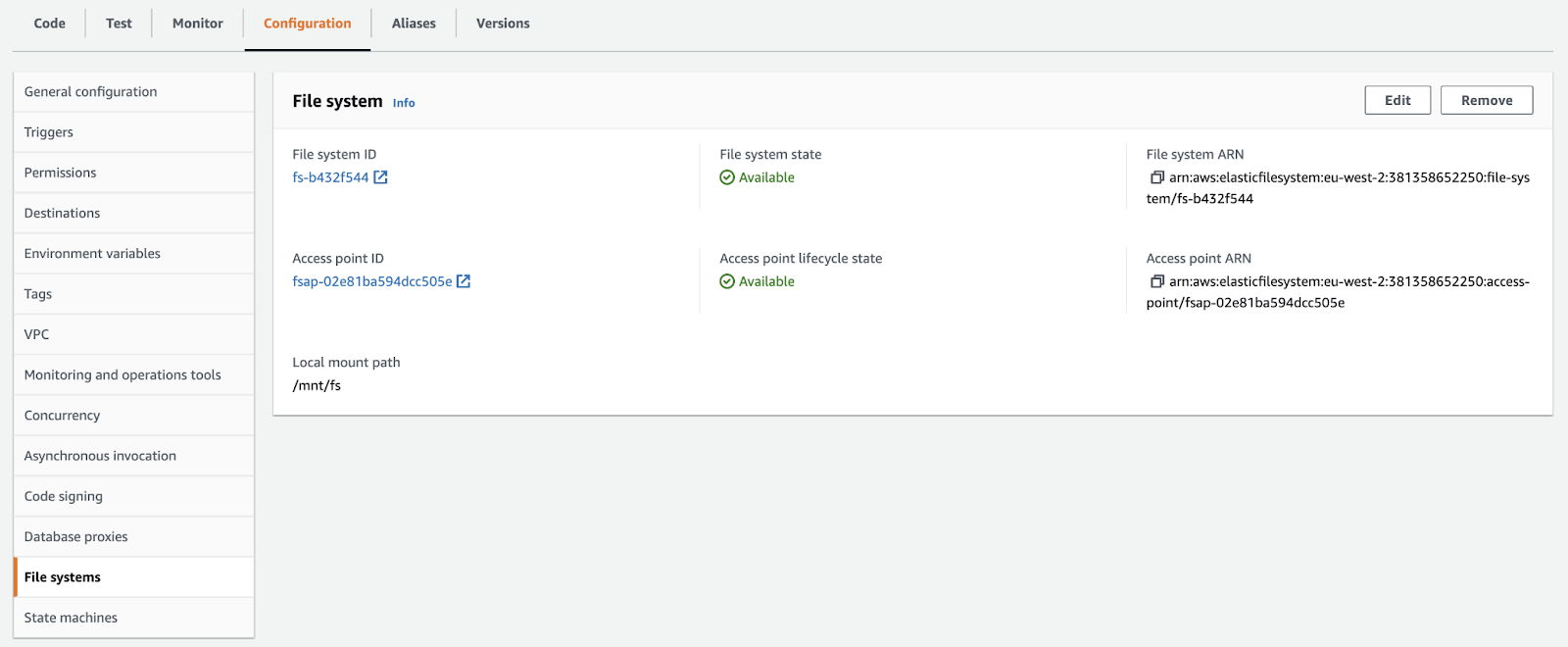

use the CONTENT_REPO environment variable when configuring your Lambda and

give it the value of the Local mount path of the attached File

System:

In this case, each instance of the function will create its content repository on

the File System under the following path: ${CONTENT_REPO}/<function

name>/content_repository/<epoch timestamp>-<UUID>

You can share the same File System across multiple Lambda functions if desired depending on the IO performances you need.